1. Introduction

Computer vision is an artificial intelligence subject that focuses on teaching computers to analyze and comprehend visual input from their surroundings. Computer vision techniques employ features to define, represent, and describe pictures and videos in an attempt to understand them and make useful predictions considering them. These features are roughly classified as low-level and high-level.

In this tutorial, we’ll introduce the two feature categories and analyze their differences and usages.

2. Computer Vision Features

Features are crucial in computer vision because they facilitate the representation and analysis of visual input. Algorithms can find patterns and correlations in photos and videos by extracting and describing them with features and then making predictions or conclusions based on this knowledge.

Computer vision algorithms may reach great levels of accuracy and understanding of visual input by integrating low and high-level information.

A computer vision algorithm, for instance, may identify the existence of objects in an image by using low-level characteristics such as edges and corners. Next, the system might utilize high-level characteristics like objects and scenes to classify and identify the content or scenario of the image.

3. Low-Level Features

Common strategies for learning low-level features include edge, corner, color detection, and color histogram analysis. Simple procedures, such as the Canny edge detector, the Harris corner detector, and the K-means clustering algorithm, can be employed to execute these strategies and extract these features.

4. High-Level Features

Convolutional neural networks (CNNs) and recurrent neural networks (RNNs) are two major strategies for learning high-level features. These techniques may learn to extract and describe high-level features by being trained on big datasets of annotated images or videos.

Furthermore, pre-trained models that have previously been trained with large chunks of data are another technique for learning high-level characteristics. So, these models may be fine-tuned for special purposes and tasks and, therefore, can deliver accurate results with hardly any training.

5. Differences Between Low and High-Level Features

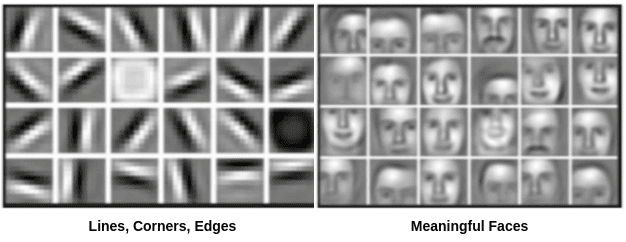

The main difference between low and high-level features lies in the fact that the first one is characteristics extracted from an image, such as colors, edges, and textures. In contrast, the second one is extracted from low-level features and denotes more semantically meaningful concepts. This basic difference can be easily understood by the diagram below:

5.1. Content

Furthermore, low-level features are more closely related to the raw pixel data of the image and are more sensitive to noise and changes in the image. In contrast, high-level features are more robust and abstract and can be more helpful for tasks that require a higher level of understanding of the image content. Also, high-level features have a clear semantic meaning and can be more easily interpreted by humans.

5.2. Scale

Low-level attributes are typically retrieved at a local scale, which means they are vulnerable to little modifications of the picture, like lighting or orientation. High-level characteristics, on the other hand, are frequently retrieved at a global scale, which means that they take into account the whole picture or video and are more robust.

5.3. Resources

Moreover, low-level feature extraction usually takes fewer system resources than high-level feature extraction, as the latter frequently requires more advanced machine learning methods.

5.4. Task Specificity

Low-level characteristics are frequently task-specific, which means they are appropriate for a certain set of activities. High-level features, on the other hand, are frequently more generic and suitable for a broader range of jobs. Lastly, low-level features are useful for tasks such as image segmentation, object detection, and feature matching. In contrast, high-level features are useful for tasks such as image classification, object recognition, and scene understanding.

6. Conclusion

In this article, we walked through low and high-level features in computer vision. In particular, we focused on the differences between the two feature categories and introduced the main ways of extracting each feature and their basic usage as well.