Last updated: March 18, 2024

In this tutorial, we’ll show how to turn a flat list into a tree or a forest.

At input, we have a list of pairs representing a parent-child hierarchy. Each is a structure (child_id, parent_id), where child_id is the ID of a node and parent_id is the ID of its parent. Essentially, the pairs denote directed edges between the nodes in a hierarchy.

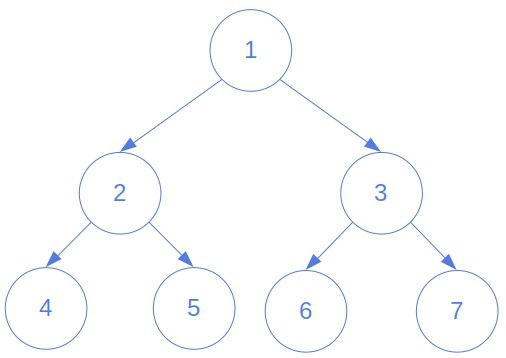

Further, the pairs may appear in any order. For example, suppose node 2 is the parent of node 4 in a tree. The pair (4, 2) may still come before any pair in which node 2 appears as a child.

What’s more, there may be several trees in the hierarchy. A node that appears as a parent but never as a child in the input list represents the root of an independent tree. Our goal is to turn the list into a set of trees, i.e., a forest if there’s more than one, and identify their roots.

The IDs may be of any type: integer numbers, string data, tuples, and so on. So, we’ll consider the general case and present solutions that work with all data types.

A straightforward way is to process the pairs one by one, find the parent’s node in the forest constructed so far, and attach the child to it. If the parent isn’t there yet, we create the corresponding node first. But that’s only a partial solution. Organizing the nodes into proper trees doesn’t mean much if we don’t identify their roots.

To find the roots, we use the following observation. If an ID appears as a child, we can rule it out as a potential root. So, if we start by considering all the nodes as candidate roots by default and rule out a candidate if we find its parent in the input list, only the actual roots will remain upon processing the whole list.
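As a minimal Python sketch of this observation (the function name `find_root_ids` and the sample pairs are illustrative assumptions, not part of the original pseudocode), we can compute the root IDs as the IDs that occur as parents but never as children. It assumes the IDs are hashable so that they can be stored in sets:

```python
def find_root_ids(pairs):
    """Return the IDs that appear as parents but never as children."""
    child_ids = {child_id for child_id, _ in pairs}
    parent_ids = {parent_id for _, parent_id in pairs}
    # Every ID seen as a child is ruled out as a root.
    return parent_ids - child_ids


# Hypothetical sample input: two trees, rooted at 1 and 5.
pairs = [(4, 2), (2, 1), (3, 1), (6, 5)]
print(sorted(find_root_ids(pairs)))  # prints [1, 5]
```

This only identifies the roots. Building the trees themselves still requires attaching each child to its parent, which is what the algorithms below do.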

    algorithm BuildingForestFromFlatList(list):
        // INPUT
        //    list = a list of child-parent id pairs
        //           [(child_id_i, parent_id_i) | i in {1, 2, ..., n}]
        // OUTPUT
        //    The forest of the trees contained in list

        roots <- an empty set

        for (child_id, parent_id) in list:
            child_node <- FIND_OR_CREATE(child_id)
            parent_node <- FIND_OR_CREATE(parent_id)
            parent_node.children <- parent_node.children + {child_node}
            child_node.parent <- parent_node

            if parent_node.parent = NONE:
                roots <- roots + {parent_node}
            if child_node in roots:
                roots <- roots - {child_node}

        return roots

    algorithm FIND_OR_CREATE(id):
        // INPUT
        //    id = the id to find or create
        // OUTPUT
        //    The node reachable from roots with the given id

        node <- find the node reachable from roots, whose ID is id
        if node = NONE:
            node <- create an empty node
            node.id <- id
        return node

Let’s prove the algorithm’s correctness. Our loop invariant is that $roots$ contains exactly the nodes seen so far that have no known parent. We call such nodes candidate roots.

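The invariant is trivially true before the loop since we initialize $roots$ to be an empty set.

Now, let’s suppose that the invariant holds before the $i$-th iteration. Is it so at the beginning of the next one as well? We analyze the cases the pseudocode distinguishes for the pair (child_id, parent_id). If the parent node has no known parent, it’s a candidate root, so it belongs to $roots$ after the iteration, whether it was already there or we have just added it. If the parent node already has a parent, it isn’t in $roots$, and we don’t add it. The child node now has a known parent, so we remove it if it was a candidate root; if it’s new, we never add it in the first place.

So, we add to $roots$ a new node only if it’s a candidate root and remove a node that turns out to have a parent. Therefore, the invariant holds before the next iteration. At the end of the algorithm, $roots$ will contain only the actual roots and no other nodes.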
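To make the invariant concrete, here is a small Python sketch that tracks only IDs (not full nodes) and asserts the invariant at the end of every iteration. The function name `candidate_roots_with_check` and the bookkeeping dictionary `parent_of` are assumptions introduced for this illustration:

```python
def candidate_roots_with_check(pairs):
    """Maintain the set of candidate root IDs and verify the loop invariant."""
    parent_of = {}  # child ID -> parent ID, for the pairs read so far
    seen = set()    # every ID read so far
    roots = set()   # candidate roots: seen IDs without a known parent

    for child_id, parent_id in pairs:
        parent_of[child_id] = parent_id
        seen.update((child_id, parent_id))

        if parent_id not in parent_of:  # the parent has no known parent
            roots.add(parent_id)
        roots.discard(child_id)         # the child has a parent now

        # Invariant: roots holds exactly the seen IDs with no known parent.
        assert roots == {i for i in seen if i not in parent_of}

    return roots


print(sorted(candidate_roots_with_check([(4, 2), (2, 1), (3, 1), (6, 5)])))  # prints [1, 5]
```

The assertion recomputes the expected set from scratch, so it is only meant for checking the reasoning on small inputs, not for production use.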

The lower bound of time complexity is $\Omega(n)$ since we have to process $n$ pairs, where $n$ is the input list’s length, i.e., the number of edges in the forest. Since each non-root node has exactly one parent, $n$ also approximates the number of nodes. More precisely, $n$ is the difference between the number of nodes and the number of trees because the roots have no incoming edges and never appear as children in the list.
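The relation between the number of edges, nodes, and trees can be checked on a small example. The helper name `edges_nodes_trees` and the sample input below are hypothetical, and the sketch assumes a valid input in which every node has at most one parent:

```python
def edges_nodes_trees(pairs):
    """Count the edges, distinct nodes, and trees described by the pairs."""
    node_ids = {node_id for pair in pairs for node_id in pair}
    child_ids = {child_id for child_id, _ in pairs}
    num_trees = len(node_ids - child_ids)  # one root per tree
    return len(pairs), len(node_ids), num_trees


n, num_nodes, num_trees = edges_nodes_trees([(4, 2), (2, 1), (3, 1), (6, 5)])
print(n, num_nodes, num_trees)  # prints 4 6 2
assert n == num_nodes - num_trees
```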

The upper bounds depend on how we implement FIND_OR_CREATE. If we don’t use an auxiliary data structure to memorize the (ID, node) pairs for quick access, we need no memory beyond the forest itself, but the time complexity becomes quadratic. That’s because, in the worst case, we’ll traverse the whole forest to find the node with the given ID. So, we’ll visit $O(i)$ nodes upon reading the $i$-th pair from the list, and the worst-case time complexity will be $O(n^2)$:

$$\sum_{i=1}^{n} O(i) = O\left(\frac{n(n+1)}{2}\right) = O(n^2)$$
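The variant without an auxiliary structure could look roughly like the following Python sketch. The `Node` class, the function names, and the use of a list for the roots are assumptions made for illustration. Each lookup walks the forest built so far, which is where the quadratic worst case comes from:

```python
class Node:
    def __init__(self, node_id):
        self.id = node_id
        self.parent = None
        self.children = []


def find_in_forest(roots, node_id):
    """Search every tree reachable from roots; linear in the forest size."""
    stack = list(roots)
    while stack:
        node = stack.pop()
        if node.id == node_id:
            return node
        stack.extend(node.children)
    return None


def build_forest_naive(pairs):
    roots = []  # a plain list, so the IDs only need to support ==
    for child_id, parent_id in pairs:
        child = find_in_forest(roots, child_id) or Node(child_id)
        parent = find_in_forest(roots, parent_id) or Node(parent_id)
        parent.children.append(child)
        child.parent = parent

        if parent.parent is None and parent not in roots:
            roots.append(parent)
        if child in roots:
            roots.remove(child)
    return roots


forest = build_forest_naive([(4, 2), (2, 1), (3, 1), (6, 5)])
print(sorted(root.id for root in forest))  # prints [1, 5]
```

Because nothing is hashed here, this version works even for IDs that are not hashable, at the cost of the slower lookups.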

If we use a hash table with node IDs as keys and pointers to the nodes as values, the average-case time complexity of FIND_OR_CREATE will be $O(1)$. So, the whole algorithm will be linear in the average case:

$$\sum_{i=1}^{n} O(1) = O(n)$$

Everything in the pseudocode remains the same, except that we now maintain a hash table and use it in FIND_OR_CREATE:

    algorithm BuildingForestFromFlatList(list):
        // INPUT
        //    list = a list of child-parent id pairs
        //           [(child_id_i, parent_id_i) | i in {1, 2, ..., n}]
        // OUTPUT
        //    The forest of the trees contained in list

        roots <- an empty set
        hash_table <- create an empty hash table

        for (child_id, parent_id) in list:
            child_node <- FIND_OR_CREATE(child_id, hash_table)
            parent_node <- FIND_OR_CREATE(parent_id, hash_table)
            parent_node.children <- parent_node.children + {child_node}
            child_node.parent <- parent_node
            hash_table[child_id] <- child_node
            hash_table[parent_id] <- parent_node

            if parent_node.parent = NONE:
                roots <- roots + {parent_node}
            if child_node in roots:
                roots <- roots - {child_node}

        return roots

    algorithm FIND_OR_CREATE(id, hash_table):
        // INPUT
        //    id = the id to find or create
        //    hash_table = a hash table with the nodes and their IDs
        // OUTPUT
        //    The new or already existing node with the specified ID

        if id in hash_table.keys:
            node <- hash_table[id]
        else:
            node <- create an empty node
            node.id <- id
        return node

Moreover, since the set of keys is fully determined by the input list and doesn’t change afterward, we can achieve perfect hashing. That means that the search will be $O(1)$ even in the worst case. Consequently, our algorithm for building trees will become linear.
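A minimal Python sketch of the hash-table variant follows, with the built-in `dict` standing in for the hash table. The `Node` class and the function names are assumptions for illustration, and the IDs are assumed to be hashable. Note that `dict` gives average-case constant-time lookups, not the perfect hashing discussed above:

```python
class Node:
    def __init__(self, node_id):
        self.id = node_id
        self.parent = None
        self.children = []


def find_or_create(nodes, node_id):
    """Return the node stored under node_id, creating and storing it if absent."""
    node = nodes.get(node_id)
    if node is None:
        node = Node(node_id)
        nodes[node_id] = node
    return node


def build_forest(pairs):
    nodes = {}     # ID -> Node, the hash table
    roots = set()  # candidate roots (Node objects hash by identity)
    for child_id, parent_id in pairs:
        child = find_or_create(nodes, child_id)
        parent = find_or_create(nodes, parent_id)
        parent.children.append(child)
        child.parent = parent

        if parent.parent is None:
            roots.add(parent)
        roots.discard(child)
    return roots


forest = build_forest([(4, 2), (2, 1), (3, 1), (6, 5)])
print(sorted(root.id for root in forest))  # prints [1, 5]
```

Unlike the pseudocode, this sketch stores a new node in the table inside `find_or_create` rather than in the main loop. The effect is the same, and it keeps the table update in one place.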

There’s another way to approach this problem. We can regard the input array as a list of edges in a (possibly disconnected) graph. Upon reading all the edges, we start a depth-first traversal from an arbitrary node and visit all the other nodes that belong to the same tree. The one with no parent represents the tree’s root. Repeating the process until we reach all the nodes, we identify the trees as connected components and collect their roots. The catch is that we have to maintain edges in both directions to visit the ancestors of the start node if we start the traversal from a non-root vertex.

Here’s the pseudocode:

    algorithm BuildForestFromFlatList(list):
        // INPUT
        //    list = a list of child-parent id pairs
        // OUTPUT
        //    The forest of the trees contained in list

        // read the list
        graph <- initialize an empty graph
        for (child_id, parent_id) in list:
            if child_id not in graph.nodes:
                graph.nodes <- add child_id to graph.nodes
            if parent_id not in graph.nodes:
                graph.nodes <- add parent_id to graph.nodes
            graph.nodes[child_id].parent <- graph.nodes[parent_id]
            graph.nodes[parent_id].children.add(graph.nodes[child_id])

        roots <- an empty set
        for node in graph.nodes:
            if node.visited is false:
                while node.parent != NONE:
                    node <- node.parent
                roots <- roots + {node}
                DepthFirst(node)

        return roots

DepthFirst is based on Depth-First Search (DFS):

    algorithm DepthFirst(node):
        // INPUT
        //    node = a node in the graph
        // OUTPUT
        //    All the nodes in the tree to which the input node belongs
        //    are visited

        node.visited <- true
        for child in node.children:
            DepthFirst(child)

Reading the list and storing the nodes is $O(n)$. Depth-first traversal doesn’t visit any node twice. So, the whole algorithm’s complexity may be linear. But it depends on the way we implement $graph$, in particular, on whether we represent the edges with an adjacency matrix or adjacency lists.

In the former case, the $children$ attribute is a boolean row (we’ll have to map the IDs to natural numbers). For each node, we’ll need to pass through its whole row to find its child nodes, so the algorithm would be of quadratic complexity. On the other hand, if we use adjacency lists, we’ll visit each node and cross each edge once. Since a tree with $m$ nodes contains $m - 1$ edges, the $k$-th call to DepthFirst from the main loop will take $O(m_k)$ time, where $m_k$ is the number of nodes in the $k$-th tree. Summing over the trees, we get $O\left(\sum_k m_k\right) = O(n)$, since the forest has at most $2n$ nodes.
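The adjacency-list version of this approach might be sketched in Python as below. The `Node` class and the function name `build_forest_dfs` are illustrative assumptions, the IDs are assumed to be hashable, and the recursive DepthFirst is swapped for an explicit stack so that deep trees don’t hit Python’s recursion limit:

```python
class Node:
    def __init__(self, node_id):
        self.id = node_id
        self.parent = None
        self.children = []  # adjacency list of child nodes
        self.visited = False


def build_forest_dfs(pairs):
    # Read the list and store the edges in both directions.
    nodes = {}
    for child_id, parent_id in pairs:
        for node_id in (child_id, parent_id):
            if node_id not in nodes:
                nodes[node_id] = Node(node_id)
        nodes[child_id].parent = nodes[parent_id]
        nodes[parent_id].children.append(nodes[child_id])

    roots = []
    for node in nodes.values():
        if node.visited:
            continue
        # Climb to the root of the tree containing this node.
        while node.parent is not None:
            node = node.parent
        roots.append(node)
        # Iterative depth-first traversal marking the whole tree as visited.
        stack = [node]
        while stack:
            current = stack.pop()
            current.visited = True
            stack.extend(current.children)
    return roots


forest = build_forest_dfs([(4, 2), (2, 1), (3, 1), (6, 5)])
print(sorted(root.id for root in forest))  # prints [1, 5]
```

Each tree is climbed and traversed only once, because every node in it is marked as visited before the outer loop reaches the tree’s remaining nodes.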

The Depth-First approach can handle even the degenerate case where the graph has nodes but no edges. However, from the way the problem is defined, we see that such input is impossible. The reason is that a node enters the list only as part of a pair, so a forest without edges would correspond to an empty list, in which case there would be no nodes to process.

In this article, we presented two algorithms for building a forest of trees from a flat list that contains the pairs of the form (child id, parent id). One algorithm is an ad-hoc solution we devised specifically for this problem. The other is an adaptation of the method for identifying connected components via Depth-First Search. Both can run in linear time, provided we use appropriate data structures.