1. Introduction

Threads are usually described as lightweight processes. They run specific tasks within a process. Each thread has its own ID, set of registers, stack pointer, program counter, and stack.

However, threads share resources with one another within the process they belong to. In particular, they share the process’s memory (its address space) and file descriptors, and they compete for the same processor time.

In this tutorial, we’ll explain how resource sharing works between threads.

2. Resources and Threading

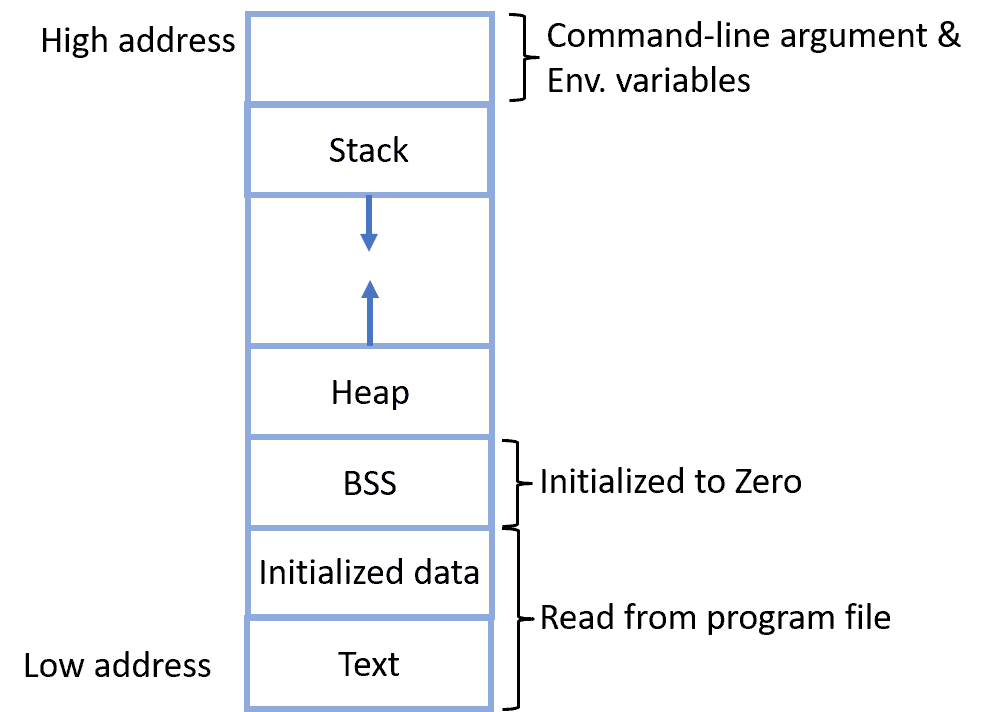

To understand resource sharing, let’s first take a look at the memory map of a process:

The memory is logically divided as follows:

- The stack contains local and temporary variables as well as return addresses.

- The heap contains dynamically allocated variables.

- Text (code) contains the instructions to execute.

- Initialized data contains global and static variables that have an explicit initial value.

- Uninitialized data, also known as BSS (block started by symbol), contains global and static variables that are declared but not explicitly initialized.

2.1. Threading Schemes

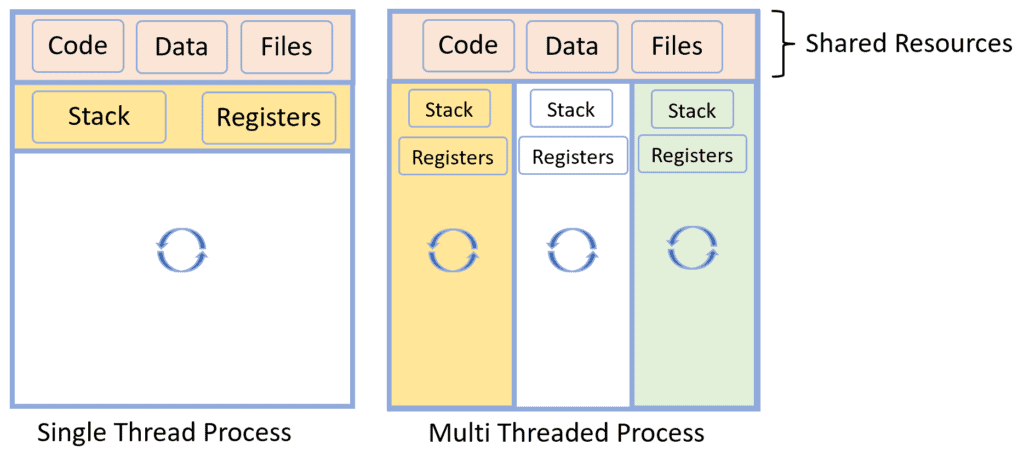

There are two threading schemes: a process can have a single thread or contain multiple threads.

In single-threaded processes, there’s only one thread. As a result, we need only one program counter, and there’s only one instruction set to execute. On the other hand, multi-threaded processes contain several threads which share some resources while keeping others private:

There are several benefits to using multiple threads:

- The operating system doesn’t need to allocate a new memory map for a new thread, since it has already created one for the underlying process and the thread can use it freely.

- There’s no need to create new structures to keep track of the state of open files, because the new thread uses the ones the process already has.
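The second benefit can be observed directly. The following Python sketch is only an illustration under our own assumptions: the function names `write_from_thread` and `demo_shared_descriptor` are hypothetical, and a pipe stands in for an open file. The main thread opens the pipe, and a worker thread writes to it using the very same descriptor number, without reopening anything:

```python
import os
import threading


def write_from_thread(fd: int, payload: bytes) -> None:
    # The descriptor number is valid in this thread because all threads
    # of a process use the same table of open file descriptors.
    os.write(fd, payload)


def demo_shared_descriptor() -> bytes:
    # The pipe is opened by the main thread.
    read_fd, write_fd = os.pipe()

    worker = threading.Thread(
        target=write_from_thread, args=(write_fd, b"hello from worker")
    )
    worker.start()
    worker.join()

    # Back in the main thread: read what the worker wrote.
    os.close(write_fd)
    data = os.read(read_fd, 1024)
    os.close(read_fd)
    return data


if __name__ == "__main__":
    print(demo_shared_descriptor())
```

Had the worker been a separate, unrelated process, the bare integer `write_fd` would have meant nothing to it.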

However, we need to make sure that threads access the shared resources safely, that is, that the resources always remain in a consistent state. For example, no two threads should be allowed to change the same file at the same time because of the risk of overwriting each other’s changes.
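One common way to get safe access is mutual exclusion. The sketch below is a minimal example, not the only possible approach. For brevity, it protects an in-memory counter rather than a file, and the names `run_counter` and `add` are our own. Each thread must hold the lock while it updates the shared value:

```python
import threading


def run_counter(num_threads: int = 4, increments: int = 10_000) -> int:
    counter = {"value": 0}   # shared by all threads started below
    lock = threading.Lock()  # guards every update of the counter

    def add() -> None:
        for _ in range(increments):
            # Only one thread at a time can be inside this block, so the
            # read-modify-write of the counter is never interleaved.
            with lock:
                counter["value"] += 1

    threads = [threading.Thread(target=add) for _ in range(num_threads)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return counter["value"]


if __name__ == "__main__":
    print(run_counter())
```

Without the lock, two threads could read the same old value and both write back the same new one, losing an update. This is the same kind of conflict as two threads overwriting each other’s changes to a file.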

3. Resource Sharing in Multi-Threaded Processes

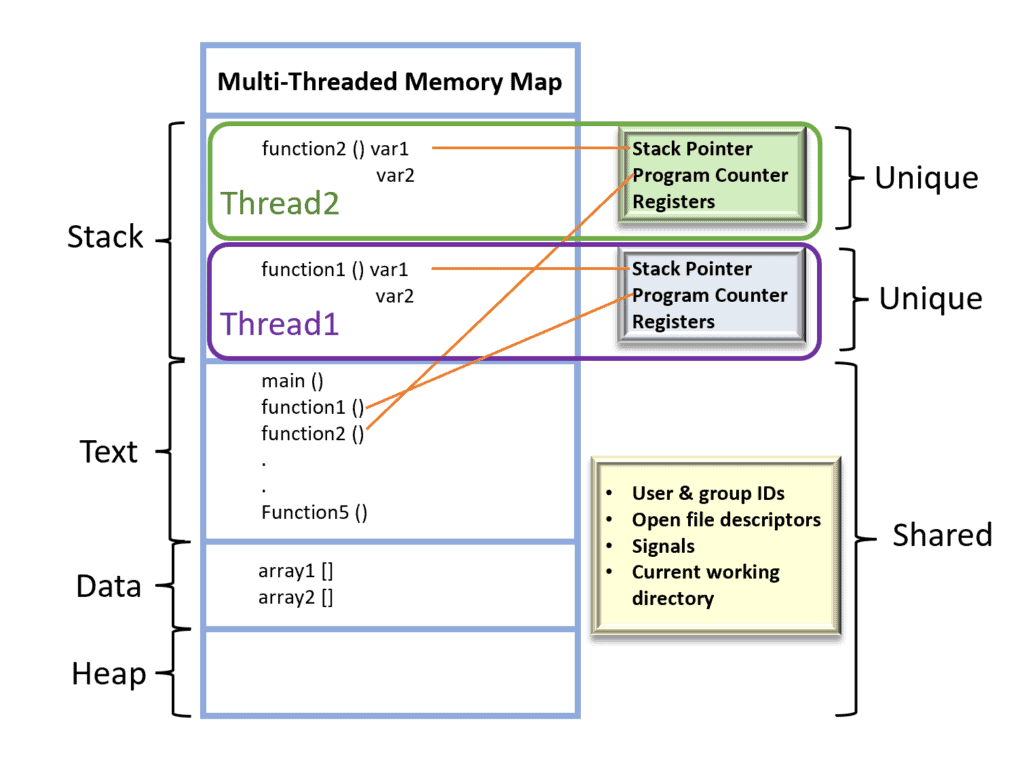

The operating system is responsible for scheduling all threads in a multi-threaded process. It keeps track of processes and their per-process information:

Some of these resources are private and visible only in the thread owning them, whereas others are shared across all the threads within the same process:

3.1. Stack

Each thread gets its own stack, which is allocated within the address space of the process it belongs to.

As shown in the figure above, the allocated stack area for a thread contains at least the following:

- the called function’s arguments

- local variables

- a return address that indicates where execution should resume once the function returns

In addition, when a thread completes its task, its stack area is reclaimed and can be reused by the process. So, although each thread has its own stack, all the thread-specific stacks reside in the same address space, which the process owns.
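The practical consequence is that local variables are private to the thread running the function. The Python sketch below illustrates this under stated assumptions: the names `sum_to` and `demo_private_locals` are hypothetical, and CPython keeps its frames in interpreter-managed memory, so the example shows the logical privacy of locals rather than the physical stack layout. Three threads run the same function at the same time, and each gets its own `total`:

```python
import threading


def sum_to(n: int, results: dict) -> None:
    # "total" and "i" are local variables: every thread that calls this
    # function works on its own copies of them.
    total = 0
    for i in range(1, n + 1):
        total += i
    # Each thread stores its result under a different key.
    results[n] = total


def demo_private_locals() -> dict:
    results = {}
    threads = [
        threading.Thread(target=sum_to, args=(n, results))
        for n in (10, 100, 1000)
    ]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return results


if __name__ == "__main__":
    print(demo_private_locals())
```

If `total` were shared instead of local, the three running sums would corrupt one another.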

3.2. Shared Resources

Threads also share some resources:

- Text area – contains the machine code that is executed.

- Data area – holds initialized and uninitialized global and static variables.

- Heap – reserved for dynamically allocated variables; it’s typically located at the opposite end of the process’s virtual address space from the stack.

Sharing these resources is what makes a thread lightweight: most of the resource allocation was already done when the owning process was created, so creating a thread adds little overhead.
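As a small illustration of sharing, the Python sketch below (the names `shared_log`, `record`, and `demo_shared_object` are hypothetical) lets several threads append to one module-level list. Each thread also records the identity of the list it sees, which shows that they all work on the same object rather than on per-thread copies:

```python
import threading

shared_log = []                # one object, reachable from every thread
log_lock = threading.Lock()    # keeps concurrent appends orderly


def record(name: str) -> None:
    with log_lock:
        # id() identifies the list object this thread is looking at.
        shared_log.append((name, id(shared_log)))


def demo_shared_object() -> list:
    shared_log.clear()
    threads = [
        threading.Thread(target=record, args=(f"t{i}",)) for i in range(3)
    ]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return list(shared_log)


if __name__ == "__main__":
    for name, object_id in demo_shared_object():
        print(name, object_id)
```

Every entry carries the same identity value, and the main thread can read what the workers wrote without any copying or message passing.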

4. Conclusion

In this article, we talked about how threads share resources within the same process. The code, data, and heap areas are shared, while each thread gets its own stack within the address space of the process.