How Many Threads Is Too Many?

Last updated: March 18, 2024

1. Introduction

In this tutorial, we’ll discuss a suitable thread count threshold for servers and how to determine it. We’ll review the relevant concepts first, then work toward a reasonable technique for estimating the ideal cut-off, that is, the answer to the question “How many threads is too many?”.

2. Processes and Threads

A process in computing is an instance of a computer program that is running on one or more threads. A process could consist of several concurrently running threads of execution, depending on the operating system.

There are numerous different process models, some of which are lightweight, but almost all processes have their roots in an operating system process, which consists of the program code, allocated system resources, physical and logical access permissions, and data structures to start, control, and coordinate execution activity.

A computer program is a passive collection of instructions, normally saved in a file on disk, whereas a process is the execution of those instructions after they have been loaded from disk into memory. The same program may be associated with several processes; for instance, opening multiple instances of the same software frequently results in multiple processes.

Threads differ from conventional multitasking operating system processes in several ways. A thread exists as a subset of a process, whereas processes are typically independent. Processes carry considerably more state information than threads. Multiple threads within a process share its memory, other resources, and process state.

Processes have independent address spaces, whereas threads share their address space. Processes can communicate only through system-provided inter-process communication mechanisms. Typically, context switching between threads of the same process is faster than context switching between processes.

2.1. Advantages and Disadvantages of Threads Versus Processes

A program can use fewer resources with threads than it would with several processes. Processes need a shared memory or message-passing mechanism for inter-process communication (IPC), whereas threads can communicate through the data, code, and files they already share.

An illegal operation carried out by one thread, such as an invalid memory access, can crash the entire process because threads share the same address space. Therefore, one misbehaving thread can stop all the other threads in the application from processing their data.

A group of threads running within the same process is referred to as a thread group. They can access the same global variables, collection of file descriptors, and heap memory because, as we just mentioned, they share the same memory.
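As a minimal sketch of this sharing, the Python snippet below starts four threads that all append to the same list. The names `results` and `worker` are illustrative only. The lock is there because shared state needs coordination once several threads touch it.

```python
import threading

results = []                 # one heap object, visible to every thread in the process
lock = threading.Lock()      # guards concurrent access to the shared list

def worker(thread_id):
    # Each thread writes into the same list; no copying or IPC is involved.
    with lock:
        results.append(thread_id)

threads = [threading.Thread(target=worker, args=(i,)) for i in range(4)]
for t in threads:
    t.start()
for t in threads:
    t.join()

print(sorted(results))  # [0, 1, 2, 3]
```

Separate processes would need an explicit channel such as a pipe or shared memory segment to achieve the same result.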

These threads all run concurrently, either through time slicing or, if the machine has more than one processor, through true parallel processing. Performing numerous tasks simultaneously is one of the benefits of employing a thread group rather than a process group. This makes it possible to manage events as they happen.

Faster context switching is another benefit of employing a thread group rather than a process group. It is substantially quicker to switch contexts between threads than between processes.

2.2. The Case of Creating Too Many Threads

Our job will take longer to finish if we create thousands of threads, since we’ll spend much of the time switching between their contexts. Rather than creating new threads manually, we should use a thread pool, which caps the number of threads and reuses them across tasks.
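A thread pool along these lines could look like the following sketch. It uses Python’s standard `concurrent.futures.ThreadPoolExecutor`. The `handle` function and the pool size of 8 are placeholders chosen for illustration, not recommendations.

```python
from concurrent.futures import ThreadPoolExecutor

def handle(task_id):
    # Placeholder for real work (hypothetical task).
    return task_id * task_id

# 1000 tasks are queued, but at most 8 worker threads are created.
with ThreadPoolExecutor(max_workers=8) as pool:
    squares = list(pool.map(handle, range(1000)))

print(len(squares), squares[:5])  # 1000 [0, 1, 4, 9, 16]
```

The tasks wait in the pool’s queue instead of each owning a thread, so the scheduler only ever has a handful of threads to switch between.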

The prevailing consensus is that having more physical cores is preferable to having more threads. For example, a CPU with 8 cores and 8 threads would perform better than one with 2 cores and 8 threads. However, the more threads our CPU can manage, the better it will perform while multitasking. Additionally, particularly demanding applications can use more than one core simultaneously.

The core count refers to the actual number of cores on the CPU die, whereas the CPU’s thread count refers to the number of hardware threads it can execute concurrently. On many CPUs, this is the same as the core count and requires no additional hardware. However, CPUs that support simultaneous multithreading expose more threads than cores.

2.3. Too Many Threads Hurt Performance

Too many threads might have two negative effects. First, when a fixed quantity of work is divided among too many threads, each thread receives so little work that the overhead associated with initiating and stopping threads overwhelms the productive work. Second, running an excessive number of threads results in overhead due to the way they compete for limited hardware resources.

It’s critical to distinguish between hardware and software threads. Programs create threads, which are referred to as “software threads.” Hardware threads are actual physical resources. A chip may have one or more hardware threads per core.

Limiting the number of runnable threads to the number of hardware threads is a sensible approach. If cache contention is an issue, we might also want to limit it to the number of outer-level caches. We should avoid hard-coding a particular number of threads in our software because target platforms differ in the number of hardware threads. Instead, we should let the amount of threading in our software adapt to the hardware.
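One way to avoid a hard-coded number is to ask the runtime how many hardware threads the machine exposes. The sketch below uses Python’s `os.cpu_count()`, which reports logical CPUs. The helper names `hardware_threads` and `make_cpu_pool` are made up for this example.

```python
import os
from concurrent.futures import ThreadPoolExecutor

def hardware_threads():
    # os.cpu_count() reports logical CPUs (hardware threads); it may return None.
    return os.cpu_count() or 1

def make_cpu_pool():
    # Size the pool from the machine instead of hard-coding a number.
    return ThreadPoolExecutor(max_workers=hardware_threads())

n = hardware_threads()
print(f"hardware threads: {n}")
```

Note that this value describes the whole machine. In a container or under CPU affinity restrictions, the process may be allowed to use fewer CPUs than reported, so the number is best treated as an upper bound.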

Although there isn’t much memory overhead for each thread, there is overhead for the thread scheduler to manage them. If we only have 4 cores, only 4 threads can execute instructions simultaneously. Even if some of our threads are blocking on IO, we still have a large number of idle threads that require management overhead.

3. The Optimum Cut-off for the Number of Threads

To make use of concurrency, we need to be able to divide problems into smaller jobs that can be completed concurrently. Making a problem concurrent may not yield much performance improvement if it contains a sizable portion that cannot be partitioned.
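This limit is commonly formalized as Amdahl’s law. As a rough sketch, the function below computes the theoretical speedup for a given parallelizable fraction and worker count. The 75% figure is an arbitrary example value, and real programs also pay scheduling and synchronization costs that the formula ignores.

```python
def amdahl_speedup(parallel_fraction, workers):
    # Speedup = 1 / ((1 - p) + p / n), where p is the fraction of the
    # work that can be split and n is the number of workers.
    serial = 1.0 - parallel_fraction
    return 1.0 / (serial + parallel_fraction / workers)

for n in (2, 4, 8, 64):
    print(n, round(amdahl_speedup(0.75, n), 2))
```

With 75% of the work parallelizable, the speedup can never exceed 4x no matter how many threads we add, because the remaining 25% still runs serially.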

The ideal thread count also depends on latency. If our IO operations have a higher latency, we might require more threads to reach the sweet spot, because more threads will be stuck waiting for responses more often. In contrast, if the latency is lower, we might need fewer threads to achieve optimal performance, because each thread spends less time waiting and is released more quickly.

Code that is CPU-bound is intrinsically constrained by the speed at which the CPU can carry out instructions. Thus, when our code runs concurrently over 4 threads on a 4-core CPU, it already uses the CPU’s full processing capacity, and adding more threads only adds overhead.
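One commonly cited rule of thumb captures this relationship: threads ≈ cores × (1 + wait time / compute time). The sketch below applies it. The function name and the millisecond figures are assumptions for illustration, and the result is a starting estimate to validate by measurement, not a definitive answer.

```python
import math

def estimate_pool_size(cores, wait_ms, compute_ms):
    # More waiting per unit of computation means more threads are needed
    # to keep every core busy.
    return math.ceil(cores * (1 + wait_ms / compute_ms))

# High-latency IO: each task waits 90 ms for every 10 ms of CPU work.
print(estimate_pool_size(4, wait_ms=90, compute_ms=10))  # 40
# Low-latency IO: waiting and computing take equally long.
print(estimate_pool_size(4, wait_ms=10, compute_ms=10))  # 8
```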

In reality, our application is probably not this straightforward. It might be CPU-bound in certain areas and IO-bound in others. It might not be constrained by IO or CPU at all; it could be memory-bound or simply not make use of all available resources.

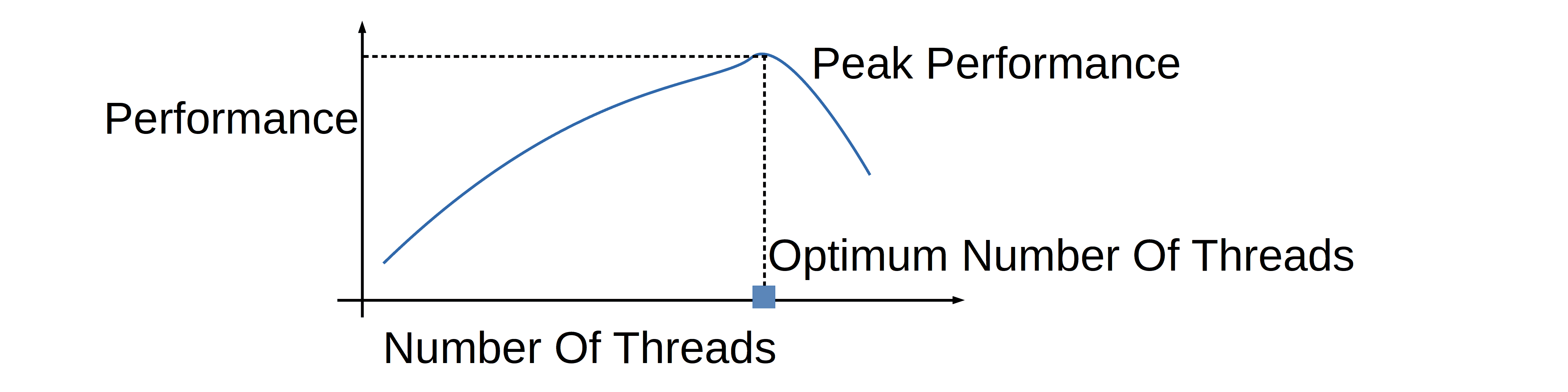

What all of this means is that there is an overhead associated with managing each thread we create. More threads don’t always mean faster execution. On the other hand, increasing the number of threads in these two samples increased performance anywhere between 100% and 600%. It is worth the effort to find that sweet spot.

Measuring is the only way to get a definite answer. We should run our code with various thread counts, evaluate the outcomes, and then make a decision. Without measuring, we might never arrive at the “correct” solution. Finding the sweet spot is crucial. After doing this a few times, we can probably start to estimate the number of threads to employ, but the sweet spot will vary depending on the IO workload or the hardware.
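A measurement loop of this kind can be sketched as follows. The workload here is simulated with a 10 ms sleep standing in for an IO call, and the task count and list of thread counts are arbitrary; in practice we would substitute our real workload and candidate sizes.

```python
import time
from concurrent.futures import ThreadPoolExecutor

def fake_io_task(_):
    # Stand-in for a network or disk call (hypothetical 10 ms latency).
    time.sleep(0.01)

def measure(thread_count, tasks=40):
    # Returns the wall-clock seconds needed to finish all tasks.
    start = time.perf_counter()
    with ThreadPoolExecutor(max_workers=thread_count) as pool:
        list(pool.map(fake_io_task, range(tasks)))
    return time.perf_counter() - start

timings = {n: measure(n) for n in (1, 2, 4, 8, 16)}
for n, seconds in timings.items():
    print(f"{n:>2} threads: {seconds:.3f} s")

best = min(timings, key=timings.get)
print("fastest thread count:", best)
```

Plotting `timings` for a realistic workload typically shows the elapsed time falling at first and then flattening or rising again, and the point where it stops improving is the sweet spot for that workload and machine.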

Under load, we should pay close attention to the maximum number of threads that run concurrently. We can then add 20% to that first estimate as a safety margin. Once we hit a bottleneck, whether it is the CPU, database, disk, or something else, adding more threads won’t improve speed. Until then, we can keep adding threads.
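As a rough sketch of this procedure, the snippet below counts how many tasks are active at the same moment, records the peak, and then applies the 20% margin. The `PeakTracker` class, the simulated `request` function, and the pool size of 10 are all hypothetical; a real server would take the peak from its own metrics under production-like load.

```python
import math
import threading
import time
from concurrent.futures import ThreadPoolExecutor

class PeakTracker:
    """Records the highest number of tasks running at the same moment."""

    def __init__(self):
        self._lock = threading.Lock()
        self._active = 0
        self.peak = 0

    def __enter__(self):
        with self._lock:
            self._active += 1
            self.peak = max(self.peak, self._active)

    def __exit__(self, *exc):
        with self._lock:
            self._active -= 1

tracker = PeakTracker()

def request(_):
    with tracker:
        time.sleep(0.01)   # hypothetical request handling

with ThreadPoolExecutor(max_workers=10) as pool:
    list(pool.map(request, range(50)))

# Add the 20% safety margin (6/5 = 1.2), rounding up to a whole thread.
pool_size = math.ceil(tracker.peak * 6 / 5)
print("peak concurrent:", tracker.peak, "suggested pool size:", pool_size)
```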

4. Conclusion

In this article, we talked about a good thread count threshold that servers should use. We can determine this threshold by measuring the performance of the process across several thread counts. To estimate the ideal cut-off, or the answer to the question “How many threads is too many?”, we should measure and plot the performance and find the number of threads that gives the peak performance.