1. Introduction

In this tutorial, we’ll explain Quality of Service (QoS) in networks and show how to quantify it. Then, we’ll discuss tools and mechanisms to control and improve QoS.

2. What Is the Quality of Service?

Quality of Service (QoS) measures the capability of a network to provide high-quality services to an end-user. More specifically, it designates the mechanisms and technologies for managing data traffic and controlling network resources.

The primary goal of QoS is to prioritize critical applications and specific types of data. For example, real-time transmissions such as video telephony require a high QoS.

QoS technologies seek to increase throughput, lower latency, and reduce packet loss. We can measure QoS in several ways.

3. How to Measure QoS?

A network flow is a sequence of packets going from one device to another. To quantify the QoS in a network, we need to measure the flow. There are several metrics for that.

3.1. Reliability

Reliability is the degree to which a network guarantees delivering data packets, regardless of a node/link failure or any other network disruption.

We measure reliability with the error and loss rates.

There are several ways to define the error rate. The bit error rate (BER) is the ratio of the number of erroneous bits to the total number of received bits. An alternative is the packet error rate (PER), which is the number of corrupted packets divided by the total number of received packets.

When the routing process is interrupted and data packets fail to reach their intended destination, we’re talking about packet loss. The packet loss rate is the proportion of lost packets among all the sent ones.
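As a minimal sketch, the three ratios defined above can be computed directly from counters. The function names and the sample counts below are hypothetical and only serve as an illustration:

```python
def bit_error_rate(erroneous_bits: int, received_bits: int) -> float:
    """BER: erroneous bits divided by all received bits."""
    if received_bits <= 0:
        raise ValueError("received_bits must be positive")
    return erroneous_bits / received_bits


def packet_error_rate(corrupted_packets: int, received_packets: int) -> float:
    """PER: corrupted packets divided by all received packets."""
    if received_packets <= 0:
        raise ValueError("received_packets must be positive")
    return corrupted_packets / received_packets


def packet_loss_rate(sent_packets: int, received_packets: int) -> float:
    """Loss rate: lost packets divided by all sent packets."""
    if sent_packets <= 0:
        raise ValueError("sent_packets must be positive")
    lost = sent_packets - received_packets
    return lost / sent_packets


# Hypothetical measurement: 1000 packets sent, 980 received, 2 of them corrupted.
print(packet_loss_rate(1000, 980))   # 0.02
print(packet_error_rate(2, 980))
print(bit_error_rate(3, 1_000_000))  # 3e-06
```

Note that the loss rate is relative to the packets sent, while the two error rates are relative to what was actually received.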

A high error or loss rate can originate from several causes such as network congestion, problems with hardware, software bugs, and security threats, especially DoS attacks.

3.2. Delay or Latency

This metric shows how much time a data packet needs to travel from its source to its destination.

Applications such as file transfer and email tolerate high latency. However, video gaming and conferencing require low latency. Otherwise, their quality would be so poor that users would find them unusable.

3.3. Jitter

Jitter occurs when packets aren’t delivered at regular time intervals. It is the variation in latency across the packets that deliver a single service. This parameter is crucial in real-time applications, as it can severely degrade the reception of a video or a phone call. Its typical causes are path changes and traffic congestion.

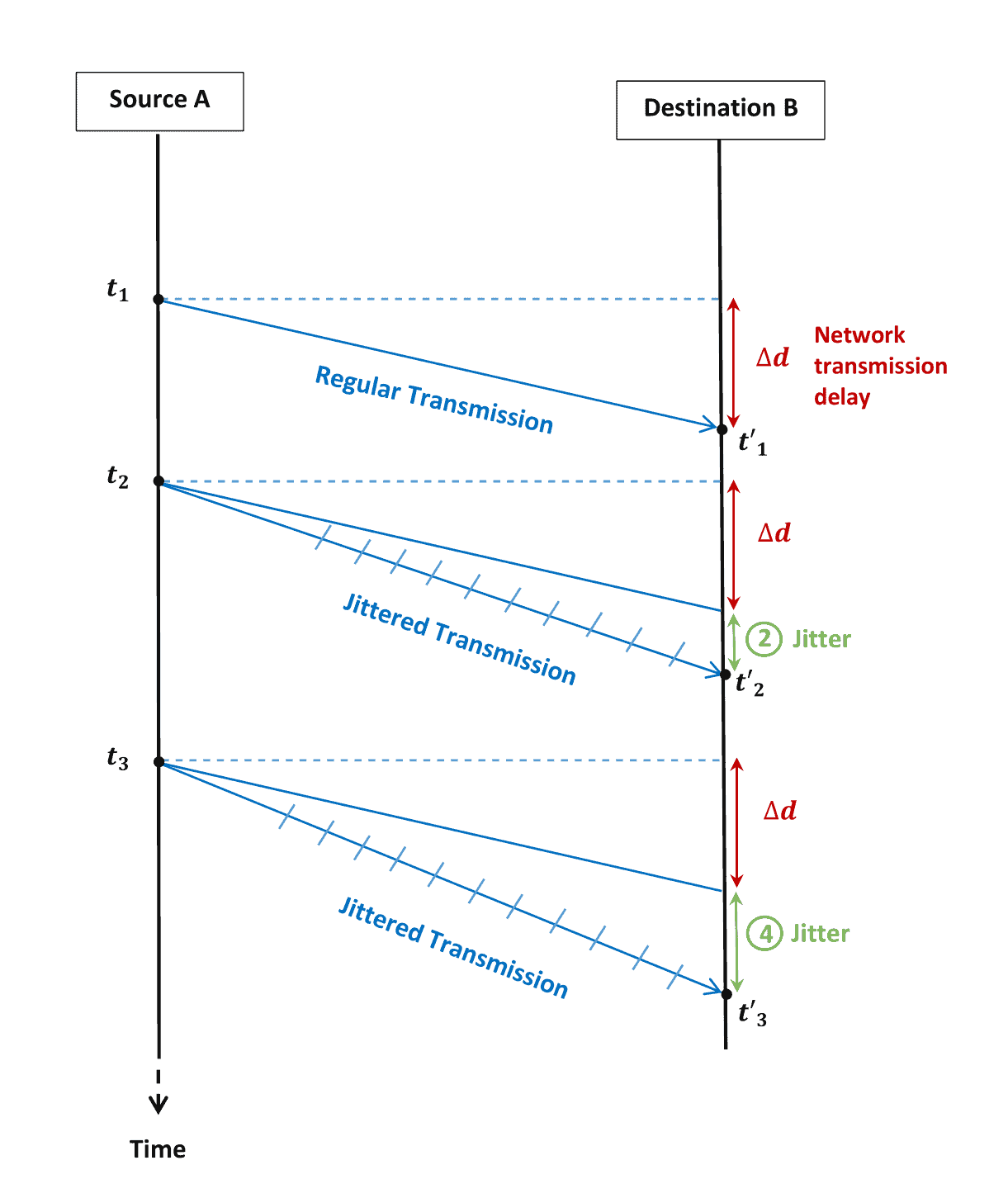

Let’s say we have three packets sent at times $t_1$, $t_2$, and $t_3$ from a source $S$ to a receiver $R$. If we assume that the network transmission delay from $S$ to $R$ is $D$, then typically, these packets will reach the destination at $t_1 + D$, $t_2 + D$, and $t_3 + D$, respectively. However, we assume that only the first packet is received at its regular time, $t_1 + D$. The second and third packets are received at $t_2 + D + 2$ and $t_3 + D + 4$ instead.

In this case, these two packets will have jitters of 2 and 4, respectively, distorting the quality of the application delivered to the end user.
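The calculation in this example can be sketched in a few lines. The concrete send times and the delay of 5 time units are assumptions chosen for illustration; only the resulting jitters of 0, 2, and 4 come from the scenario above:

```python
def expected_arrivals(send_times, delay):
    """Arrival times if every packet experienced the same network delay."""
    return [t + delay for t in send_times]


def jitter_per_packet(expected, actual):
    """Deviation of each actual arrival time from its expected arrival time."""
    return [a - e for e, a in zip(expected, actual)]


# Hypothetical values: three packets sent one time unit apart, delay of 5 units.
send_times = [0, 1, 2]
delay = 5

expected = expected_arrivals(send_times, delay)  # [5, 6, 7]
actual = [5, 8, 11]                              # second and third packets are late

print(jitter_per_packet(expected, actual))       # [0, 2, 4]
```

Here, jitter is taken as the deviation from the expected arrival time, which matches the example. Other definitions, such as the difference between consecutive inter-arrival gaps, are also in use.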

3.4. Bandwidth

The bandwidth refers to the transmission capacity of the network, i.e., how much data we can transfer in a given amount of time.

We estimate it as the maximum number of bits we can transfer per second. When the offered load (the amount of data the network is asked to carry) exceeds the bandwidth, the traffic will suffer from congestion, and the QoS will degrade.
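To make the relation between load and bandwidth concrete, here is a small sketch that compares the total load of a few flows with the capacity of a link. The link capacity and the flow rates are hypothetical numbers:

```python
def utilization(offered_load_bps: float, bandwidth_bps: float) -> float:
    """Ratio of the load the link is asked to carry to its capacity."""
    if bandwidth_bps <= 0:
        raise ValueError("bandwidth_bps must be positive")
    return offered_load_bps / bandwidth_bps


def is_congested(offered_load_bps: float, bandwidth_bps: float) -> bool:
    """The link is congested when the load exceeds its capacity."""
    return offered_load_bps > bandwidth_bps


# Hypothetical link of 100 Mbit/s shared by three flows.
link_bandwidth_bps = 100_000_000
flows_bps = [40_000_000, 35_000_000, 30_000_000]
offered = sum(flows_bps)  # 105 Mbit/s in total

print(utilization(offered, link_bandwidth_bps))   # 1.05
print(is_congested(offered, link_bandwidth_bps))  # True
```

A utilization above 1 means that some packets have to wait in buffers or get dropped, which is where the techniques in the next section come in.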

4. Techniques to Improve the QoS

We can apply several mechanisms to improve the QoS in a network. They rely mainly on treating traffic differently depending on how sensitive it is to delay and loss.

4.1. Classification and Marking

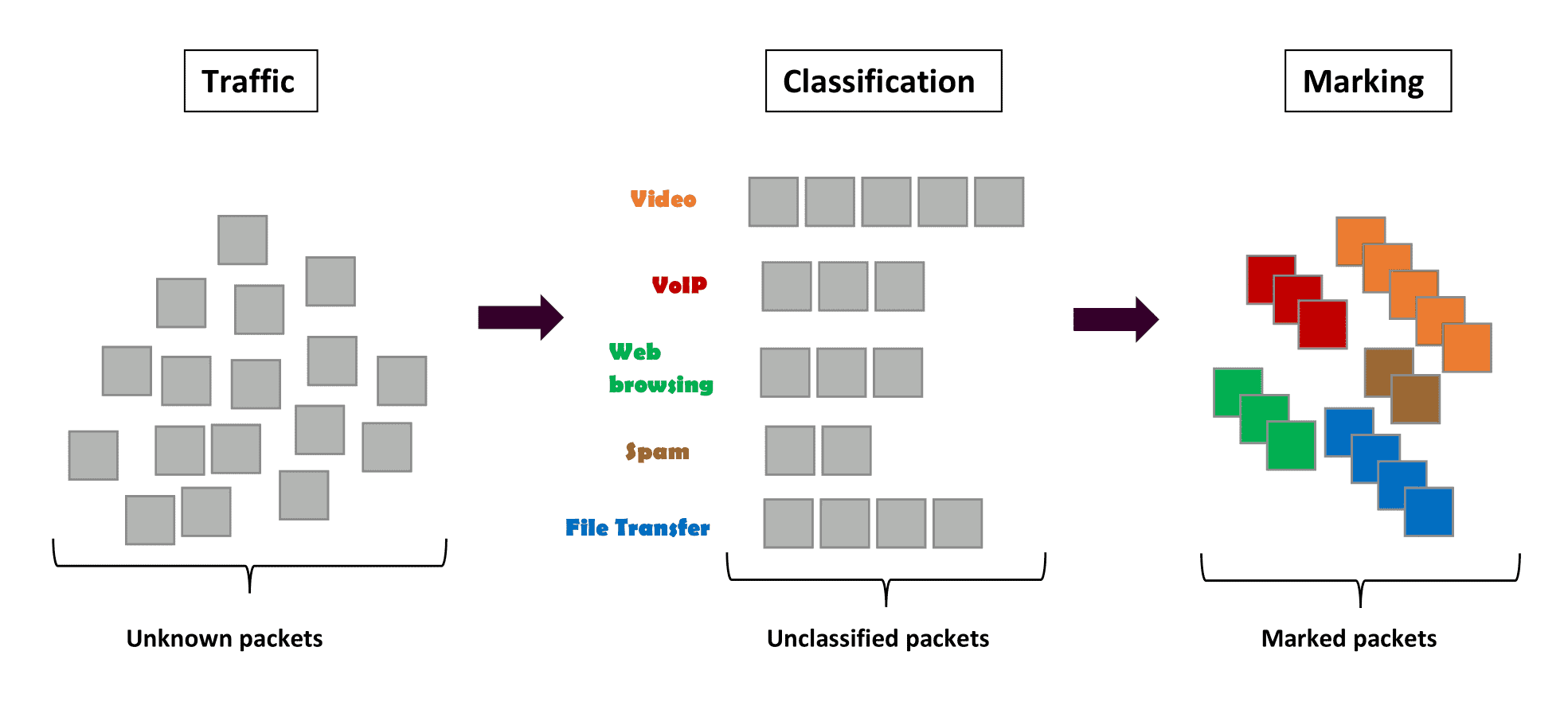

Here, we split the network traffic into different classes. Grouping distinct packets having the same class (video, audio, web browsing, etc.) helps us know what types of data streams flow across the network and how to assign priorities.

Usually, we distinguish three traffic classes by their level of priority: sensitive traffic (such as voice over IP (VoIP) and video conferencing), best-effort traffic such as email, and undesirable traffic such as spam.

We label each packet with the appropriate class by changing a field in the packet header. This process is called marking, and it ensures that the network recognizes and prioritizes the sensitive packets.

Classification is the sorting of packets into classes before labeling. Both are implemented within a router or a switch.
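The two steps can be sketched as follows. This is a simplified illustration, not how a real router is configured: the `Packet` type and the application-to-class table are hypothetical, and we assume that the marked header field is the DSCP field of the IP header, using the standard code points 46 (Expedited Forwarding), 0 (default), and 8 (CS1) as one possible mapping:

```python
from dataclasses import dataclass

# Assumed mapping from traffic class to a DSCP value (illustrative choice).
DSCP_BY_CLASS = {
    "sensitive": 46,    # Expedited Forwarding
    "best_effort": 0,   # default forwarding
    "undesirable": 8,   # CS1, often used for low-priority traffic
}

# Hypothetical classification table; real devices inspect ports, addresses, etc.
CLASS_BY_APP = {
    "voip": "sensitive",
    "video_conference": "sensitive",
    "email": "best_effort",
    "web": "best_effort",
    "spam": "undesirable",
}


@dataclass
class Packet:
    app: str
    payload: bytes
    dscp: int = 0  # header field that marking overwrites


def classify(packet: Packet) -> str:
    """Sort a packet into a traffic class; unknown traffic is best effort."""
    return CLASS_BY_APP.get(packet.app, "best_effort")


def mark(packet: Packet) -> Packet:
    """Write the code point of the packet's class into its header field."""
    packet.dscp = DSCP_BY_CLASS[classify(packet)]
    return packet


for p in [Packet("voip", b"..."), Packet("email", b"..."), Packet("spam", b"...")]:
    print(p.app, classify(p), mark(p).dscp)
```

Downstream devices then only need to read the marked field instead of repeating the classification.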

4.2. Queuing and Scheduling

When a router (or a switch) receives packets from different flows, it stores them in different buffers we call queues. We differentiate the traffic in queues by order of priority. Packets belonging to the same class form a single queue. Based on the result of classification, each traffic class receives a specific type of treatment.

There are three common techniques for scheduling queues: First-In-First-Out (FIFO) queuing, priority queuing, and weighted fair queuing, where we weigh the queues using their priorities.
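To compare the three disciplines, here is a minimal sketch that only computes the order in which packets leave. The packet names, priorities, sizes, and weights are hypothetical. The weighted fair queuing part is a simplification that assumes all packets are already queued at time 0, so each packet's finish tag is the previous tag of its flow plus its size divided by the flow's weight:

```python
import heapq
from collections import deque
from itertools import count


def fifo_order(packets):
    """Serve packets strictly in arrival order."""
    queue = deque(packets)
    served = []
    while queue:
        served.append(queue.popleft())
    return served


def priority_order(packets):
    """packets: (name, priority) pairs; a lower number means higher priority.
    Packets with equal priority keep their arrival order."""
    heap, seq = [], count()
    for name, priority in packets:
        heapq.heappush(heap, (priority, next(seq), name))
    return [heapq.heappop(heap)[2] for _ in range(len(heap))]


def wfq_order(queues, weights):
    """queues: flow -> list of (name, size), all backlogged at time 0.
    Packets are served by increasing finish tag."""
    tagged, seq = [], count()
    for flow, packets in queues.items():
        finish = 0.0
        for name, size in packets:
            finish += size / weights[flow]
            tagged.append((finish, next(seq), name))
    return [name for _, _, name in sorted(tagged)]


arrivals = [("email-1", 2), ("voice-1", 0), ("web-1", 1), ("voice-2", 0)]
print(fifo_order([name for name, _ in arrivals]))
# ['email-1', 'voice-1', 'web-1', 'voice-2']
print(priority_order(arrivals))
# ['voice-1', 'voice-2', 'web-1', 'email-1']

queues = {
    "voice": [("v1", 100), ("v2", 100), ("v3", 100)],
    "email": [("e1", 100), ("e2", 100)],
}
print(wfq_order(queues, {"voice": 2, "email": 1}))
# ['v1', 'v2', 'e1', 'v3', 'e2']
```

Strict priority queuing can starve low-priority queues while high-priority traffic keeps arriving, whereas the weighted variant still gives every queue a share of the link proportional to its weight.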

4.3. Policing and Shaping

When the traffic load exceeds the link capacity in the network, the QoS deteriorates. Policing and shaping are bandwidth-management techniques that control the amount and rate of traffic.

Essentially, traffic policing imposes a particular bandwidth restriction and discards the packets that don’t adhere to it. This is a part of congestion avoidance mechanisms.

Meanwhile, traffic shaping is a softer technique than traffic policing. Instead of immediately dropping the excess packets, it stores them in queues and sends them later. Two common methods to shape the traffic are the leaky bucket and the token bucket algorithms.
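The difference between the two techniques can be sketched with a token bucket. This is a simplified simulation under stated assumptions: time is passed in explicitly, tokens are counted in bytes, no packet is larger than the bucket, and the rate, bucket size, and arrival pattern are hypothetical:

```python
class TokenBucket:
    """Tokens accumulate at `rate` per second, up to `capacity`."""

    def __init__(self, rate: float, capacity: float):
        self.rate = rate
        self.capacity = capacity
        self.tokens = capacity  # the bucket starts full
        self.last = 0.0         # time of the last refill

    def refill(self, now: float) -> None:
        elapsed = now - self.last
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.last = now


def police(arrivals, rate, capacity):
    """arrivals: (time, size) pairs sorted by time. Excess packets are dropped."""
    bucket = TokenBucket(rate, capacity)
    decisions = []
    for now, size in arrivals:
        bucket.refill(now)
        if bucket.tokens >= size:
            bucket.tokens -= size
            decisions.append("forward")
        else:
            decisions.append("drop")
    return decisions


def shape(arrivals, rate, capacity):
    """Same input, but excess packets wait in a queue until tokens are available.
    Returns the departure time of each packet."""
    bucket = TokenBucket(rate, capacity)
    departures = []
    for arrival, size in arrivals:
        if size > capacity:
            raise ValueError("packet larger than the bucket can never be sent")
        # A packet leaves no earlier than its arrival or the previous departure.
        now = max(arrival, bucket.last)
        bucket.refill(now)
        if bucket.tokens < size:
            now += (size - bucket.tokens) / rate  # wait for the missing tokens
            bucket.refill(now)
        bucket.tokens = max(0.0, bucket.tokens - size)
        departures.append(now)
    return departures


# Hypothetical burst: three 100-byte packets at t=0, one more at t=1,
# with a rate of 100 bytes/s and a bucket of 200 bytes.
arrivals = [(0.0, 100), (0.0, 100), (0.0, 100), (1.0, 100)]
print(police(arrivals, rate=100, capacity=200))
# ['forward', 'forward', 'drop', 'forward']
print(shape(arrivals, rate=100, capacity=200))
# [0.0, 0.0, 1.0, 2.0]
```

With the same burst, the policer loses the third packet, while the shaper delivers all four at the cost of extra delay for the later ones.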

5. Conclusion

In this article, we explained QoS in networking and introduced different metrics and tools used to improve network performance.

Packet loss and delay are often the two most significant metrics to consider when evaluating QoS and user experience.