Last updated: May 2, 2023

In this tutorial, we’ll introduce end-to-end deep learning. First, we’ll define the term and describe the intuition behind it. Then, we’ll present some common applications and challenges of end-to-end deep learning.

We define end-to-end deep learning as a machine learning technique in which we train a single neural network on a complex task, feeding it the raw input data directly without any manual feature extraction.

Thanks to the creation of large-scale datasets, end-to-end deep learning has revolutionized a variety of domains, such as speech recognition, machine translation, and face detection.

First, let’s focus on the intuition behind end-to-end deep learning.

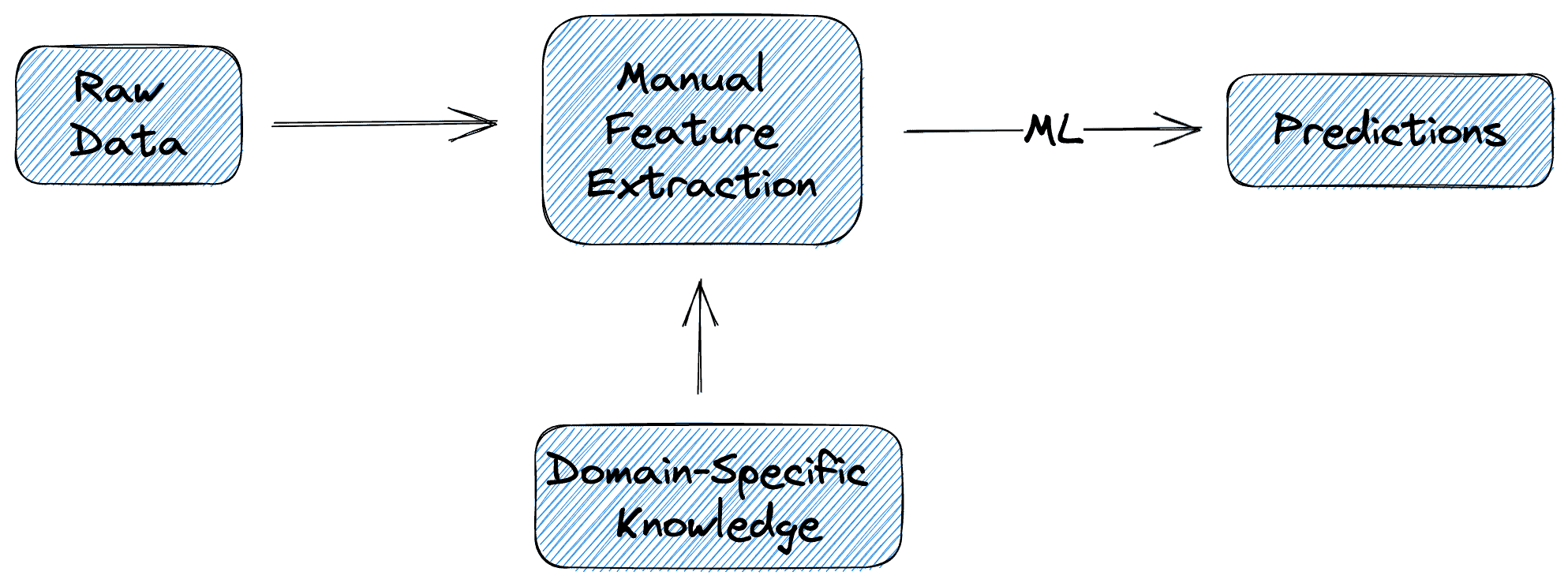

In traditional machine learning, the training pipeline consists of at least 2 stages:

Despite its success, the above procedure is very time-consuming and requires a lot of domain-specific knowledge.

In the diagram below, we can see what the pipeline of a traditional machine-learning model approach looks like:

The recent growth in the size of available datasets enabled the rise of end-to-end deep learning, which aims to learn the input-output mapping directly from the data without manually extracting handcrafted features. We take the input data and pass them through a (usually large) neural network. The network then processes the input data and automatically extracts relevant features, which it uses to generate predictions.

The whole procedure is performed without manual feature engineering, which reduces the time and effort needed. The success of this technique lies in the enormous amount of data that we use during training and in the ability of neural networks to learn high-level features from that data.
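To make the contrast concrete, here is a minimal sketch on a toy task, using only the Python standard library. Everything in it is an assumption made for illustration: the task (deciding whether a 4-sample signal ends higher than it starts), the names `extract_feature`, `traditional_predict`, and `TinyNet`, and the network size and learning rate. The traditional path relies on a feature written by hand, while the end-to-end path trains one small network on the raw values.

```python
import math
import random

def make_example(rng):
    # Raw input: a 4-sample "signal". Label: 1 if it ends higher than it starts.
    x = [rng.uniform(-1, 1) for _ in range(4)]
    return x, (1.0 if x[-1] > x[0] else 0.0)

# Traditional pipeline: a handcrafted feature, then a simple decision rule.
def extract_feature(x):
    return x[-1] - x[0]  # domain knowledge, written by hand

def traditional_predict(x):
    return 1.0 if extract_feature(x) > 0 else 0.0

# End-to-end: one small network maps the raw values straight to a probability.
class TinyNet:
    def __init__(self, n_in, n_hidden, rng):
        self.W1 = [[rng.uniform(-0.5, 0.5) for _ in range(n_in)] for _ in range(n_hidden)]
        self.b1 = [0.0] * n_hidden
        self.W2 = [rng.uniform(-0.5, 0.5) for _ in range(n_hidden)]
        self.b2 = 0.0

    def forward(self, x):
        h = [math.tanh(sum(w * xi for w, xi in zip(row, x)) + b)
             for row, b in zip(self.W1, self.b1)]
        z = sum(w * hi for w, hi in zip(self.W2, h)) + self.b2
        return h, 1.0 / (1.0 + math.exp(-z))

    def sgd_step(self, x, y, lr):
        h, p = self.forward(x)
        dz = p - y  # gradient of the cross-entropy loss w.r.t. z
        for j, hj in enumerate(h):
            dh = dz * self.W2[j] * (1.0 - hj * hj)  # backprop through tanh
            self.W2[j] -= lr * dz * hj
            self.b1[j] -= lr * dh
            for i, xi in enumerate(x):
                self.W1[j][i] -= lr * dh * xi
        self.b2 -= lr * dz

def mean_loss(net, data):
    total = 0.0
    for x, y in data:
        p = min(max(net.forward(x)[1], 1e-9), 1.0 - 1e-9)
        total -= y * math.log(p) + (1.0 - y) * math.log(1.0 - p)
    return total / len(data)

rng = random.Random(0)
data = [make_example(rng) for _ in range(200)]
net = TinyNet(n_in=4, n_hidden=4, rng=rng)

loss_before = mean_loss(net, data)
for _ in range(50):
    for x, y in data:
        net.sgd_step(x, y, lr=0.1)
loss_after = mean_loss(net, data)

accuracy = sum((net.forward(x)[1] > 0.5) == (y == 1.0) for x, y in data) / len(data)
print(f"loss: {loss_before:.3f} -> {loss_after:.3f}, accuracy: {accuracy:.2f}")
```

In this toy setting the handcrafted rule is trivially correct because we wrote the feature knowing how the labels were made. The network is never told about that feature and has to recover an equivalent one from the raw values, which is the essence of the end-to-end approach.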

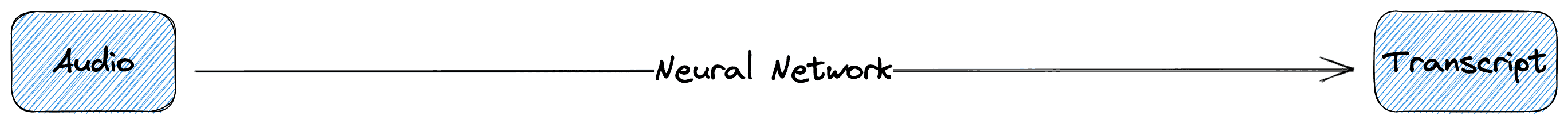

In the diagram below, we can see what the pipeline of an end-to-end deep learning approach looks like:

End-to-end deep learning has been used very successfully in many domains, where it has surpassed many of the traditional handcrafted methods. Let’s describe some of them.

In speech recognition, end-to-end deep learning has achieved strong performance thanks to the large-scale speech datasets that were recently developed. These achievements have significant implications for the healthcare, finance, and telecommunications industries.

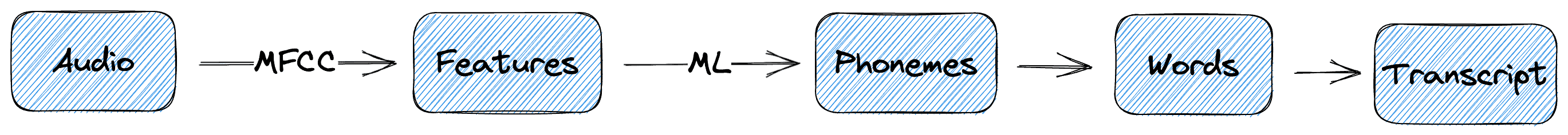

In traditional machine learning, converting an audio clip into a transcript consists of many intermediate steps. First, we usually extract some MFCC features from the audio clip and classify them into phonemes. Then, we convert the phonemes to words and the words to the output transcript. The whole procedure is illustrated in the diagram below:

However, with end-to-end deep learning, we can just pass the audio clip through a neural network and directly predict the output transcript, without having to worry about any of the intermediate steps:

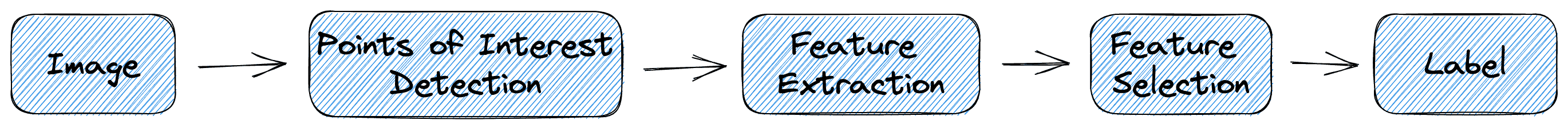

End-to-end deep learning proved very beneficial in image classification as well. Previously, image classification consisted of 3 necessary steps:

However, the rise of convolutional neural networks made it possible to train a model end to end, directly on the raw input pixels.
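As a rough illustration of how a convolutional layer turns raw pixels into features, the sketch below applies a single 2x2 kernel to a tiny grayscale image in plain Python. The function `conv2d`, the image, and the kernel values are all assumptions chosen for illustration. In particular, the kernel here is picked by hand, whereas in a trained convolutional network these weights are learned from the data.

```python
def conv2d(image, kernel):
    """Valid (no padding) 2D cross-correlation, the operation CNN layers compute."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[r + i][c + j] * kernel[i][j]
                for i in range(kh) for j in range(kw))
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]

# Raw 4x4 grayscale pixels: dark left half, bright right half.
image = [
    [0, 0, 9, 9],
    [0, 0, 9, 9],
    [0, 0, 9, 9],
    [0, 0, 9, 9],
]

# Hand-picked kernel that responds to dark-to-bright vertical edges.
kernel = [
    [-1, 1],
    [-1, 1],
]

feature_map = conv2d(image, kernel)
for row in feature_map:
    print(row)
# prints [0, 18, 0] three times: the response peaks where the edge is
```

The feature map is zero over the flat regions and large only at the boundary between the dark and bright halves, so the kernel acts as an edge detector. An end-to-end model stacks many such kernels and adjusts their values during training instead of having an engineer design them.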

Below, we can see the training pipeline before and after end-to-end deep learning:

Despite its benefits, end-to-end deep learning comes with some crucial challenges that we should also consider.

The most important of them is the need for a large amount of training data. Specifically, the most common architectures for end-to-end deep learning are various types of neural networks (like RNNs, CNNs, and Transformers). These networks require a large amount of data to learn useful features from the raw input data.
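One rough way to get a feel for this is to count trainable parameters. The sketch below does so for two hypothetical fully connected networks, one fed raw pixels and one fed a handful of handcrafted features. The layer sizes and the helper `dense_parameter_count` are assumptions for illustration, and parameter count is only a loose proxy for how much data a model needs, not a rule.

```python
def dense_parameter_count(layer_sizes):
    """Total weights plus biases of a fully connected network."""
    return sum(n_in * n_out + n_out
               for n_in, n_out in zip(layer_sizes, layer_sizes[1:]))

# Hypothetical: a 32x32 RGB image flattened to raw pixels, one hidden layer, 10 classes.
raw_pixel_net = [32 * 32 * 3, 256, 10]

# Hypothetical: the same 10 classes predicted from 20 handcrafted features.
handcrafted_net = [20, 16, 10]

print(dense_parameter_count(raw_pixel_net))    # 789258
print(dense_parameter_count(handcrafted_net))  # 506
```

Even this small raw-pixel network has over a thousand times more parameters to fit than the one working on handcrafted features, which gives some intuition for why learning directly from raw inputs tends to demand far more training examples.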

These data may be easy and cheap to get in domains like speech and vision. However, in tasks like cancer diagnosis, where we have to work with medical data, data acquisition may be very challenging and expensive. So, the available data are limited, and end-to-end deep learning is harder to apply.

Another challenge in end-to-end deep learning is the lack of model interpretability. Specifically, it is very hard to tell why a large neural network produced a specific output for a given input. For example, in the cancer diagnosis task above, a doctor cannot check why the model predicted that a patient does or does not have cancer. The doctor would simply have to trust the model, which is unacceptable and risky in such an important task.

In this article, we talked about end-to-end deep learning. First, we presented the term and the intuition behind it. Then, we discussed some of its common applications and challenges.