Last updated: March 18, 2024

In this tutorial, we’ll explore the different techniques file systems use to manage concurrent read and write operations.

We’ll also investigate the implications of the existing file management techniques. Finally, we’ll discuss how to design and develop efficient storage solutions.

Understanding how file systems handle concurrent read and write operations is essential for designing and developing efficient storage solutions. A file system is a software layer that allows users to interact with physical storage devices. Furthermore, it enables us to store and retrieve files in an organized fashion. However, it needs to handle multiple requests simultaneously.

Additionally, it’s responsible for keeping track of all the files as well as the location of each file on the computer. Moreover, it provides a way for the operating system and other software to access and manipulate those files.

There are many different types of file systems, each with its own set of rules and features. Some common types of file systems include NTFS, FAT, HFS+, and Ext4.

NTFS is a proprietary file system used by Microsoft Windows. It supports advanced features such as file permissions, encryption, and efficient management of huge files. On the other hand, FAT is an older file system that was widely used but has largely been replaced by modern file systems like NTFS. However, we still use FAT on USB drives and other removable storage devices.

Ext4 is widely used on Linux. It supports features such as file permissions and journaling. Additionally, it has built-in support for very large files. HFS+ was the default file system on Apple’s macOS until APFS replaced it.

In general, a file system provides a structure for storing and organizing files on a computer. It’s an essential part of any operating system.

Concurrent reading refers to the ability of multiple processes or threads to access and read data from a shared resource simultaneously. It can be useful in a variety of contexts, such as when multiple users need to access a shared database or when a program needs to perform multiple tasks concurrently. Concurrent read operations don’t change the data contained in a file. For example, reading the first line of a file or the first 10 bytes is a read operation.
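As a minimal sketch of concurrent reading, the following Python snippet lets several threads read the first 10 bytes of the same file at once. The helper names `read_prefix` and `concurrent_reads` are hypothetical and chosen only for illustration. Because no reader changes the file, no locking is needed here:

```python
from concurrent.futures import ThreadPoolExecutor


def read_prefix(path: str, size: int = 10) -> bytes:
    """Open the file independently and read its first `size` bytes."""
    with open(path, "rb") as f:
        return f.read(size)


def concurrent_reads(path: str, readers: int = 4) -> list[bytes]:
    """Run several readers at once; none of them modifies the file."""
    with ThreadPoolExecutor(max_workers=readers) as pool:
        return list(pool.map(read_prefix, [path] * readers))
```

Each reader opens its own file handle, so the readers don't share a file position and every call returns the same bytes.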

We can coordinate concurrent read operations using various synchronization mechanisms, such as locks and semaphores. These mechanisms ensure the data being read isn’t modified by another process or thread while it’s being accessed. This helps to prevent race conditions and other types of data corruption that can occur when multiple processes or threads try to access and modify shared data concurrently.

Concurrent write refers to the ability of multiple processes or threads to access and modify a shared resource simultaneously. These operations change the data contained in a file. For example, appending new data to the end of a file or replacing the first line of a file is a write operation.

Concurrent write operations can be more complex to implement than concurrent read operations. They involve modifying shared data and can lead to race conditions if not properly synchronized.
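To illustrate why writes need synchronization, here's a small Python sketch in which several threads append lines to one file. The names `_write_lock`, `append_line`, and `run_writers` are assumptions made for this example. The lock ensures that only one thread writes at a time, so lines from different writers can't interleave:

```python
import threading

# Hypothetical module-level lock that guards every append to the file.
_write_lock = threading.Lock()


def append_line(path: str, line: str) -> None:
    """Append one line while holding the lock."""
    with _write_lock:
        with open(path, "a", encoding="utf-8") as f:
            f.write(line + "\n")


def run_writers(path: str, writers: int = 8, lines_each: int = 50) -> None:
    """Start several writer threads and wait for all of them to finish."""

    def work(worker_id: int) -> None:
        for i in range(lines_each):
            append_line(path, f"writer-{worker_id} line-{i}")

    threads = [threading.Thread(target=work, args=(w,)) for w in range(writers)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
```

This lock only coordinates threads inside a single process. Coordinating separate processes requires a mechanism the operating system provides, such as file locks.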

Concurrent read and write operations can occur when multiple processes or threads need to access and potentially modify shared data. Some scenarios where concurrent read and write operations may occur include multi-user database systems, concurrent programming, distributed systems, and web applications.

File systems use various techniques to handle concurrent read and write operations. Here, we’ll present four techniques along with their implications for system performance: concurrent data structures, exclusive locks, semaphores, and caching.

File systems use concurrent data structures to handle parallel read and write operations. Using this technique, we can store the data needed for write operations in a concurrent data structure, so pending writes don’t block read operations. The main disadvantage of this technique is that it requires more memory to store the data. It also adds processing overhead, which can reduce system performance.
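The idea can be sketched in Python with the standard thread-safe `queue.Queue`. The `BufferedLog` class below is a hypothetical illustration, not how any particular file system is implemented. Writers enqueue records and return immediately, a single background thread applies them, and readers take a snapshot without waiting for pending writes:

```python
import queue
import threading


class BufferedLog:
    """Hypothetical sketch: writers enqueue records, one thread applies them."""

    def __init__(self) -> None:
        self._pending = queue.Queue()  # thread-safe FIFO of pending writes
        self._records: list[str] = []  # stands in for the stored data
        worker = threading.Thread(target=self._drain, daemon=True)
        worker.start()

    def write(self, record: str) -> None:
        """Queue the record and return without waiting for it to be applied."""
        self._pending.put(record)

    def read_all(self) -> list[str]:
        """Return a snapshot of the records applied so far."""
        return list(self._records)

    def flush(self) -> None:
        """Block until every queued record has been applied."""
        self._pending.join()

    def _drain(self) -> None:
        while True:
            record = self._pending.get()
            self._records.append(record)
            self._pending.task_done()
```

The queue holds every pending record in memory until the worker applies it, which is the extra memory cost mentioned above.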

Exclusive locks are the most straightforward technique for handling concurrent read and write operations. When a process or thread holds an exclusive lock on a resource, no other process or thread can access or modify the resource until the lock is released.

Exclusive locks are easy to use but often inefficient in practice. Readers need to acquire the lock before reading, and writers need to acquire it before writing. As a result, readers and writers compete for access to the same resource, and even readers that could safely run in parallel must wait for each other, potentially resulting in a bottleneck.
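On POSIX systems, one way to experiment with exclusive locks is Python's `fcntl.flock`, which takes an advisory lock on an open file. The sketch below assumes a Unix-like system, and the function names `try_exclusive_lock` and `demo_contention` are hypothetical. It shows that a second handle can't take the lock until the first handle releases it:

```python
import fcntl


def try_exclusive_lock(f) -> bool:
    """Try to take an exclusive advisory lock without blocking."""
    try:
        fcntl.flock(f, fcntl.LOCK_EX | fcntl.LOCK_NB)
        return True
    except BlockingIOError:
        return False  # another open file handle already holds the lock


def demo_contention(path: str) -> tuple[bool, bool, bool]:
    """Open the file twice and compete for the same exclusive lock."""
    with open(path, "a") as first, open(path, "a") as second:
        got_first = try_exclusive_lock(first)
        got_second = try_exclusive_lock(second)  # contends with `first`
        fcntl.flock(first, fcntl.LOCK_UN)        # release the lock
        got_second_after = try_exclusive_lock(second)
        fcntl.flock(second, fcntl.LOCK_UN)
    return got_first, got_second, got_second_after
```

Advisory locks only work when every participant asks for the lock. A process that skips the `flock` call can still read or write the file.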

A semaphore is a synchronization object that controls access by multiple processes or threads to shared resources in a parallel programming environment. It’s essentially a counter stored in memory that processes atomically decrement to acquire access and increment to release it. The main disadvantage of this technique is that it requires additional coordination between multiple processes. As a result, it increases the system’s complexity.
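As a rough illustration, the Python sketch below uses `threading.Semaphore` to cap how many threads may use a shared resource at the same time. The function `run_with_limit` and its bookkeeping variables are assumptions made for this example, and the short sleep stands in for real file access:

```python
import threading
import time


def run_with_limit(workers: int, limit: int) -> int:
    """Return the peak number of workers inside the guarded section."""
    slots = threading.Semaphore(limit)  # counter starts at `limit`
    state_lock = threading.Lock()       # protects the bookkeeping below
    active = 0
    peak = 0

    def access_resource() -> None:
        nonlocal active, peak
        with slots:  # decrements the counter; blocks while it is zero
            with state_lock:
                active += 1
                peak = max(peak, active)
            time.sleep(0.01)  # stand-in for reading the shared file
            with state_lock:
                active -= 1
        # leaving the `with slots` block increments the counter again

    threads = [threading.Thread(target=access_resource) for _ in range(workers)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return peak
```

With `limit` set to 1, the semaphore behaves like an exclusive lock. Larger values allow a bounded number of threads to proceed in parallel.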

Caching is another method that can reduce the impact of concurrent read and write operations by temporarily storing data in a separate, high-speed memory location. It requires additional space, in memory or on the storage device, which reduces the capacity available for other data.
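A simplified way to picture caching is a write-back cache placed in front of a slower store. In the Python sketch below, the `WriteBackCache` class is hypothetical and a plain dictionary stands in for the storage device. Reads and writes hit the cache first, and modified entries reach the backing store only when we flush:

```python
import threading


class WriteBackCache:
    """Hypothetical sketch of a write-back cache in front of a slow store."""

    def __init__(self, backing_store: dict[str, bytes]) -> None:
        self._store = backing_store          # stands in for the disk
        self._cache: dict[str, bytes] = {}   # fast in-memory copies
        self._dirty: set[str] = set()        # keys not yet written back
        self._lock = threading.Lock()

    def read(self, key: str) -> bytes:
        with self._lock:
            if key not in self._cache:
                # Cache miss: load the value from the slow store once.
                self._cache[key] = self._store[key]
            return self._cache[key]

    def write(self, key: str, data: bytes) -> None:
        with self._lock:
            self._cache[key] = data  # fast path: update memory only
            self._dirty.add(key)

    def flush(self) -> None:
        """Write every modified entry back to the backing store."""
        with self._lock:
            for key in self._dirty:
                self._store[key] = self._cache[key]
            self._dirty.clear()
```

Until `flush` runs, the new data exists only in the cache, so the sketch also shows the trade-off: faster writes in exchange for extra space and a window in which the store is out of date.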

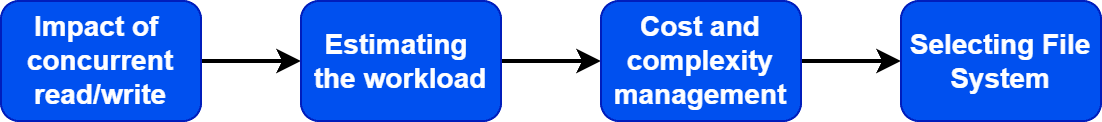

Now we know the various techniques that can handle concurrent read and write operations. Let’s discuss how to design an efficient storage solution in four simple steps:

The first step in designing an efficient storage solution is understanding the implications of concurrent read and write operations. Additionally, we need to estimate the expected workload. Generally, an efficient storage solution becomes more critical as the number of concurrent read and write operations grows.

Furthermore, we should also consider the cost and complexity of the existing strategies for managing concurrent read and write operations. Cost and complexity directly impact the performance of the system. For example, fast storage technologies like SSDs are well-suited for storing frequently accessed data but are costly. On the other hand, HDDs are better for storing infrequently accessed data and are less costly compared to SSDs. However, file operations on HDDs are much slower than on SSDs.

Finally, selecting the appropriate file system for the job is essential. Some file systems are designed to handle heavy write operations and simultaneous access better than others.

Additionally, monitoring and analyzing usage patterns can help us to identify opportunities to optimize storage infrastructure and improve efficiency.

Let’s discuss an example to understand how file systems handle concurrent read and write operations.

Suppose we have a text file data.txt stored on a file system. This file is accessed by two processes: Process A and Process B. Process A wants to read the file, while Process B wants to write to the file.

In this case, the file system can use a lock to prevent Process B from modifying the file until Process A has finished reading it. This ensures that the file remains consistent and prevents data corruption.

Once Process A finishes reading the file, the lock is released, allowing Process B to access and write to it. The file system may then temporarily hold the new data in cache memory, which lets the write operation complete quickly.

After the write operation completes, the file system updates the file on disk with the new data. Therefore, the changes will be visible to other processes that want to access the file. In this way, we can efficiently handle concurrent read and write operations while ensuring that the file system remains consistent.
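The scenario can be approximated in user space with advisory file locks. The Python sketch below assumes a POSIX system, and the function names `read_file` and `append_to_file` are hypothetical. The reader plays the role of Process A and takes a shared lock, while the writer plays the role of Process B, takes an exclusive lock, and then asks the operating system to push the cached data to disk:

```python
import fcntl
import os


def read_file(path: str) -> str:
    """Process A: read under a shared lock so writers wait until we finish."""
    with open(path, "r", encoding="utf-8") as f:
        fcntl.flock(f, fcntl.LOCK_SH)
        try:
            return f.read()
        finally:
            fcntl.flock(f, fcntl.LOCK_UN)


def append_to_file(path: str, text: str) -> None:
    """Process B: write under an exclusive lock, then push the data to disk."""
    with open(path, "a", encoding="utf-8") as f:
        fcntl.flock(f, fcntl.LOCK_EX)  # blocks while a reader holds LOCK_SH
        try:
            f.write(text)
            f.flush()             # user-space buffer -> OS cache
            os.fsync(f.fileno())  # OS cache -> storage device
        finally:
            fcntl.flock(f, fcntl.LOCK_UN)
```

A shared lock lets several readers proceed together, and the exclusive lock makes the writer wait for all of them. This avoids the reader-versus-reader contention that a single exclusive lock would cause.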

In this tutorial, we explored the different strategies that file systems use to manage concurrent read and write operations.

Finally, we also discussed how to design and develop efficient storage solutions.