Learn through the super-clean Baeldung Pro experience:

>> Membership and Baeldung Pro.

No ads, dark-mode and 6 months free of IntelliJ Idea Ultimate to start with.

Last updated: August 26, 2024

In this tutorial, we’ll study binomial heaps. We use them to implement priority queues and discrete-event simulation for queuing systems.

A binomial heap is based on a binomial tree. So first, we’ll briefly introduce binomial trees.

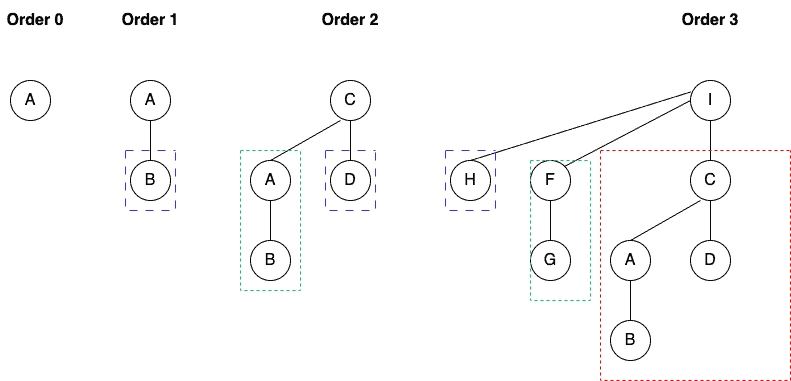

Formally, we can define a binomial tree of order

recursively:

The root of the binomial tree has

children (i.e., its degree is

), where the

child (

) is again a binomial tree of order

:

As we see, it’s a recursive and ordered data structure.

A binomial tree of order

has

nodes, and its height is

.

We define a binomial heap as a set of binomial trees satisfying the min-heap property.

That means that the value of each node is the minimum of the values in its sub-tree.

Further, we link root nodes of the binomial trees in the binomial heap to get a linked list .

Formally, a binomial heap is a collection of binomial trees

such that:

The first property tells us the minimal elements of the trees are in the linked list. The second property tells us that a binomial heap with

nodes has

binomial trees.

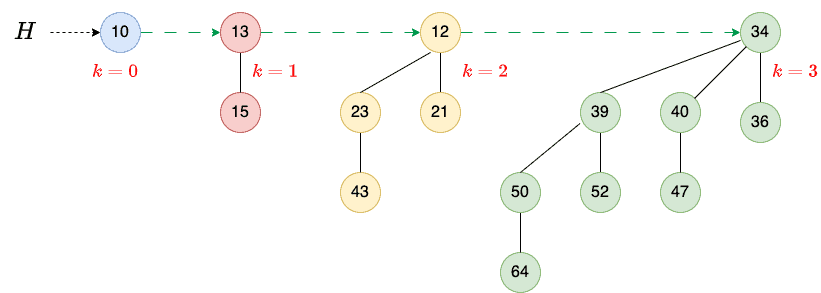

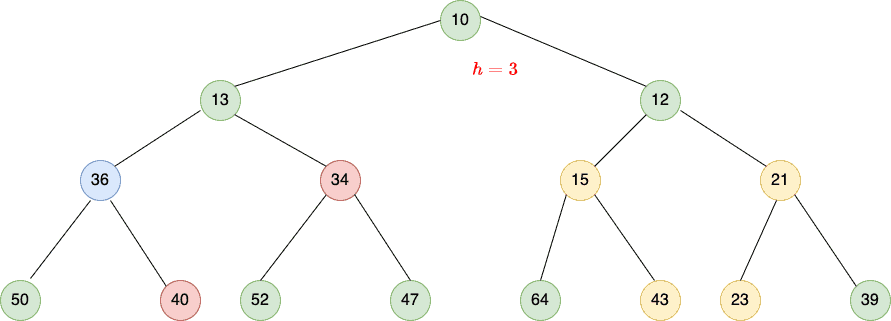

Let’s check out an example:

Here, the heap (15 nodes) consists of four binomial trees:

(1 node),

(2 nodes),

(4 nodes), and

(8 nodes).

Each binomial tree is heap-ordered, and the trees’ orders are unique. We can also see a linked list containing the root nodes in the increasing order of their degrees.

Let’s learn the basic operations we can perform on a binomial heap:

A binomial heap has other interesting operations, e.g., deleting a node, extracting a node with a minimum key, and changing the key of a particular node. However, we won’t cover them in this tutorial.

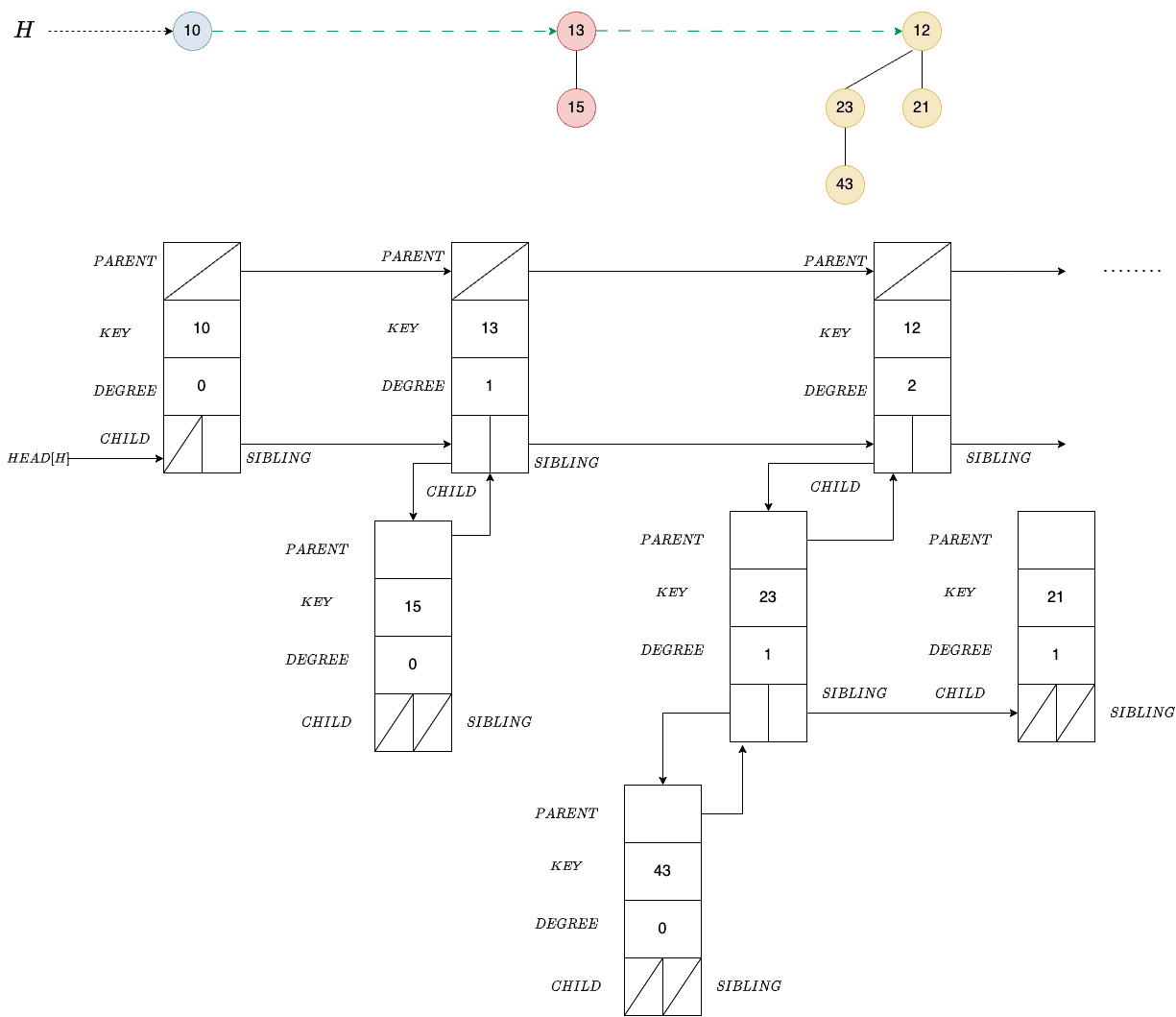

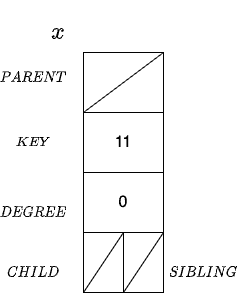

We can represent each node in the binomial heap

with the following data structure:

We can implement the heap without PARENT and DEGREE, but they make the algorithms for the operations easier to understand and formulate.

Additionally, we’ll use HEAD to point to the leftmost binomial tree of the heap . Each node in the binomial tree in a heap keeps track of all its children. For instance:

The parent pointers of the root and child and sibling pointers of the leaves are empty. The sibling pointer of a root points to the next tree’s root.

To create an empty heap, we allocate the memory for the head node and set the pointers to empty values (usually denoted as , NIL, or NULL in textbooks).

This is an operation regarding space and time.

We already know that the root of a binomial tree in a binomial heap contains the tree’s minimum key.

Since the roots are in a linked list, we must traverse it to find the minimum.

This operation’s time complexity is , where

is the total number of nodes in the binomial heap

. This is so because a binomial heap with

nodes has at most

trees.

Let and

be two binomial heaps with

and

nodes.

We’ll use a helper method of merging two binomial trees of the same degree. The result of merging two trees of order is a tree of order

:

algorithm MergeBinomialTree(T1, T2):

// INPUT

// T1 = binomial tree of the order k

// T2 = binomial tree of the order k

// OUTPUT

// binomial tree of the order k+1 with total nodes as sum of nodes of T1 and T2

// get root nodes of both trees

R1 <- ROOT(T1)

R2 <- ROOT(T2)

// Make a tree with the larger key a child of the other tree to keep the min-heap property

if KEY(R1) >= KEY(R2):

R2 <- PARENT(R1)

SIBLING(R1) <- CHILD(R2)

CHILD(R2) <- R1

DEGREE(R2) <- DEGREE(R2) + 1

return T2

else:

R1 <- PARENT(R2)

SIBLING(R2) <- CHILD(R1)

CHILD(R1) <- R2

DEGREE(R1) <- DEGREE(R1) + 1

return T1We first compare the root nodes’ keys. To keep the min-heap property, the root with a smaller key should become the parent of the other tree’s root.

Since we use a fixed number of pointers and variables in a node structure, this operation has a constant time complexity .

The idea is to merge the sorted root lists of the input heaps and

so that it’s also sorted. We can do that using MergeSort for sorted linked lists. Then, we traverse the list merging the successive pairs of roots with the same degree to make sure the resulting heap has at most one tree of any particular order:

algorithm MergeBinomialHeaps(H1, H2):

// INPUT

// H1 = the first binomial heap

// H2 = the second binomial heap

// OUTPUT

// H = the merged heap

LH <- merge the root lists of H1 and H2 into a linked list sorted in non-decreasing order

if LH is empty:

return an empty heap

// Start with the beginning of the list

current <- the first node in LH

next <- SIBLING(current)

// Traverse LH merging the successive pairs of the root nodes with the same degrees

while next != null:

if DEGREE(current) != DEGREE(next):

if DEGREE(current) > DEGREE(next):

// Fix the nodes that are out of order

Switch current and next

// Move on to the next pair

current <- next

next <- SIBLING(current)

else:

// The current and next nodes have the same degree, so we merge their trees

SIBLING(current) <- SIBLING(next)

current <- the root of the tree we get by merging next and current

next <- SIBLING(current)

H <- the head of the heap whose root list is LH

return HThe list is sorted in the non-decreasing order. For any possible degree, it contains at most two roots of that degree. Furthermore, any two roots with the same degree will be next to each other in the list.

In each while loop iteration, we examine the degrees of two neighboring roots ( and

) and merge their trees if they’re equal. If so, we store the resulting tree’s root in the place of the left neighbor.

Otherwise, we check if the left neighbor has a greater degree than the right one. This can happen if the degree sequence contains the pattern :

Without switching, we won’t correctly merge the trees if the first degree after the pattern is . So, for example, we would have

and miss merging the

trees if we don’t switch the first two in the triple.

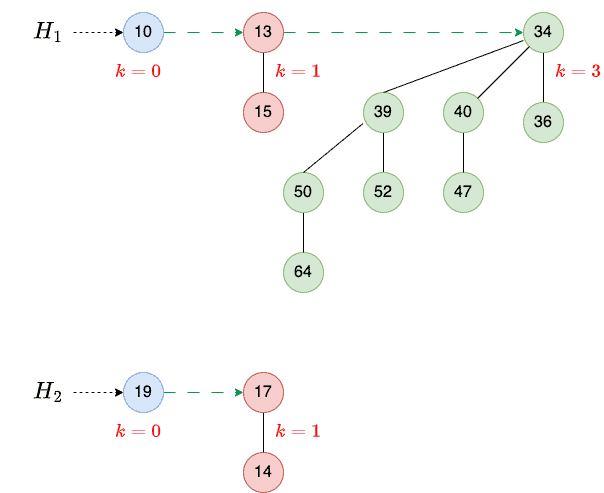

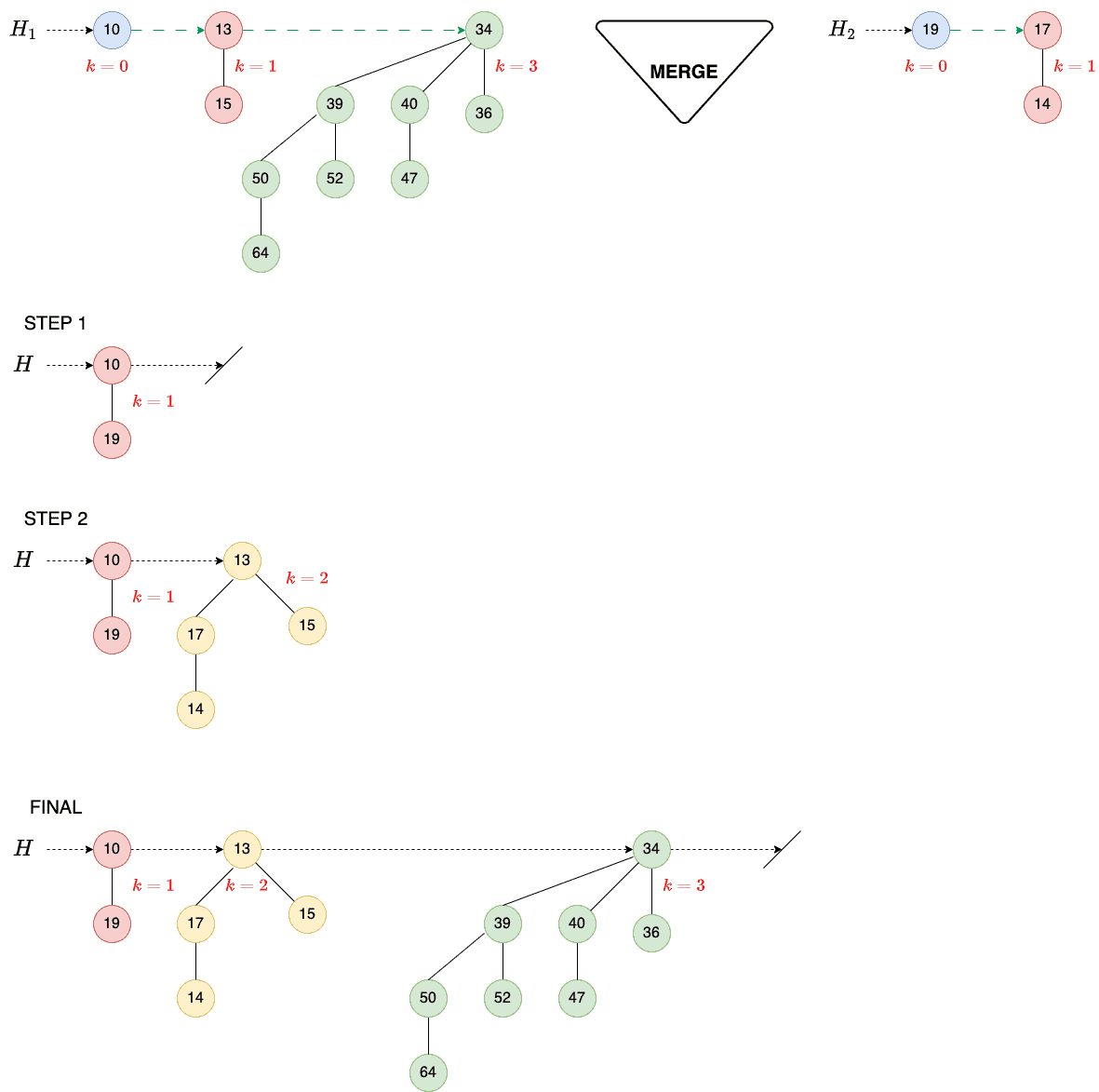

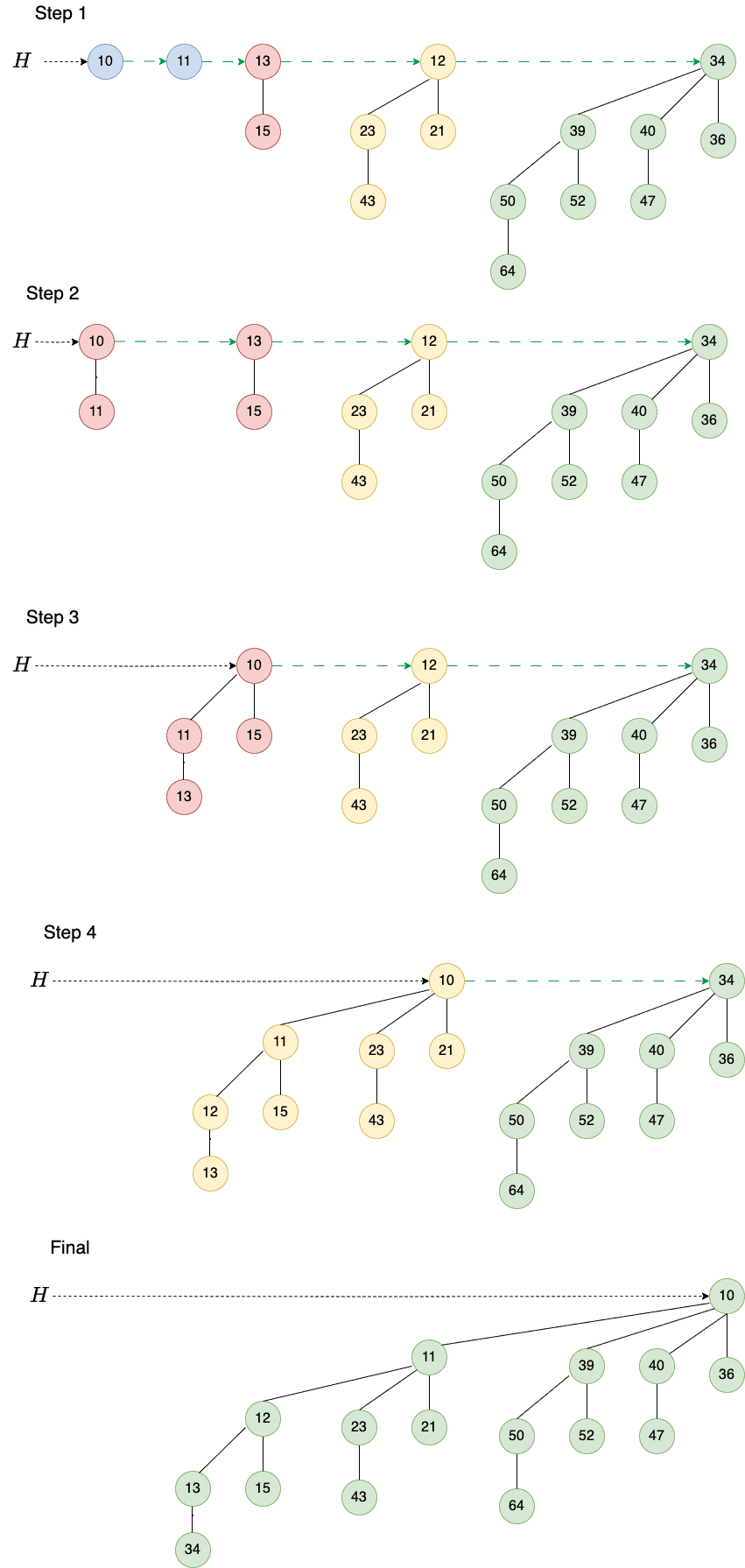

Let’s understand the Merge operation with an example. So, we have two heaps:

We first look at and

to find binomial trees of order 0. We combine them to get a binomial tree of order 1 (step 1). Then, we combine trees of order 1 to get a tree of order 2 (step 2). There’s only one tree of order 3, so we add it to the new heap:

So, our final heap contains three trees of orders 1, 2, and 3.

Our heaps and

have

and

nodes. The resulting heap

has

nodes.

Now, contains at most

trees and

contains at most

trees, so

will contain at most

trees.

Merging the sorted lists takes time. Since no more than

mergers, switches, and pointer movements are possible, the worst-case time complexity is

.

The right-hand side follows from taking the logarithms of:

To insert a new key into

, we first initialize a new node (

) and set its key to

. Then, we create a one-node binomial heap

with

as its root and merge it with

:

algorithm InsertKeyIntoHeap(H, x):

// INPUT

// H = the binomial heap

// x = the new key to be inserted

// OUTPUT

// H with x inserted

H1 <- make a new heap containing only a node with x as its key

H <- merge H and H1

Delete H1

return HWe complete the procedure by deleting the temporary binomial heap , i.e., freeing its memory.

This operation’s time and space complexity is governed by the time and space complexity of merging two heaps.

For the insert, we have and

=1. Thus, its time complexity is

, and its space complexity is

.

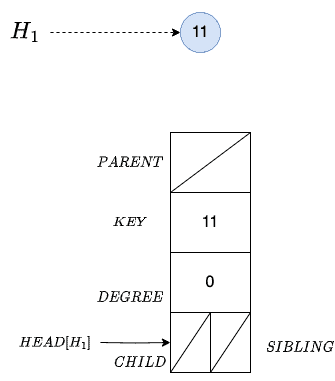

Let’s add a node with key 11 to our heap

. So, we first assign memory and create node

:

Then, we create an empty heap and set its head to node x:

As a result, we now have two heaps, and

:

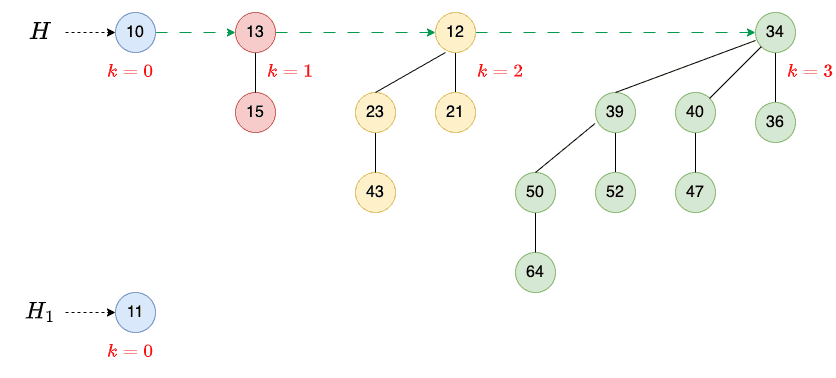

Then, we merge them:

The result is a binomial heap containing a single binomial tree of order 4.

A binary heap with height is a binary tree that can hold any number of elements up to

:

On the other hand, a binomial heap is a collection of smaller binomial trees where the number of elements in a binomial tree of order is

. So, we know the number of nodes in the binomial tree without looking into it.

Further, the merge operation for two binomial heaps with

nodes and

with

nodes has the complexity of

. This is faster than that of a binary heap (

with the same number of nodes).

On the flip side, finding the minimum key in a binary heap is a constant-time operation since the root of a binary heap always contains the minimum key. In contrast, we need to search a list of roots in a binomial heap to find the minimum.

In this article, we explained binomial heaps and their properties and studied the operations we can perform on them.

We primarily use binomial heaps to implement priority queues. We merge them more efficiently than binary heaps, although finding the minimum in a binary heap is faster.