1. Introduction

Today, it’s not uncommon for applications to serve thousands or even millions of users concurrently. Such applications need enormous amounts of memory. However, managing all that memory may easily impact application performance.

To address this issue, Java 11 introduced the Z Garbage Collector (ZGC) as an experimental garbage collector (GC) implementation. Moreover, with the implementation of JEP-377, it became a production-ready feature in JDK 15.

In this tutorial, we’ll see how ZGC manages to keep low pause times on even multi-terabyte heaps.

2. Main Concepts

To understand how ZGC works, we need to understand the basic concepts and terminology behind memory management and garbage collectors.

2.1. Memory Management

Physical memory is the RAM that our hardware provides.

The operating system (OS) allocates virtual memory space for each application.

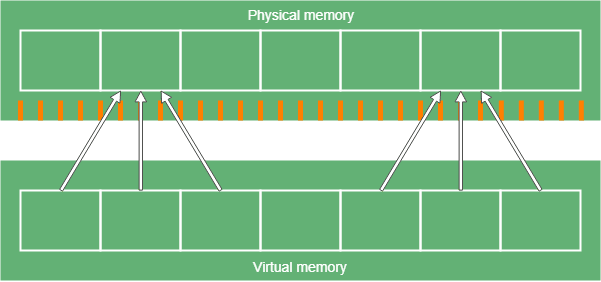

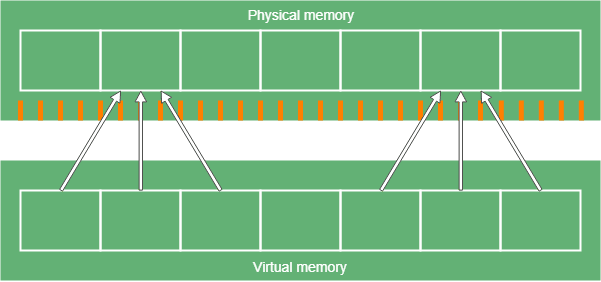

Of course, we store virtual memory in physical memory, and the OS is responsible for maintaining the mapping between the two. This mapping usually involves hardware acceleration.

2.2. Multi-Mapping

Multi-mapping means that there are specific addresses in the virtual memory that point to the same address in physical memory. Since applications access data through virtual memory, they know nothing about this mechanism (and they don’t need to).

Effectively, we map multiple ranges of the virtual memory to the same range in the physical memory.

At first glance, its use cases aren’t obvious, but we’ll see later that ZGC needs it to do its magic. Also, it provides some security because it separates the memory spaces of the applications.
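To get a feeling for multi-mapping from plain Java, we can map the same region of a file twice. This is only a rough analogy, not how ZGC does it inside the JVM: the class name, the temporary file, and the 4096-byte size are assumptions made for this sketch, and whether the second view sees the write immediately depends on the operating system (it does on common platforms):

```java
import java.io.IOException;
import java.io.UncheckedIOException;
import java.nio.MappedByteBuffer;
import java.nio.channels.FileChannel;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.StandardOpenOption;

public class MultiMappingDemo {

    // Maps the same file region twice, writes through the first view,
    // and returns whatever the second view reads at the same offset.
    static long writeViaOneViewReadViaOther(long value) {
        try {
            Path backing = Files.createTempFile("multi-mapping", ".bin");
            backing.toFile().deleteOnExit();
            try (FileChannel channel = FileChannel.open(backing,
                    StandardOpenOption.READ, StandardOpenOption.WRITE)) {
                // Two independent mappings: two virtual ranges over the same data
                MappedByteBuffer viewA = channel.map(FileChannel.MapMode.READ_WRITE, 0, 4096);
                MappedByteBuffer viewB = channel.map(FileChannel.MapMode.READ_WRITE, 0, 4096);

                viewA.putLong(0, value);  // write through the first range
                return viewB.getLong(0);  // read through the second range
            }
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static void main(String[] args) {
        System.out.println(writeViaOneViewReadViaOther(42L));
    }
}
```

Both buffers have their own virtual addresses, yet they’re backed by the same underlying pages, which is the property multi-mapping relies on.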

2.3. Relocation

Since we use dynamic memory allocation, the memory of an average application becomes fragmented over time. This happens because, when we free an object in the middle of the memory, a gap of free space remains there. Over time, these gaps accumulate, and our memory will look like a chessboard made of alternating areas of free and used space.

Of course, we could try to fill these gaps with new objects. To do this, we’d have to scan the memory for a free space that’s big enough to hold our object. This is an expensive operation, especially if we have to do it each time we want to allocate memory. Besides, the memory will still be fragmented, since we probably won’t find a free space of exactly the size we need. Therefore, there will still be gaps between the objects, although smaller ones. We can try to minimize these gaps, but that uses even more processing power.

The other strategy is to frequently relocate objects from fragmented memory areas to free areas in a more compact format. To be more effective, we split the memory space into blocks. We relocate all objects in a block or none of them. This way, memory allocation will be faster since we know there are whole empty blocks in the memory.
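The block-based strategy can be sketched with a toy model. Everything here is hypothetical and only meant to illustrate the idea: a block is a list of live object ids, `BLOCK_CAPACITY` is an invented slot count, and `threshold` decides which blocks count as sparse enough to evacuate:

```java
import java.util.ArrayList;
import java.util.List;

public class BlockCompactionSketch {

    static final int BLOCK_CAPACITY = 8; // hypothetical number of slots per block

    // Blocks holding at most `threshold` live objects are evacuated as a whole;
    // denser blocks are left untouched. Returns the blocks still in use.
    static List<List<Integer>> compact(List<List<Integer>> blocks, int threshold) {
        List<List<Integer>> result = new ArrayList<>();
        List<Integer> target = new ArrayList<>(); // fresh block being filled
        for (List<Integer> block : blocks) {
            if (block.size() > threshold) {
                result.add(new ArrayList<>(block)); // dense block: keep as-is
                continue;
            }
            for (int objectId : block) {            // sparse block: move all objects
                if (target.size() == BLOCK_CAPACITY) {
                    result.add(target);
                    target = new ArrayList<>();
                }
                target.add(objectId);
            }
        }
        if (!target.isEmpty()) {
            result.add(target);
        }
        return result;
    }

    public static void main(String[] args) {
        List<List<Integer>> heap = List.of(
            List.of(1, 2, 3, 4, 5, 6, 7), List.of(8), List.of(9, 10), List.of(11));
        System.out.println(compact(heap, 2));
    }
}
```

In this example, the three sparse blocks are merged into one, so two whole blocks become free for fast allocation.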

2.4. Garbage Collection

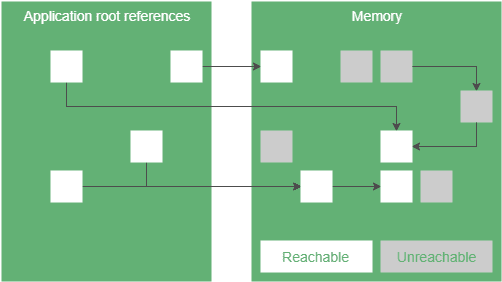

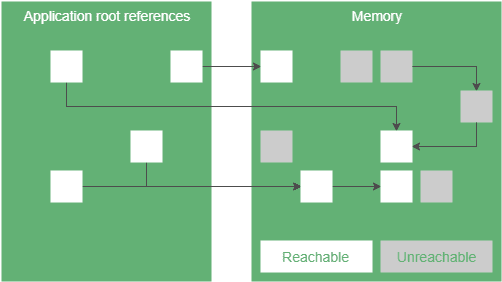

When we create a Java application, we don’t have to free the memory we allocated, because garbage collectors do it for us. In summary, a GC tracks which objects we can reach from our application through a chain of references and frees up the ones we can’t reach.

A GC needs to track the state of the objects in the heap space to do its work. For example, a possible state is reachable. It means the application holds a reference to the object. This reference might be transitive. The only thing that matters is that the application can access these objects through references. Another example is finalizable: objects that we can no longer access. These are the objects we consider garbage.
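Reachability through a chain of references can be sketched as a simple graph traversal. This is a toy model, not a real collector: objects are represented by hypothetical string names, and the `references` map stands in for the fields of each object:

```java
import java.util.ArrayDeque;
import java.util.Deque;
import java.util.HashSet;
import java.util.List;
import java.util.Map;
import java.util.Set;

public class ReachabilitySketch {

    // `references` maps an object to the objects it points to.
    // Returns every object reachable from the given roots, directly or transitively.
    static Set<String> reachable(Map<String, List<String>> references, List<String> roots) {
        Set<String> marked = new HashSet<>(roots);
        Deque<String> worklist = new ArrayDeque<>(roots);
        while (!worklist.isEmpty()) {
            String current = worklist.pop();
            for (String target : references.getOrDefault(current, List.of())) {
                if (marked.add(target)) { // true only on the first visit
                    worklist.push(target);
                }
            }
        }
        return marked;
    }

    public static void main(String[] args) {
        Map<String, List<String>> heap = Map.of(
            "a", List.of("b"),
            "b", List.of("c"),
            "c", List.of(),
            "d", List.of("c"));
        // "d" points to "c", but nothing points to "d", so "d" is garbage
        System.out.println(reachable(heap, List.of("a")));
    }
}
```

Everything that doesn’t end up in the returned set is a candidate for collection.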

To achieve this, garbage collectors have multiple phases.

2.5. GC Phase Properties

GC phases can have different properties:

- a parallel phase can run on multiple GC threads

- a serial phase runs on a single thread

- a stop-the-world phase can’t run concurrently with application code

- a concurrent phase can run in the background, while our application does its work

- an incremental phase can terminate before finishing all of its work and continue it later

Note that all of the above techniques have their strengths and weaknesses. For example, let’s say we have a phase that can run concurrently with our application. A serial implementation of this phase requires 1% of the overall CPU performance and runs for 1000ms. In contrast, a parallel implementation utilizes 30% of CPU and completes its work in 50ms.

In this example, the parallel solution uses more CPU overall, because it may be more complex and has to synchronize the threads. For CPU-heavy applications (for example, batch jobs), it’s a problem since we have less computing power to do useful work.

Of course, this example has made-up numbers. However, it’s clear that all applications have their characteristics, so they have different GC requirements.
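To make the comparison concrete, we can multiply the CPU share by the duration for both variants. The helper below is a hypothetical illustration using the same made-up numbers:

```java
public class GcCostSketch {

    // CPU time consumed = share of the CPU in use * wall-clock duration
    static double cpuMillis(int cpuPercent, long durationMillis) {
        return cpuPercent * durationMillis / 100.0;
    }

    public static void main(String[] args) {
        double serial = cpuMillis(1, 1000);  // 1% of the CPU for 1000ms
        double parallel = cpuMillis(30, 50); // 30% of the CPU for 50ms
        System.out.println("serial: " + serial + ", parallel: " + parallel);
    }
}
```

The serial phase consumes 10ms of CPU time, while the parallel one consumes 15ms: it finishes 20 times sooner but costs 50% more CPU in total.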

For more detailed descriptions, please visit our article on Java memory management.

3. ZGC Concepts

ZGC intends to provide stop-the-world phases that are as short as possible. It achieves this in such a way that the duration of these pauses doesn’t increase with the heap size. These characteristics make ZGC a good fit for server applications, where large heaps are common, and fast application response times are a requirement.

On top of the tried and tested GC techniques, ZGC introduces new concepts, which we’ll cover in the following sections.

But for now, let’s take a look at the overall picture of how ZGC works.

3.1. Big Picture

ZGC has a phase called marking, where we find the reachable objects. A GC can store object state information in multiple ways. For example, we could create a Map, where the keys are memory addresses, and the value is the state of the object at that address. It’s simple but needs additional memory to store this information. Also, maintaining such a map can be challenging.

ZGC uses a different approach: it stores the reference state in the bits of the reference itself. This is called reference coloring. But this way we have a new challenge. Setting bits of a reference to store metadata about an object means that multiple references can point to the same object, since the state bits don’t hold any information about the location of the object. Multi-mapping to the rescue!

We also want to decrease memory fragmentation. ZGC uses relocation to achieve this. But with a large heap, relocation is a slow process. Since ZGC doesn’t want long pause times, it does most of the relocating in parallel with the application. But this introduces a new problem.

Let’s say we have a reference to an object. ZGC relocates it, and a context switch occurs, where the application thread runs and tries to access this object through its old address. ZGC uses load barriers to solve this. A load barrier is a piece of code that runs when a thread loads a reference from the heap – for example, when we access a non-primitive field of an object.

In ZGC, load barriers check the metadata bits of the reference. Depending on these bits, ZGC may perform some processing on the reference before we get it. Therefore, it might produce an entirely different reference. We call this remapping.

3.2. Marking

ZGC breaks marking into three phases.

The first phase is a stop-the-world phase. In this phase, we look for root references and mark them. Root references are the starting points to reach objects in the heap, for example, local variables or static fields. Since the number of root references is usually small, this phase is short.

The next phase is concurrent. In this phase, we traverse the object graph, starting from the root references. We mark every object we reach. Also, when a load barrier detects an unmarked reference, it marks it too.

The last phase is also a stop-the-world phase to handle some edge cases, like weak references.

At this point, we know which objects we can reach.

ZGC uses the marked0 and marked1 metadata bits for marking.

3.3. Reference Coloring

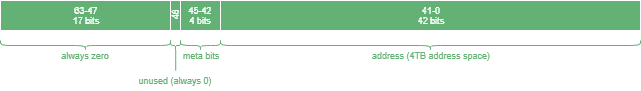

A reference represents the position of a byte in the virtual memory. However, we don’t necessarily have to use all bits of a reference to do that – some bits can represent properties of the reference. That’s what we call reference coloring.

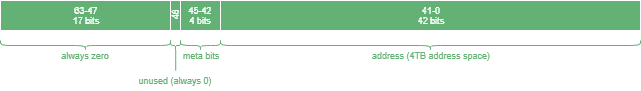

With 32 bits, we can address 4 gigabytes. Since it’s common nowadays for a computer to have more memory than this, we obviously can’t use any of these 32 bits for coloring. Therefore, ZGC uses 64-bit references. It means ZGC is only available on 64-bit platforms.

ZGC references use 42 bits to represent the address itself. As a result, ZGC references can address 4 terabytes of memory space.

On top of that, we have 4 bits to store reference states:

- finalizable bit – the object is only reachable through a finalizer

- remap bit – the reference is up to date and points to the current location of the object (see relocation)

- marked0 and marked1 bits – these are used to mark reachable objects

We also call these bits metadata bits. In ZGC, precisely one of these metadata bits is 1.
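A colored reference can be modelled with plain bit masks on a `long`. Note the assumptions in this sketch: the text only tells us there are 42 address bits and 4 metadata bits, so the exact position and order of the metadata bits, as well as all constant and method names, are chosen here for illustration:

```java
public class ColoredReferenceSketch {

    static final int ADDRESS_BITS = 42;
    static final long ADDRESS_MASK = (1L << ADDRESS_BITS) - 1;

    // Assumed layout: the four metadata bits sit directly above the address bits
    static final long MARKED0     = 1L << ADDRESS_BITS;
    static final long MARKED1     = 1L << (ADDRESS_BITS + 1);
    static final long REMAPPED    = 1L << (ADDRESS_BITS + 2);
    static final long FINALIZABLE = 1L << (ADDRESS_BITS + 3);
    static final long METADATA_MASK = MARKED0 | MARKED1 | REMAPPED | FINALIZABLE;

    // Replaces the color of a reference while keeping its address bits
    static long color(long reference, long metadataBit) {
        return (reference & ADDRESS_MASK) | metadataBit;
    }

    // Strips the metadata bits and returns the plain address
    static long address(long reference) {
        return reference & ADDRESS_MASK;
    }

    static boolean has(long reference, long metadataBit) {
        return (reference & metadataBit) != 0;
    }

    // 2^42 bytes, which is 4 terabytes
    static long addressableBytes() {
        return 1L << ADDRESS_BITS;
    }

    public static void main(String[] args) {
        long ref = color(0x1234L, REMAPPED);
        System.out.println(Long.toHexString(ref) + " -> " + Long.toHexString(address(ref)));
    }
}
```

Because `color` clears the old metadata bits before setting the new one, exactly one metadata bit is set at any time, as described above.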

3.4. Relocation

In ZGC, relocation consists of the following phases:

- A concurrent phase, which looks for blocks we want to relocate and puts them in the relocation set.

- A stop-the-world phase relocates all root references in the relocation set and updates their references.

- A concurrent phase relocates all remaining objects in the relocation set and stores the mapping between the old and new addresses in the forwarding table.

- The rewriting of the remaining references happens in the next marking phase. This way, we don’t have to traverse the object tree twice. Alternatively, load barriers can do it, as well.

Before JDK 16, ZGC performed relocation by using a heap reserve. Starting with JDK 16, however, ZGC supports in-place relocation, which helps avoid OutOfMemoryError situations when garbage collection is required on a completely filled heap.

3.5. Remapping and Load Barriers

Note that in the relocation phase, we didn’t rewrite most of the references to the relocated addresses. Therefore, using those references, we wouldn’t access the objects we wanted to. Even worse, we could access garbage.

ZGC uses load barriers to solve this issue. Load barriers fix the references pointing to relocated objects with a technique called remapping.

When the application loads a reference, it triggers the load barrier, which then follows the following steps to return the correct reference:

- First, we check whether the remap bit is set to 1. If so, it means that the reference is up to date, so we can safely return it.

- Then we check whether the referenced object was in the relocation set or not. If it wasn’t, that means we didn’t want to relocate it. To avoid this check next time we load this reference, we set the remap bit to 1 and return the updated reference.

- Now we know that the object we want to access was the target of relocation. The only question is whether the relocation has already happened. If the object has been relocated, we skip to the next step. Otherwise, we relocate it now and create an entry in the forwarding table, which stores the new address for each relocated object. After this, we continue with the next step.

- Now we know that the object was relocated, either by ZGC, by us in the previous step, or by the load barrier during an earlier hit of this object. We update this reference to the new location of the object (either with the address from the previous step or by looking it up in the forwarding table), set the remap bit, and return the reference.

And that’s it. With the steps above, we ensure that each time we try to access an object, we get the most recent reference to it. Since every reference load triggers the load barrier, it decreases application performance, especially the first time we access a relocated object. But this is a price we have to pay if we want short pause times. And since these steps are relatively fast, the barrier doesn’t impact application performance significantly.
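The four steps of the load barrier can be sketched in plain Java. This is a single-threaded toy model under several assumptions, not the JVM’s implementation: the bit position of the remap bit, the `relocationSet` of old addresses, the bump-pointer `relocate` helper, and the fixed 16-byte object size are all invented for illustration, and a real barrier also has to cope with concurrent threads:

```java
import java.util.HashMap;
import java.util.HashSet;
import java.util.Map;
import java.util.Set;

public class LoadBarrierSketch {

    static final long ADDRESS_MASK = (1L << 42) - 1; // 42 address bits
    static final long REMAPPED = 1L << 44;           // assumed position of the remap bit

    final Set<Long> relocationSet = new HashSet<>();         // old addresses chosen for relocation
    final Map<Long, Long> forwardingTable = new HashMap<>(); // old address -> new address
    long nextFreeAddress = 0x10_0000L;                       // hypothetical bump allocator

    long loadBarrier(long reference) {
        // Step 1: the remap bit is set, so the reference is already up to date
        if ((reference & REMAPPED) != 0) {
            return reference;
        }
        long address = reference & ADDRESS_MASK;

        // Step 2: the object isn't a relocation target; just set the remap bit
        if (!relocationSet.contains(address)) {
            return address | REMAPPED;
        }

        // Step 3: relocate the object now, unless somebody already did
        Long newAddress = forwardingTable.get(address);
        if (newAddress == null) {
            newAddress = relocate(address);
            forwardingTable.put(address, newAddress);
        }

        // Step 4: point the reference to the new location and set the remap bit
        return newAddress | REMAPPED;
    }

    // Stand-in for copying the object: only hands out a new address
    long relocate(long oldAddress) {
        long newAddress = nextFreeAddress;
        nextFreeAddress += 16; // hypothetical fixed object size
        return newAddress;
    }
}
```

Calling `loadBarrier` a second time with the returned reference hits the first step and returns immediately, which is why only the first access to a relocated object pays the full cost.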

4. How to Enable ZGC?

We can enable ZGC for JDK 15 and newer versions by using the -XX:+UseZGC VM flag as part of the command-line options:

java -XX:+UseZGC <java_application>

However, before JDK 15, ZGC was an experimental feature, so we also need to add the -XX:+UnlockExperimentalVMOptions VM flag to use it when running our application:

java -XX:+UnlockExperimentalVMOptions -XX:+UseZGC <java_application>

That’s it! We can use one of these approaches to enable ZGC. However, using the latest LTS version of JDK is usually recommended to benefit from the latest fixes and features.
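To verify which collector a running JVM actually uses, we can list the garbage collector MXBeans. The sketch below relies on the standard `java.lang.management` API; the check that ZGC’s bean names start with "ZGC" is an assumption about naming that may differ between JDK versions:

```java
import java.lang.management.GarbageCollectorMXBean;
import java.lang.management.ManagementFactory;
import java.util.List;
import java.util.stream.Collectors;

public class ActiveGcCheck {

    // Names of the collectors registered in the current JVM
    static List<String> collectorNames() {
        return ManagementFactory.getGarbageCollectorMXBeans().stream()
            .map(GarbageCollectorMXBean::getName)
            .collect(Collectors.toList());
    }

    // Assumption: ZGC registers its beans under names starting with "ZGC"
    static boolean looksLikeZgc(List<String> names) {
        return names.stream().anyMatch(name -> name.startsWith("ZGC"));
    }

    public static void main(String[] args) {
        List<String> names = collectorNames();
        System.out.println(names + " -> ZGC active: " + looksLikeZgc(names));
    }
}
```

Running this class once with and once without the -XX:+UseZGC flag shows the difference in the reported collector names.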

5. Latest Features

In this section, let’s get to know some of the remarkable features of ZGC introduced in the recent versions of JDK.

5.1. Heap on NVRAM

Over the last decade, NVRAM (Non-volatile RAM) technology advancements have made it faster and cheaper. So, placing the entire Java heap on NVRAM is a cost-effective option for many workloads.

With the implementation of JEP-316, we can specify an alternate memory-device path for the allocation of Java heap space from JDK 10 onwards:

-XX:AllocateHeapAt=<path>

The good news is that ZGC has added support for NVRAM heap allocation with JDK 15.

5.2. Sub-Millisecond Max Pause Times

One of the goals for the ZGC project was to minimize garbage collection (GC) pause times, initially targeting 10ms. The goal was eventually realized with JDK 16, where ZGC now has O(1) pause times of under 1ms that don’t increase with the heap or root-set size.

The ZGC team implemented a mechanism called the Stack Watermark Barrier to achieve this goal. This mechanism enables concurrent scanning of thread stacks while the Java application continues to run.

5.3. Compressed Class Pointers and Class Data Sharing

The Compressed Class Pointers feature reduces heap usage by compressing the size of object headers in HotSpot, allowing the class pointer field to be 32 bits instead of 64 bits. Previously, this feature required enabling Compressed Oops as well, but in JDK 15, the dependency was broken, allowing ZGC to work with Compressed Class Pointers independently.

Additionally, Class Data Sharing, which reduces startup time and memory footprint, now works with ZGC even when the Compressed Oops feature is disabled.

5.4. Dynamic Number of GC Threads

JDK’s -XX:+UseDynamicNumberOfGCThreads option enables the garbage collector to dynamically adjust the number of GC threads based on workload and system conditions. With ZGC’s support for this feature in JDK 17, it optimizes thread usage to collect garbage efficiently without excessive CPU consumption, ensuring more CPU time is available for Java threads.

Moreover, the -XX:ConcGCThreads option sets the maximum number of threads used by ZGC when used in conjunction with -XX:+UseDynamicNumberOfGCThreads.

5.5. Fast JVM Termination

In some cases, terminating a Java process using ZGC could take a while due to coordination with the garbage collector. However, in JDK 17, ZGC was improved to quickly reach a safe state on demand by aborting ongoing garbage collection cycles. As a result, terminating a JVM running ZGC is now nearly instantaneous.

6. Conclusion

In this article, we saw that ZGC intends to support large heap sizes with low application pause times.

To reach this goal, it uses techniques such as colored 64-bit references, load barriers, relocation, and remapping.