1. Overview

Documenting APIs is an essential part of building applications. It’s a shared contract that we have with our clients. Moreover, it documents in detail how our integration points work. The documentation should be easy to access, understand and implement.

In this tutorial, we’ll look at Springwolf for documenting event-driven Spring Boot services. Springwolf implements the AsyncAPI specification, an adaptation of the OpenAPI specification for event-driven APIs. Springwolf is protocol-agnostic and covers the Spring Kafka, Spring RabbitMQ, and Spring Cloud Stream implementations.

With Spring Kafka as our event-driven system, Springwolf generates an AsyncAPI document from our code. Some consumers are auto-detected, while we provide the remaining information manually.

2. Setting up Springwolf

To get started with Springwolf, we add the dependency and configure it.

2.1. Adding the Dependency

Assuming we have a running Spring application with Spring Kafka, we add springwolf-kafka to our Maven project as a dependency in the pom.xml file:

    <dependency>
        <groupId>io.github.springwolf</groupId>
        <artifactId>springwolf-kafka</artifactId>
        <version>0.14.0</version>
    </dependency>

The latest version can be found on Maven Central, and support for other bindings besides Spring Kafka is mentioned on the project’s website.

2.2. application.properties Configuration

In the most basic form, we add the following Springwolf configuration to our application.properties:

    # Springwolf Configuration
    springwolf.docket.base-package=com.baeldung.boot.documentation.springwolf.adapter
    springwolf.docket.info.title=${spring.application.name}
    springwolf.docket.info.version=1.0.0
    springwolf.docket.info.description=Baeldung Tutorial Application to Demonstrate AsyncAPI Documentation using Springwolf

    # Springwolf Kafka Configuration
    springwolf.docket.servers.kafka.protocol=kafka
    springwolf.docket.servers.kafka.url=localhost:9092

The first block sets the general Springwolf configuration. This includes the base-package, which is used by Springwolf for the auto-detection of listeners. Also, we set general information under the docket configuration key, which appears in the AsyncAPI document.

Then, we set the configuration specific to springwolf-kafka. Again, this appears in the AsyncAPI document.

2.3. Verification

Now, we are ready to run our application. After the application has started, the AsyncAPI document is available at the /springwolf/docs path by default:

http://localhost:8080/springwolf/docs
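As a quick check, the document can also be fetched programmatically. The following is a minimal sketch using the JDK's built-in HTTP client. The class name SpringwolfDocsClient and its methods are hypothetical, and the host and port assume the local setup above:

```java
import java.io.IOException;
import java.net.URI;
import java.net.http.HttpClient;
import java.net.http.HttpRequest;
import java.net.http.HttpResponse;

class SpringwolfDocsClient {

    // Default path taken from the text above; host and port are assumptions for a local run.
    static final String DOCS_PATH = "/springwolf/docs";

    static URI docsUri(String host, int port) {
        return URI.create("http://" + host + ":" + port + DOCS_PATH);
    }

    // Performs a plain GET and returns the response body (the AsyncAPI document as JSON).
    static String fetchDocs(String host, int port) throws IOException, InterruptedException {
        HttpRequest request = HttpRequest.newBuilder(docsUri(host, port)).GET().build();
        HttpResponse<String> response = HttpClient.newHttpClient()
            .send(request, HttpResponse.BodyHandlers.ofString());
        if (response.statusCode() != 200) {
            throw new IOException("Unexpected status: " + response.statusCode());
        }
        return response.body();
    }
}
```

Calling SpringwolfDocsClient.fetchDocs("localhost", 8080) while the application is running should return the JSON document discussed in the next section.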

3. The AsyncAPI Document

The AsyncAPI document follows a structure similar to that of an OpenAPI document. The full specification is available on the AsyncAPI website. For brevity, we’ll look only at the key sections and a subset of their properties.

In the following subsections, we’ll look at the AsyncAPI document in JSON format in incremental steps. We start with the following structure:

    {
        "asyncapi": "2.6.0",
        "info": { ... },
        "servers": { ... },
        "channels": { ... },
        "components": { ... }
    }

3.1. info Section

The info section of the document contains information about the application itself. This includes at least the following fields: title, application version, and description.

Based on the information we added to the configuration, the following structure is created:

    "info": {
        "title": "Baeldung Tutorial Springwolf Application",
        "version": "1.0.0",
        "description": "Baeldung Tutorial Application to Demonstrate AsyncAPI Documentation using Springwolf"
    }

3.2. servers Section

Similarly, the servers section contains information about our Kafka broker and is based on the application.properties configuration above:

    "servers": {
        "kafka": {
            "url": "localhost:9092",
            "protocol": "kafka"
        }
    }

3.3. channels Section

This section is empty at this point because we haven’t configured any consumers or producers in our application yet. After configuring them in a later section, we’ll see the following structure:

    "channels": {
        "my-topic-name": {
            "publish": {
                "message": {
                    "title": "IncomingPayloadDto",
                    "payload": {
                        "$ref": "#/components/schemas/IncomingPayloadDto"
                    }
                }
            }
        }
    }

The generic term channels refers to topics in Kafka terminology.

Each topic may provide two operations: publish and/or subscribe. Notably, the semantics may appear mixed up from the viewpoint of an application:

- publish messages to this channel so that our application can consume them.

- subscribe to this channel to receive messages from our application.

The operation object itself contains information like a description and a message. The message object contains information like a title and payload.

To avoid duplicating identical payload information in multiple topics and operations, AsyncAPI uses the $ref notation to indicate a reference in the components section of the AsyncAPI document.
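Because the publish and subscribe semantics described above are easy to mix up, it can help to write the mapping down explicitly. The following sketch is only an illustration: the ApplicationRole enum and the operationFor method are hypothetical helpers and not part of Springwolf or the AsyncAPI tooling:

```java
class AsyncApiOperations {

    // Role of our own application with respect to a channel (hypothetical helper type).
    enum ApplicationRole { CONSUMER, PRODUCER }

    // Returns the AsyncAPI 2.x operation keyword that documents the given role.
    static String operationFor(ApplicationRole role) {
        switch (role) {
            case CONSUMER:
                return "publish";   // others publish to the channel, our application consumes
            case PRODUCER:
                return "subscribe"; // others subscribe to the channel, our application produces
            default:
                throw new IllegalArgumentException("Unknown role: " + role);
        }
    }
}
```

In other words, a method that consumes from my-topic-name shows up under the publish operation of that channel, as in the JSON above.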

3.4. components Section

Again, this section is empty at this point but will have the following structure:

    "components": {
        "schemas": {
            "IncomingPayloadDto": {
                "type": "object",
                "properties": {
                    ...
                    "someString": {
                        "type": "string"
                    }
                },
                "example": {
                    "someEnum": "FOO2",
                    "someLong": 1,
                    "someString": "string"
                }
            }
        }
    }

The components section contains all the details of the $ref references, including #/components/schemas/IncomingPayloadDto. Besides the type of data and properties of the payload, the schema can also contain an example (JSON) of the payload.
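To make the $ref mechanism more concrete, here is a minimal sketch of how a local reference could be resolved against an already parsed document represented as nested maps. The RefResolver class is hypothetical, and the sketch deliberately ignores JSON Pointer escape sequences and remote references:

```java
import java.util.Map;

class RefResolver {

    // Resolves a local reference such as "#/components/schemas/IncomingPayloadDto"
    // by walking the nested maps of an already parsed document.
    @SuppressWarnings("unchecked")
    static Object resolve(Map<String, Object> document, String ref) {
        if (!ref.startsWith("#/")) {
            throw new IllegalArgumentException("Only local references are supported: " + ref);
        }
        Object current = document;
        for (String segment : ref.substring(2).split("/")) {
            if (!(current instanceof Map)) {
                throw new IllegalArgumentException("Cannot descend into segment: " + segment);
            }
            current = ((Map<String, Object>) current).get(segment);
            if (current == null) {
                throw new IllegalArgumentException("Unresolved segment: " + segment);
            }
        }
        return current;
    }
}
```

Resolving #/components/schemas/IncomingPayloadDto this way walks from components to schemas to the IncomingPayloadDto schema object shown above.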

4. Documenting Consumers

Springwolf auto-detects all @KafkaListener annotations, which are shown next. Additionally, we use the @AsyncListener annotation to provide more details manually.

4.1. Auto-detection of @KafkaListener Annotation

By using Spring-Kafka’s @KafkaListener annotation on a method, Springwolf finds the consumer within the base-package automatically:

    @KafkaListener(topics = TOPIC_NAME)
    public void consume(IncomingPayloadDto payload) {
        // ...
    }

Now, the AsyncAPI document contains the channel TOPIC_NAME with the publish operation and the IncomingPayloadDto schema, as we saw earlier.
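Conceptually, this kind of auto-detection boils down to scanning classes for annotated methods and reading the payload type from the method signature. The following sketch illustrates the idea with plain reflection. It is not Springwolf's actual implementation: the @Listener annotation is a hypothetical stand-in for @KafkaListener so that the example needs no Spring dependency, and the nested classes exist only for the demonstration:

```java
import java.lang.annotation.ElementType;
import java.lang.annotation.Retention;
import java.lang.annotation.RetentionPolicy;
import java.lang.annotation.Target;
import java.lang.reflect.Method;
import java.util.Map;
import java.util.TreeMap;

class ListenerScanSketch {

    // Hypothetical stand-in for @KafkaListener.
    @Retention(RetentionPolicy.RUNTIME)
    @Target(ElementType.METHOD)
    @interface Listener {
        String topic();
    }

    static class IncomingPayloadDto { }

    static class ExampleConsumer {
        @Listener(topic = "my-topic-name")
        public void consume(IncomingPayloadDto payload) { }

        // Not annotated, so the scan skips it.
        public void notAListener(String ignored) { }
    }

    // Maps each topic to the simple name of the first parameter of its listener method.
    static Map<String, String> scan(Class<?> type) {
        Map<String, String> channels = new TreeMap<>();
        for (Method method : type.getDeclaredMethods()) {
            Listener listener = method.getAnnotation(Listener.class);
            if (listener != null && method.getParameterCount() > 0) {
                channels.put(listener.topic(), method.getParameterTypes()[0].getSimpleName());
            }
        }
        return channels;
    }
}
```

Scanning ExampleConsumer yields a single entry that links my-topic-name to IncomingPayloadDto, which mirrors the channel and schema names in the generated document.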

4.2. Manually Documenting Consumer via @AsyncListener Annotation

Using auto-detection and @AsyncListener together may lead to duplicates. To be able to add more information manually, we disable the @KafkaListener auto-detection completely and add the following line to the application.properties file:

springwolf.plugin.kafka.scanner.kafka-listener.enabled=false

Next, we add the Springwolf @AsyncListener annotation to the same method and provide additional information for the AsyncAPI document:

    @KafkaListener(topics = TOPIC_NAME)
    @AsyncListener(
        operation = @AsyncOperation(
            channelName = TOPIC_NAME,
            description = "More details for the incoming topic"
        )
    )
    @KafkaAsyncOperationBinding
    public void consume(IncomingPayloadDto payload) {
        // ...
    }

Also, we add the @KafkaAsyncOperationBinding annotation to connect the generic @AsyncOperation annotation with the Kafka broker in the servers section. Kafka protocol-specific information is also set using this annotation.

After the change, the AsyncAPI document contains the updated documentation.

5. Documenting Producers

Producers are documented manually by using the Springwolf @AsyncPublisher annotation.

5.1. Manually Documenting Producers via @AsyncPublisher Annotation

Similar to the @AsyncListener annotation, we add the @AsyncPublisher annotation to the publisher method and add the @KafkaAsyncOperationBinding annotation as well:

    @AsyncPublisher(
        operation = @AsyncOperation(
            channelName = TOPIC_NAME,
            description = "More details for the outgoing topic"
        )
    )
    @KafkaAsyncOperationBinding
    public void publish(OutgoingPayloadDto payload) {
        kafkaTemplate.send(TOPIC_NAME, payload);
    }

Based on this, Springwolf adds a subscribe operation for the TOPIC_NAME channel to the channels section, using the information provided above. The payload type is extracted from the method signature in the same way as for @AsyncListener.

6. Enhancing the Documentation

The AsyncAPI specification covers even more features than we have covered above. Next, we document the default Spring Kafka header __TypeId__ and improve the documentation of the payload.

6.1. Adding Kafka Headers

When running a native Spring Kafka application, Spring Kafka automatically adds the header __TypeId__ to assist in the deserialization of the payload in the consumer.

We add the __TypeId__ header to the documentation by setting the headers field on the @AsyncOperation of the @AsyncListener (or @AsyncPublisher) annotation:

    @AsyncListener(
        operation = @AsyncOperation(
            ...,
            headers = @AsyncOperation.Headers(
                schemaName = "SpringKafkaDefaultHeadersIncomingPayloadDto",
                values = {
                    // this header is generated by Spring by default
                    @AsyncOperation.Headers.Header(
                        name = DEFAULT_CLASSID_FIELD_NAME,
                        description = "Spring Type Id Header",
                        value = "com.baeldung.boot.documentation.springwolf.dto.IncomingPayloadDto"
                    ),
                }
            )
        )
    )

Now, the AsyncAPI document contains a new field headers as part of the message object.
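To illustrate what the documented header looks like on the wire, the sketch below reads the type id from a set of record headers. It assumes that the header value carries the fully qualified class name as UTF-8 encoded bytes. The TypeIdHeaderSketch class and the plain map used to model the headers are hypothetical simplifications, not Kafka or Spring Kafka types:

```java
import java.nio.charset.StandardCharsets;
import java.util.Map;

class TypeIdHeaderSketch {

    static final String TYPE_ID_HEADER = "__TypeId__";

    // Kafka header values are raw bytes; this sketch assumes they hold UTF-8 text.
    // Returns null when the header is absent.
    static String typeIdOf(Map<String, byte[]> headers) {
        byte[] value = headers.get(TYPE_ID_HEADER);
        return value == null ? null : new String(value, StandardCharsets.UTF_8);
    }
}
```

A consumer in another language or framework could use the same header to decide which payload schema from the AsyncAPI document applies to a message.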

6.2. Adding Payload Details

We use the Swagger @Schema annotation to provide additional information about the payload. In the following code snippet, we set the description, an example value, and whether the field is required:

    @Schema(description = "Outgoing payload model")
    public class OutgoingPayloadDto {

        @Schema(description = "Foo field", example = "bar", requiredMode = NOT_REQUIRED)
        private String foo;

        @Schema(description = "IncomingPayload field", requiredMode = REQUIRED)
        private IncomingPayloadDto incomingWrapped;
    }

Based on this, we see the enriched OutgoingPayloadDto schema in the AsyncAPI document:

    "OutgoingPayloadDto": {
        "type": "object",
        "description": "Outgoing payload model",
        "properties": {
            "incomingWrapped": {
                "$ref": "#/components/schemas/IncomingPayloadDto"
            },
            "foo": {
                "type": "string",
                "description": "Foo field",
                "example": "bar"
            }
        },
        "required": [
            "incomingWrapped"
        ],
        "example": {
            "incomingWrapped": {
                "someEnum": "FOO2",
                "someLong": 5,
                "someString": "some string value"
            },
            "foo": "bar"
        }
    }
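The required list in the schema tells clients which fields a payload must contain. As a rough illustration of how a client might use it, the following sketch reports the required fields that are missing from a payload modeled as a map. The RequiredFieldsSketch class is hypothetical and covers only this one aspect of schema validation:

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;

class RequiredFieldsSketch {

    // Returns the names from the schema's "required" list that the payload does not contain.
    static List<String> missingRequired(List<String> required, Map<String, Object> payload) {
        List<String> missing = new ArrayList<>();
        for (String name : required) {
            if (!payload.containsKey(name)) {
                missing.add(name);
            }
        }
        return missing;
    }
}
```

For the schema above, a payload that contains only foo would be reported as missing incomingWrapped, while foo itself may be omitted.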

The full AsyncAPI document of our application is available in the linked example project.

7. Using Springwolf UI

Springwolf has its own UI, although any AsyncAPI-conforming document renderer can be used.

7.1. Adding the springwolf-ui Dependency

To use springwolf-ui, we add the dependency to our pom.xml, rebuild and restart our application:

    <dependency>
        <groupId>io.github.springwolf</groupId>
        <artifactId>springwolf-ui</artifactId>
        <version>0.8.0</version>
    </dependency>

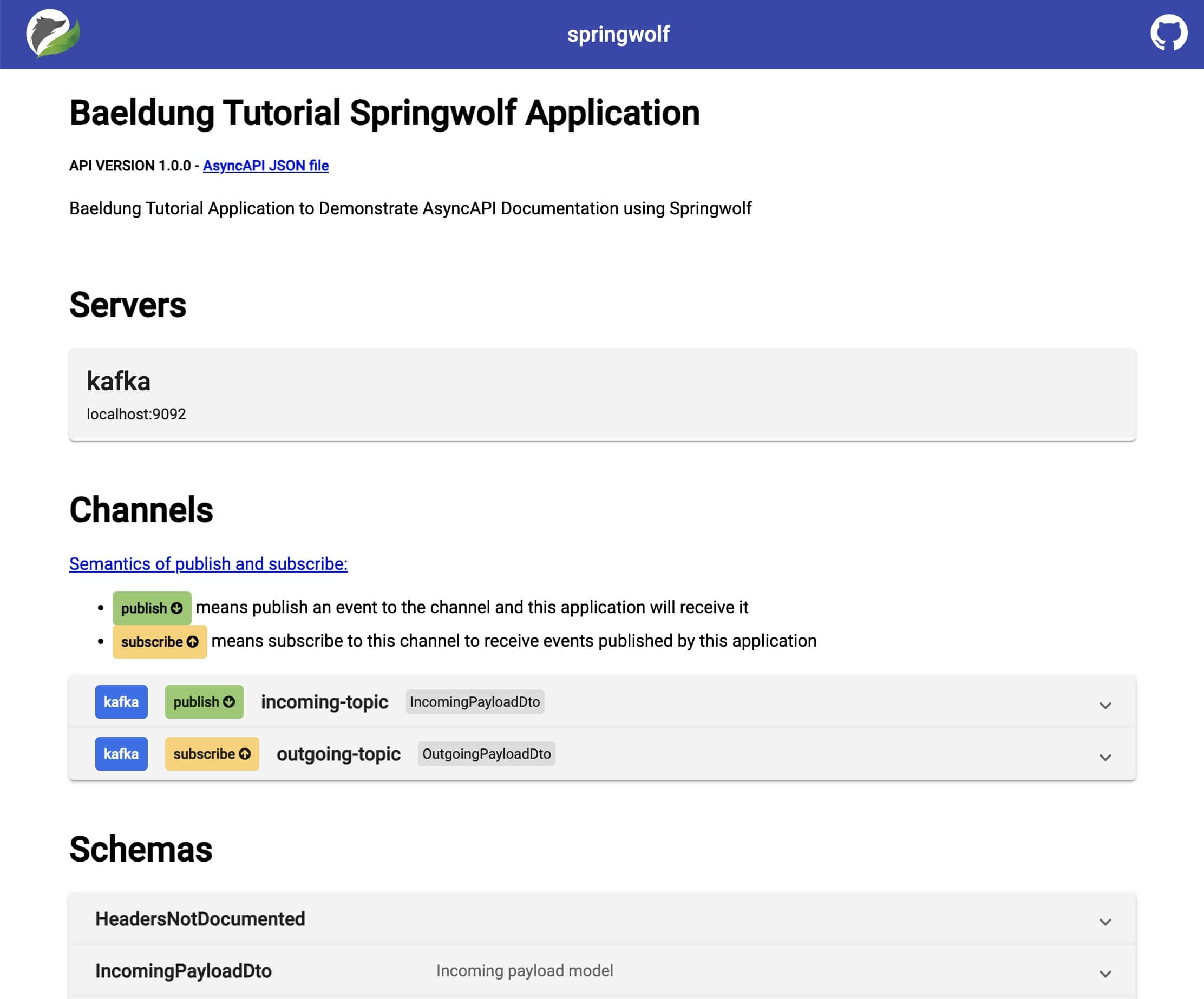

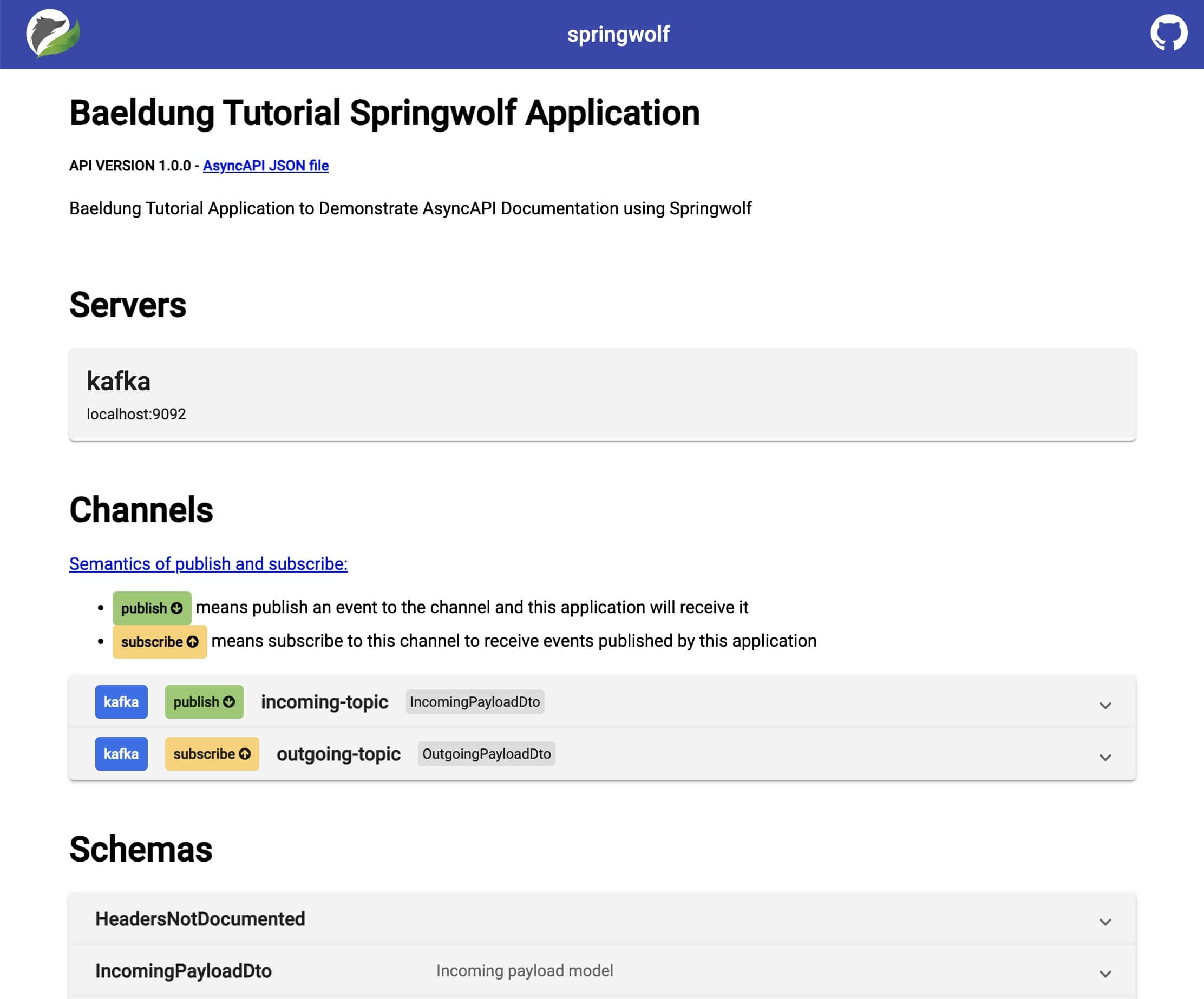

7.2. Viewing the AsyncAPI Document

Now, we open the documentation in our browser by visiting http://localhost:8080/springwolf/asyncapi-ui.html.

The webpage has a structure similar to that of the AsyncAPI document and displays information about the application as well as details about the servers, channels, and schemas:

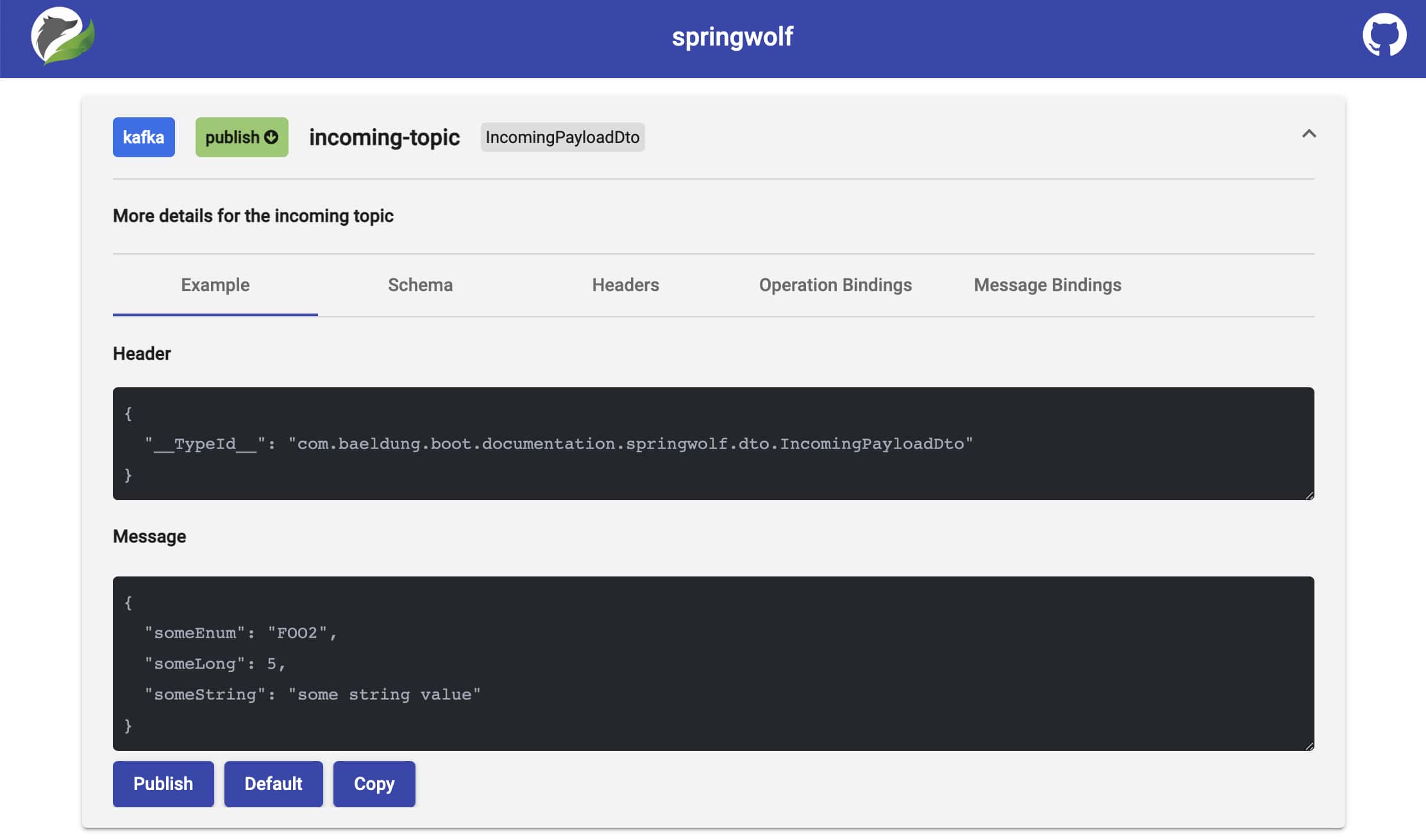

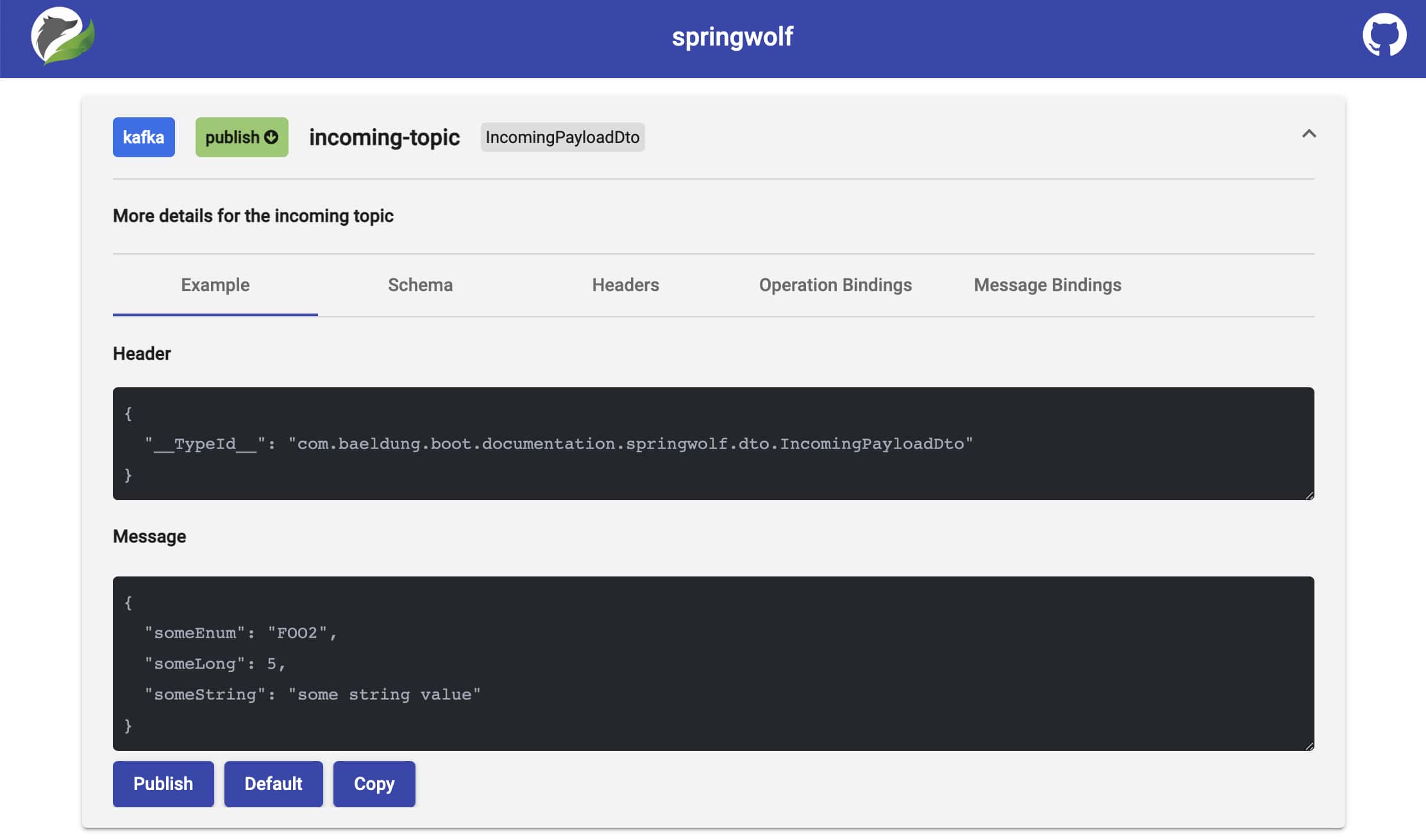

7.3. Publishing Messages

Springwolf allows us to publish messages directly from the browser. After expanding a channel, clicking the Publish button puts a message on Kafka. The message binding (including the Kafka message key), headers, and message are adjustable as needed.

Due to security concerns, this feature is disabled by default. To enable publishing, we add the following line to our application.properties file:

springwolf.plugin.kafka.publishing.enabled=true

8. Conclusion

In this article, we have set up Springwolf in an existing Spring Boot Kafka application.

With consumer auto-detection, Springwolf generates an AsyncAPI-conformant document automatically. We then enhanced the documentation through manual configuration.

Apart from downloading the AsyncAPI document through the provided REST endpoint, we used springwolf-ui to view the documentation in a browser.

As always, the source code for the examples is available over on GitHub.