1. Overview

In this tutorial, we’ll discuss three popular data compression techniques: zlib, gzip, and ZIP. We’ll also present a comparison between them.

2. Introduction to Data Compression

Data compression is the process of reducing the size of a file using a compression algorithm. Different techniques achieve this, including encoding, restructuring, and modifying the data. The main goal is to reduce the size while preserving the information we care about in the original file. After compressing a file, we apply a decoding algorithm to regenerate the original file.

Why do we need to compress files? In the early days of the internet, the amount of data that could be sent was limited, so we couldn’t send large files. To overcome this issue, mathematicians came up with algorithms that rearrange the bits and bytes of a file so that it takes up less space. However, these compression algorithms came with a trade-off.

When a user sends compressed data over the internet, the file received may or may not contain exactly the same data as the original file. Hence, we can divide data compression algorithms into two broad categories: lossless and lossy.

Lossy compression reduces the file size by discarding information that is considered less relevant. Therefore, the reconstructed file isn’t identical to the original, and we can use this type of compression only when perfect reconstruction of the data isn’t required.

The advantage of lossy compression is the significant reduction of the size of files. Additionally, it’s widely used to compress audio and video files.

In lossless compression, the size of the file is reduced by removing redundancies in the data. Therefore, the actual information remains the same, and the original file can be reconstructed bit for bit. Formats such as PNG and FLAC use lossless compression.

The size reduction is typically smaller than with lossy compression. However, the main advantage of lossless compression is that it reduces the size while keeping the data intact. Therefore, we choose lossless over lossy compression when we can’t afford to lose any information in a file.
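To make the idea of removing redundancy concrete, here’s a minimal sketch of run-length encoding. It’s a simple lossless scheme chosen only for illustration and is not the algorithm behind zlib, gzip, or ZIP. The function names and the sample string are our own assumptions:

```python
from itertools import groupby


def rle_encode(text: str) -> list[tuple[str, int]]:
    """Collapse each run of identical characters into a (char, count) pair."""
    return [(char, len(list(run))) for char, run in groupby(text)]


def rle_decode(pairs: list[tuple[str, int]]) -> str:
    """Expand the (char, count) pairs back into the original string."""
    return "".join(char * count for char, count in pairs)


original = "AAAABBBCCD"           # hypothetical sample input
encoded = rle_encode(original)
restored = rle_decode(encoded)

print(encoded)                    # [('A', 4), ('B', 3), ('C', 2), ('D', 1)]
print(restored == original)       # True: nothing was lost
```

The decoder rebuilds the input exactly, which is the defining property of lossless compression.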

3. zlib

zlib is a free, open-source software library for compressing and decompressing data. It belongs to the lossless data compression category. Since zlib is a library and a stream format rather than a file archiver, it has no standard file extension, although .zlib is sometimes used by convention. Its compression ratio typically ranges between 2:1 and 5:1. Additionally, it provides ten compression levels, 0 through 9, and different levels trade compression ratio against speed.
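As a rough illustration of how the level affects the output size, the following sketch compresses the same input at a few levels using Python’s standard `zlib` module. The word list, seed, and input size are assumptions made for the example, and the exact numbers depend on the data:

```python
import random
import zlib

# Hypothetical sample input: pseudo-random words, fixed seed for repeatability.
words = ["zlib", "gzip", "zip", "deflate", "inflate", "huffman", "window", "stream"]
rng = random.Random(42)
data = " ".join(rng.choices(words, k=20_000)).encode("ascii")

sizes = {}
for level in (0, 1, 6, 9):
    # Level 0 stores the data uncompressed; higher levels search harder for matches.
    sizes[level] = len(zlib.compress(data, level))
    print(f"level {level}: {len(data)} -> {sizes[level]} bytes")
```

At level 0, the output is slightly larger than the input because the data is stored as-is plus a small amount of framing.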

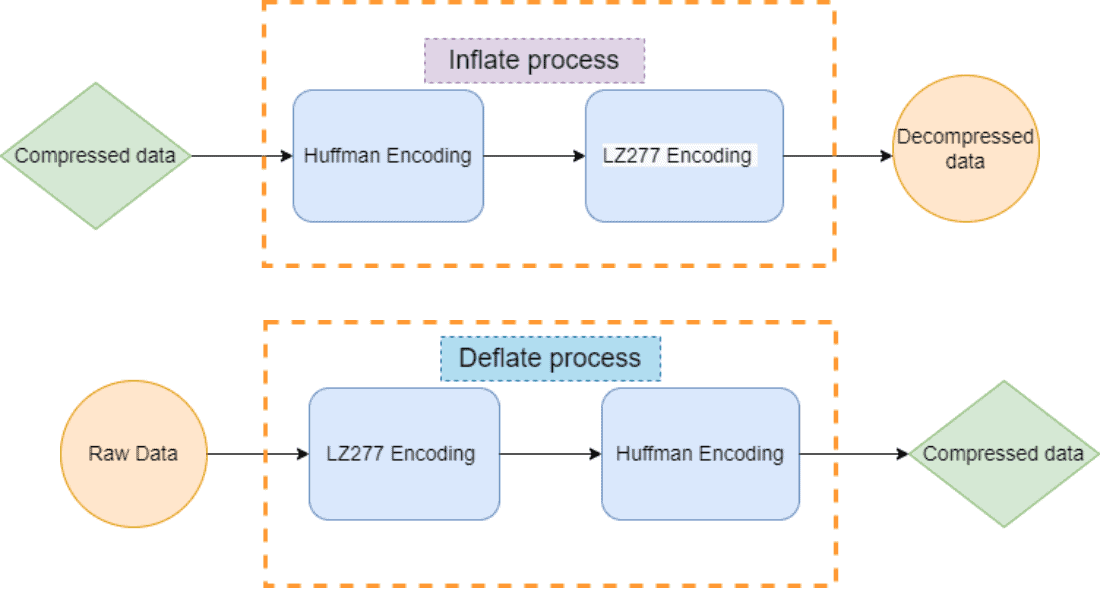

zlib uses the Deflate method for compression and the Inflate method for decompression. Deflate encodes the input into compressed data, and Inflate decodes the deflated bits back into the original data. As a result, zlib regenerates the original file without losing a single bit.
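The Deflate and Inflate steps can be sketched with the compressor and decompressor objects from Python’s standard `zlib` module. The sample payload is an assumption for illustration:

```python
import zlib

original = b"Deflate encodes, Inflate decodes. " * 100  # hypothetical payload

# Deflate: feed the data through a compressor object, then flush it.
compressor = zlib.compressobj()
compressed = compressor.compress(original) + compressor.flush()

# Inflate: reverse the process with a decompressor object.
decompressor = zlib.decompressobj()
restored = decompressor.decompress(compressed) + decompressor.flush()

print(len(original), "->", len(compressed), "bytes")
print(restored == original)  # True
```

For data that fits in memory, `zlib.compress()` and `zlib.decompress()` do the same in one call; the object-based form is handy when the data arrives in chunks.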

Therefore, Deflate and Inflate are inverses of each other. These methods represent the encoding and decoding algorithms in zlib. Both rely on LZ77 and Huffman coding. LZ77 works by maintaining a sliding window over recent data and replacing repeated sequences with references to earlier matches. Huffman coding is an entropy coding technique that uses a binary tree to assign shorter codes to more frequent symbols, producing an optimal prefix code.
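To show how a Huffman tree turns symbol frequencies into bit strings, here’s a minimal textbook sketch. It’s not zlib’s implementation, which builds length-limited codes over LZ77 output, and the function name and sample text are our own assumptions:

```python
import heapq
from collections import Counter


def huffman_codes(text: str) -> dict[str, str]:
    """Build a prefix code in which frequent symbols get shorter bit strings."""
    # Heap entries: (frequency, tie_breaker, {symbol: code_so_far}).
    heap = [(freq, i, {sym: ""}) for i, (sym, freq) in enumerate(Counter(text).items())]
    heapq.heapify(heap)
    if len(heap) == 1:  # degenerate input with one distinct symbol
        return {sym: "0" for sym in heap[0][2]}
    next_id = len(heap)
    while len(heap) > 1:
        # Merge the two least frequent subtrees under a new parent node.
        freq_a, _, left = heapq.heappop(heap)
        freq_b, _, right = heapq.heappop(heap)
        merged = {sym: "0" + code for sym, code in left.items()}
        merged.update({sym: "1" + code for sym, code in right.items()})
        heapq.heappush(heap, (freq_a + freq_b, next_id, merged))
        next_id += 1
    return heap[0][2]


text = "abracadabra"  # hypothetical sample input
codes = huffman_codes(text)
encoded = "".join(codes[ch] for ch in text)

print(codes)
print(len(text) * 8, "bits ->", len(encoded), "bits")  # 88 bits -> 23 bits
```

The most frequent symbol, `a`, receives a one-bit code, while the rare symbols receive three bits each.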

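The zlib format wraps the Deflate data in a small header and an Adler-32 checksum that guards the integrity of the data. Its main drawback is that it stores no file metadata, such as the original file name or timestamp. Raw Deflate streams, which zlib can also produce, carry no checksum at all.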

4. gzip

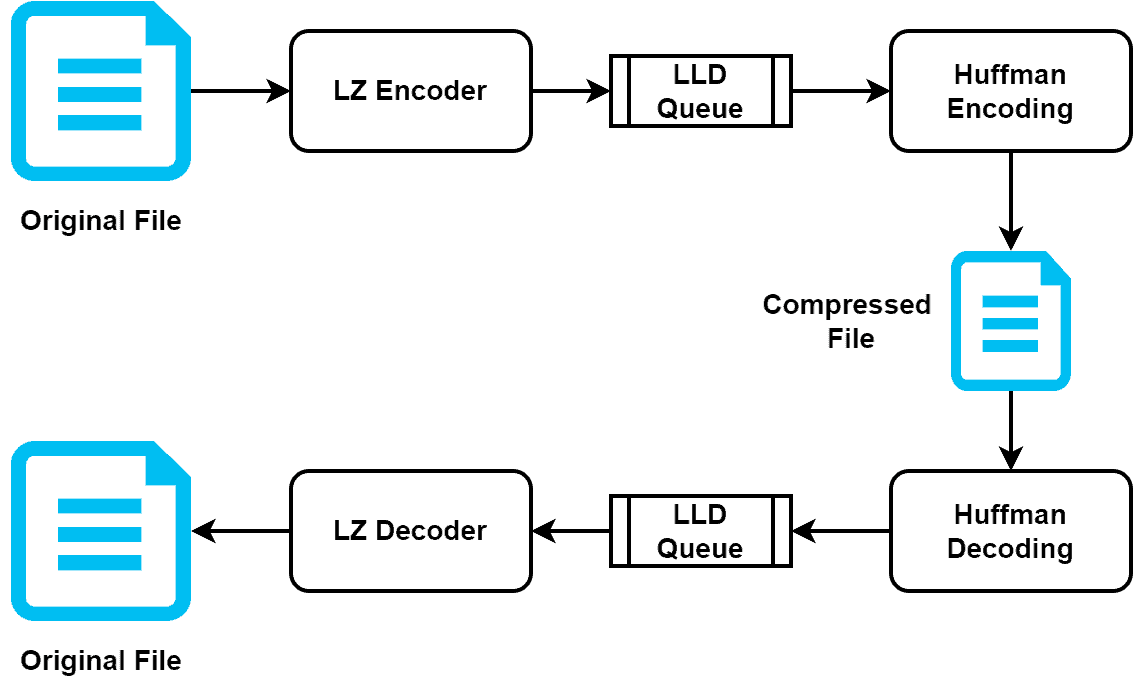

gzip is a popular data compression format and tool. It’s mainly used to compress a single file and is commonly found on Unix/Linux systems. Additionally, we can use gzip to compress HTTP content. Like zlib, gzip uses Deflate, that is, LZ77 and Huffman coding, for encoding and decoding files. Additionally, it uses a literal and length/distance (LLD) queue to store the intermediate results.

When we have a single large file, gzip is a good fit, since both compression and decompression are fast. gzip is also a common choice for compressing data sent over the internet: it reduces the size of text-based content significantly and therefore uses less network bandwidth.

The loading time of a website has a crucial impact on its traffic. In particular, the sales of e-commerce websites depend heavily on loading time. Because gzip reduces the size of transferred files, and therefore the network bandwidth they need, it helps pages load faster.
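As a rough sketch of the bandwidth saving, we can compress a response body with Python’s standard `gzip` module. The HTML fragment is a made-up payload, and real pages are usually less repetitive, so the saving shown here is optimistic:

```python
import gzip

# Hypothetical response body.
body = ("<li class='product'>Sample item</li>\n" * 500).encode("utf-8")

compressed = gzip.compress(body)        # what a server sends with Content-Encoding: gzip
restored = gzip.decompress(compressed)  # what the client does on receipt

saved = 100 * (1 - len(compressed) / len(body))
print(f"{len(body)} -> {len(compressed)} bytes ({saved:.1f}% smaller)")
print(restored == body)  # True
```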

gzip typically produces files with the .gz extension, or .tgz (short for .tar.gz) for gzip-compressed tar archives. Additionally, zlib-based gzip implementations provide ten compression levels, 0 through 9, while the gzip command-line tool accepts levels 1 through 9. Level 0 performs no compression and is the fastest, whereas level 9 compresses the most and is the slowest. We can set the level manually, and the gzip tool uses level 6 by default. The level affects only compression; decompression works the same way for every level.
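Here’s a minimal sketch of writing and reading a .gz file with an explicit level using Python’s `gzip.open()`. The file name and contents are assumptions for illustration. Note that Python’s `gzip` module defaults to level 9, unlike the command-line tool, so we pass the level explicitly:

```python
import gzip
import os
import tempfile

data = b"log line: request handled in 12 ms\n" * 1000  # hypothetical contents

with tempfile.TemporaryDirectory() as tmp:
    path = os.path.join(tmp, "sample.log.gz")  # hypothetical file name
    # compresslevel accepts 0 through 9 in Python's gzip module.
    with gzip.open(path, "wb", compresslevel=6) as fh:
        fh.write(data)
    size_on_disk = os.path.getsize(path)
    # Reading needs no level: the decoder handles any of them.
    with gzip.open(path, "rb") as fh:
        restored = fh.read()

print(len(data), "->", size_on_disk, "bytes")
print(restored == data)  # True
```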

gzip not only compresses the given file but also saves information about it, such as the original file name and modification time. Additionally, compared to zlib, gzip has more header fields in its file format. Compressing content on the fly also costs CPU time, which puts pressure on the server. Hence, if a large-scale website doesn’t use a sufficiently powerful server, it may slow down or even go down because of the extra load created by gzip.
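To see the information gzip keeps about the input file, we can write a gzip stream in memory and unpack its fixed 10-byte header as laid out in RFC 1952. The file name and timestamp below are hypothetical values chosen for the example:

```python
import gzip
import io
import struct

buffer = io.BytesIO()
# filename and mtime are made-up values for illustration.
with gzip.GzipFile(filename="report.txt", mode="wb", fileobj=buffer,
                   mtime=1_700_000_000) as fh:
    fh.write(b"quarterly numbers\n" * 100)
blob = buffer.getvalue()

# Fixed header: magic (2 bytes), method, flags, mtime (4 bytes), extra flags, OS.
magic, method, flags, mtime, _xfl, _os = struct.unpack("<2sBBIBB", blob[:10])

has_name = bool(flags & 0x08)  # FNAME bit: a zero-terminated name follows the header
stored_name = blob[10:blob.index(b"\x00", 10)].decode("latin-1") if has_name else ""

print(magic.hex(), method, mtime, stored_name)
```

The method value 8 identifies Deflate, which is the only compression method gzip uses in practice.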

5. ZIP

ZIP is one of the most common and popular archive formats. It belongs to the lossless data compression category. ZIP supports various compression methods, including Deflate, Deflate64, bzip2, LZMA, and WavPack. However, the most widely used method in ZIP is Deflate.

The big advantage of ZIP is that it can take multiple files and pack them into a single compressed archive. Similar to gzip, Deflate-based ZIP tools typically offer ten compression levels. Additionally, ZIP stores a CRC-32 checksum for each file to maintain data integrity.
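The following sketch packs two files into one archive with Python’s standard `zipfile` module and then checks the stored CRC-32 values. The file names and contents are assumptions, and an in-memory buffer stands in for an archive on disk:

```python
import io
import zipfile
import zlib

# Hypothetical file names and contents.
files = {
    "notes.txt": b"first file " * 200,
    "data.csv": b"id,value\n" + b"1,42\n" * 300,
}

buffer = io.BytesIO()
with zipfile.ZipFile(buffer, "w", compression=zipfile.ZIP_DEFLATED,
                     compresslevel=9) as archive:
    for name, content in files.items():
        archive.writestr(name, content)

with zipfile.ZipFile(buffer) as archive:
    names = archive.namelist()
    bad_member = archive.testzip()  # None when every CRC-32 check passes
    crc_matches = all(info.CRC == zlib.crc32(files[info.filename])
                      for info in archive.infolist())
    for info in archive.infolist():
        print(info.filename, info.file_size, "->", info.compress_size, "bytes")

print(names, bad_member, crc_matches)
```

Each member is compressed independently, so a single file can be extracted without decompressing the rest of the archive.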

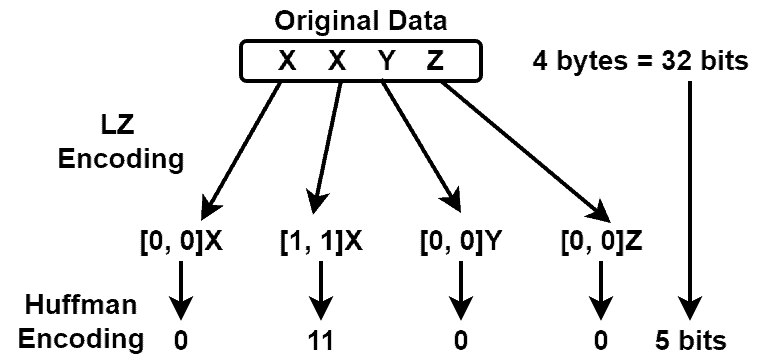

Let’s take a look at an example:

Here, the size of the original file is 32 bits. After applying ZIP, the size becomes 5 bits.

In the early versions of ZIP, the size of a file was limited to 4 GB. With the ZIP64 extension, a file can be up to 16 exabytes. The main disadvantage of ZIP is that it gains little on data that’s already compressed, such as MP3 and JPEG files.
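We can approximate this limitation with a small experiment. In the sketch below, random bytes stand in for an already-compressed payload such as MP3 or JPEG data; this substitution, along with the file names, is an assumption made for the example:

```python
import io
import os
import zipfile

text = b"plain text compresses well because it repeats itself. " * 2000
# Random bytes have no redundancy left, much like already-compressed media.
noise = os.urandom(len(text))

buffer = io.BytesIO()
with zipfile.ZipFile(buffer, "w", compression=zipfile.ZIP_DEFLATED) as archive:
    archive.writestr("text.txt", text)
    archive.writestr("noise.bin", noise)

with zipfile.ZipFile(buffer) as archive:
    ratios = {info.filename: info.compress_size / info.file_size
              for info in archive.infolist()}

for name, ratio in ratios.items():
    print(f"{name}: compressed to {ratio:.1%} of the original size")
```

The text entry shrinks to a small fraction of its size, while the random entry stays at roughly its original size.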

6. Comparison

Let’s see comparisons between zlib, gzip, and ZIP:
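One aspect that’s easy to measure ourselves is the container overhead each format adds around the same Deflate data. The sketch below is an illustration under our own assumptions (sample payload, level 6, a single archive member named `payload.txt`), not a benchmark:

```python
import io
import zipfile
import zlib

data = b"one payload, three containers. " * 1000  # hypothetical payload


def deflate(payload: bytes, wbits: int) -> bytes:
    """Compress with Deflate; wbits selects the container (15 = zlib, 31 = gzip)."""
    comp = zlib.compressobj(6, zlib.DEFLATED, wbits)
    return comp.compress(payload) + comp.flush()


zip_buffer = io.BytesIO()
with zipfile.ZipFile(zip_buffer, "w", zipfile.ZIP_DEFLATED, compresslevel=6) as archive:
    archive.writestr("payload.txt", data)

sizes = {
    "zlib": len(deflate(data, 15)),
    "gzip": len(deflate(data, 31)),
    "zip": len(zip_buffer.getvalue()),
}

for name, size in sizes.items():
    print(f"{name}: {len(data)} -> {size} bytes")
```

The zlib stream is the smallest, gzip adds a somewhat larger header and trailer, and ZIP adds the most because it also stores per-file entries and a central directory.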

7. Conclusion

In this tutorial, we discussed three popular data compression techniques: zlib, gzip, and ZIP.

We also presented a comparison between them.