1. Introduction

In this tutorial, we’ll show how to find the pair of 2D points with the minimal Manhattan distance.

2. The Minimal Manhattan Distance

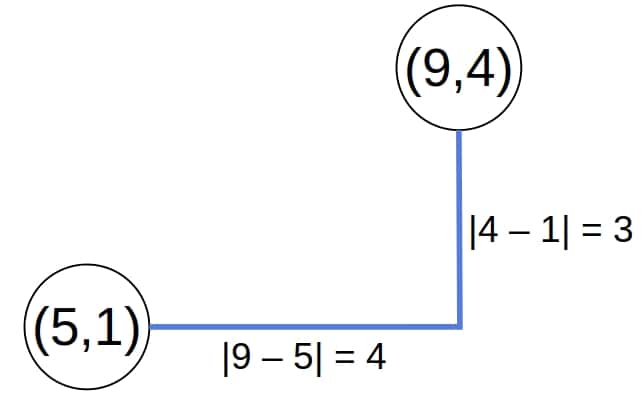

Let’s say that we have $n$ two-dimensional points $P = \{p_1, p_2, \ldots, p_n\}$, where $p_i = (x_i, y_i)$. We want to find the two closest per the Manhattan distance. It measures the length of the shortest rectilinear path between two points, that is, a path that contains only vertical and horizontal segments.

So, the formula for computing the Manhattan distance is:

$$d(p_i, p_j) = |x_i - x_j| + |y_i - y_j| \qquad (1)$$
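As a quick illustration of the formula, here is a minimal Python sketch. The function name `manhattan` and the representation of points as `(x, y)` tuples are assumptions made for this example only.

```python
def manhattan(p, q):
    """Manhattan distance between two 2D points given as (x, y) tuples."""
    return abs(p[0] - q[0]) + abs(p[1] - q[1])


# |1 - 4| + |2 - 6| = 3 + 4 = 7
print(manhattan((1, 2), (4, 6)))
```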

3. Algorithm

We can follow the brute-force approach and iterate over all pairs of points, calculating the distance between each two and returning the closest pair. However, that algorithm’s time complexity is quadratic.
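The brute-force approach can be sketched in a few lines of Python. This is only an illustration: the name `closest_pair_brute_force` is hypothetical, and points are assumed to be `(x, y)` tuples. It inspects all $n(n-1)/2$ pairs, which is where the quadratic running time comes from.

```python
from itertools import combinations


def manhattan(p, q):
    """Manhattan distance between two (x, y) tuples."""
    return abs(p[0] - q[0]) + abs(p[1] - q[1])


def closest_pair_brute_force(points):
    """Return (distance, p, q) for the closest pair; needs at least two points."""
    # combinations() yields every unordered pair exactly once.
    return min((manhattan(p, q), p, q) for p, q in combinations(points, 2))
```

Such a function is also handy as a reference for testing faster implementations on small inputs.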

Instead, we could design a more efficient algorithm using the divide-and-conquer strategy. Finding the closest pair is a fundamental problem of computational geometry, so it’s necessary to have a fast method for solving it.

3.1. The Divide-and-Conquer Approach

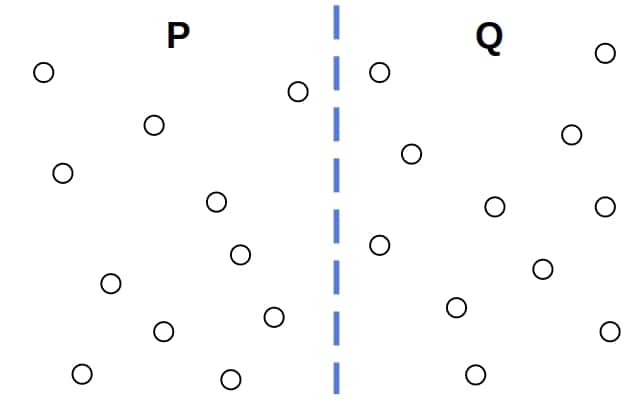

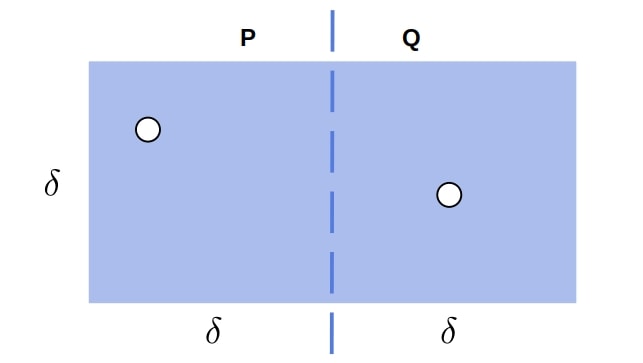

Let’s draw a vertical line through $P$ to split it into the left and right halves, $L$ and $R$.

Now, the closest pair in the whole $P$ is either the closest pair in $L$, the closest pair in $R$, or the closest pair whose one point is in $L$ and the other in $R$:

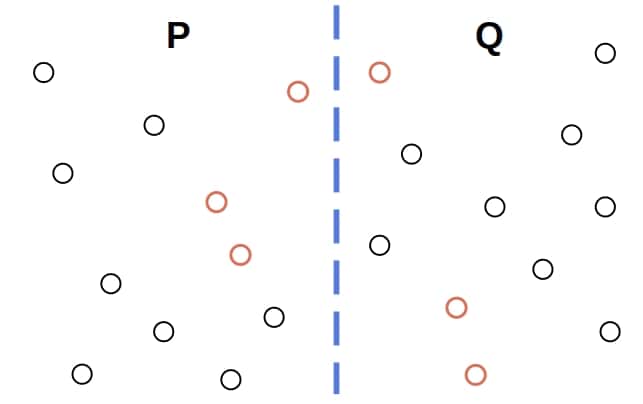

Therefore, to find the closest pair in $P$, we first need to determine the closest pairs in its left and right halves. That’s the step where we break the original problem into two smaller sub-problems. Let’s say that $\delta_L$ is the minimal distance in $L$ and $\delta_R$ is the minimal distance in $R$. With $\delta = \min\{\delta_L, \delta_R\}$, the closest two points in $P$ are at a distance shorter than $\delta$ only if they reside in the $2\delta$-wide strip along the dividing line.

So, the combination step consists of finding the strip’s minimal distance and comparing it to $\delta$.

That gives a recursive algorithm. As the base case, we’ll take a $P$ that contains at most three points and solve it by inspecting all its pairs, but there are other choices.

3.2. The Worst-Case Running Time Analysis

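Let $T(n)$ be the worst-case running time for the input with $n$ points, and let $f(n)$ denote the cost of finding the strip’s minimal distance and dividing $P$ into $L$ and $R$. Then, we have the following recurrence:

$$T(n) = 2T\left(\frac{n}{2}\right) + f(n) \qquad (2)$$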

Scanning through $L$ and $R$ to remove all the points not in the $2\delta$-wide strip takes $\Theta(n)$ time, so $f(n) \in \Omega(n)$. In the worst case, all the pairs $(p_i, p_j) \in L \times R$ can be in the strip, so an enumerative approach performs $\frac{n}{2} \cdot \frac{n}{2} = \frac{n^2}{4} \in \Theta(n^2)$ steps and the recurrence becomes:

$$T(n) = 2T\left(\frac{n}{2}\right) + \Theta(n^2)$$

Using the Master Theorem, we get $T(n) \in \Theta(n^2)$. But, that isn’t any better than the inefficient brute-force algorithm, and we didn’t even consider the cost of splitting $P$. Can we do anything to make the algorithm faster? Luckily, the points in the strip have a special structure that will enable us to find the strip’s minimal distance quickly. Indeed, if we implemented the algorithm so that $f(n) \in \Theta(n)$, the recurrence (2) would evaluate to an $O(n \log n)$ solution.
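For readers who want the intermediate steps, both results follow from the Master Theorem with $a = 2$ sub-problems of size $n/b = n/2$, so the watershed function is $n^{\log_b a} = n^{\log_2 2} = n$. The constants $\varepsilon$ and $c$ below are the usual ones from the theorem's statement:

$$
\begin{aligned}
f(n) \in \Theta(n^2):\quad & f(n) \in \Omega\left(n^{1+\varepsilon}\right) \text{ for } \varepsilon = 1, \quad 2 f\left(\tfrac{n}{2}\right) = \tfrac{1}{2} f(n) \le c\, f(n) \text{ with } c = \tfrac{1}{2} < 1 \\
& \Rightarrow\; T(n) \in \Theta(n^2) \\
f(n) \in \Theta(n):\quad & f(n) \in \Theta\left(n^{\log_2 2}\right) \\
& \Rightarrow\; T(n) \in \Theta(n \log n)
\end{aligned}
$$

The regularity check in the first case is written for $f(n) = k n^2$ with a constant $k > 0$, which is enough to illustrate why the quadratic term dominates.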

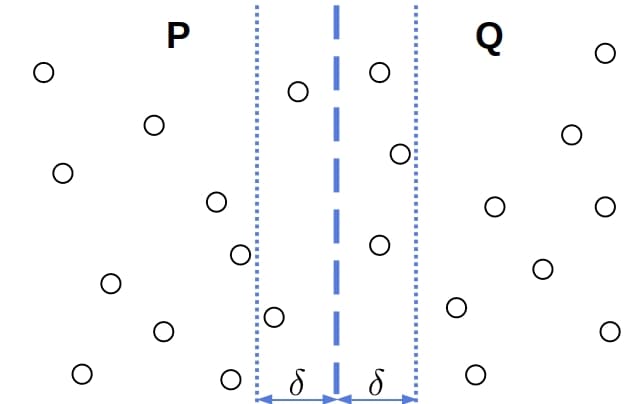

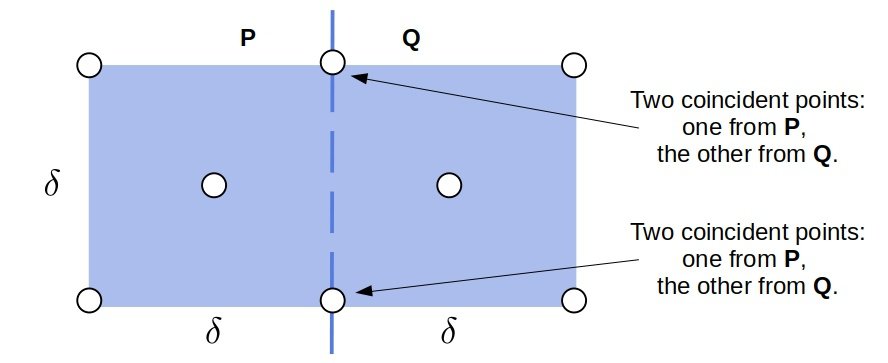

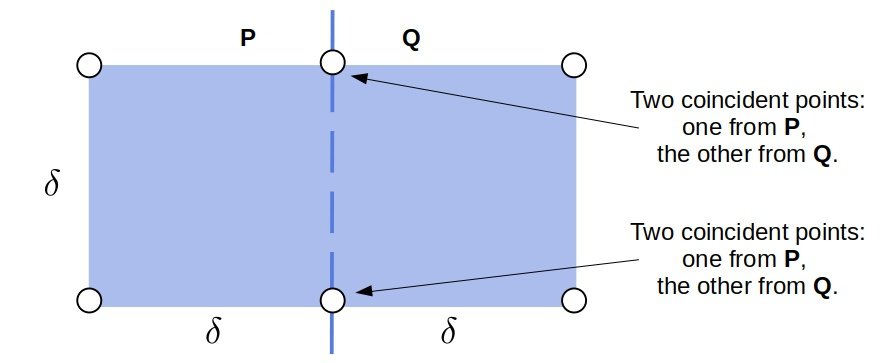

3.3. The Vertical Manhattan Strip Has a Special Structure

Let’s assume that the closest two points in $P$ are in the strip ($p_i \in L$ and $p_j \in R$). Because $d(p_i, p_j) < \delta$, we can be sure that vertically, they can’t be more than $\delta$ apart.

How many other points from $L$ and $R$ can be in the $2\delta \times \delta$ rectangle containing $p_i$ and $p_j$? Since all the points in $L$ are at distances $\geq \delta$ from one another, at most five points from $L$ can reside in the left half of the rectangle, and the same goes for $R$.
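To see that five points can indeed fit into one half, consider the four corners and the center of a $\delta \times \delta$ square. The following Python sketch checks that this configuration keeps every pair at a Manhattan distance of at least $\delta$. The helper name `min_pairwise_manhattan` and the choice $\delta = 2$ are assumptions made for the example; the check shows that five is attainable, not that six is impossible.

```python
from itertools import combinations


def min_pairwise_manhattan(points):
    """Smallest Manhattan distance over all pairs of (x, y) tuples."""
    return min(
        abs(px - qx) + abs(py - qy)
        for (px, py), (qx, qy) in combinations(points, 2)
    )


delta = 2  # chosen so that the center has integer coordinates
corners = [(0, 0), (delta, 0), (0, delta), (delta, delta)]
center = (delta // 2, delta // 2)
five_points = corners + [center]

# The center is delta/2 + delta/2 = delta away from each corner.
print(min_pairwise_manhattan(five_points) >= delta)
```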

So, if we sorted the points in the strip by their $y$-coordinates, the indices of $p_i$ and $p_j$ would differ by at most 9. Therefore, for each point in the strip, we need to check only the nine that follow it in the $y$-sorted array. As a result, we can find the strip’s minimal distance in linear time. But, if we sort the points in each recursive call, we’ll have $f(n) \in \Theta(n \log n)$ in the worst case, and we need $f(n)$ to be linear in $n$ for the whole algorithm to be log-linear.
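The nine-successor scan can be sketched as follows. This is a minimal Python illustration, not a definitive implementation: the name `strip_closest` and the `(distance, p, q)` tuple used for the best pair so far are assumptions, and the input is assumed to be already sorted by $y$.

```python
def manhattan(p, q):
    """Manhattan distance between two (x, y) tuples."""
    return abs(p[0] - q[0]) + abs(p[1] - q[1])


def strip_closest(strip_by_y, best):
    """Scan the y-sorted strip, comparing each point with its next nine.

    best is the (distance, p, q) triple found so far; the improved
    triple (or best itself) is returned.
    """
    for i, p in enumerate(strip_by_y):
        # The slice holds at most nine successors, so the scan is linear.
        for q in strip_by_y[i + 1 : i + 10]:
            d = manhattan(p, q)
            if d < best[0]:
                best = (d, p, q)
    return best
```

Since the inner loop runs at most nine times per point, the total work is at most $9m$ distance computations for a strip with $m$ points.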

3.4. Sorting

We achieve that by presorting. If we sorted $P$ by the $y$-axis beforehand, we could split the points into the $y$-sorted $L$ and $R$ in each call in linear time. Let’s denote the $y$-sorted copy of $P$ as $P_y$.

Since we partition by the points’ $x$-coordinates, we should also keep a copy of $P$ sorted by $x$ (let’s denote it as $P_x$). Then, we can use the middle index of $P_x$ to split $P$ into the left and right halves in $O(1)$ time. Before making the recursive calls, we need to split $P_x$ and $P_y$ into their $L$ and $R$ parts just as we split $P$ into $L$ and $R$. We do that in linear time by reversing the merge step of Merge sort.
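The splitting step can be sketched in Python as below. The function name `split_presorted` is hypothetical, and the sketch assumes the points are distinct `(x, y)` tuples so that set membership identifies the half a point belongs to. A single pass over the $y$-sorted list distributes its elements into two lists while preserving their order, which is the merge step run backwards.

```python
def split_presorted(points_by_x, points_by_y):
    """Split both presorted lists into left and right halves in linear time.

    Assumes distinct (x, y) tuples. Returns (left_x, right_x, left_y, right_y),
    where each *_y list is still sorted by y.
    """
    mid = len(points_by_x) // 2
    left_x, right_x = points_by_x[:mid], points_by_x[mid:]
    left_set = set(left_x)  # expected O(1) membership tests
    left_y, right_y = [], []
    for p in points_by_y:  # one pass keeps the y-order in both halves
        (left_y if p in left_set else right_y).append(p)
    return left_x, right_x, left_y, right_y
```

Using a hash set is a design choice for brevity; comparing each point against the dividing line (with a tie-breaking rule) would avoid the extra set.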

Presorting can be done in the $O(n \log n)$ worst-case time by Merge sort. With $f(n) \in \Theta(n)$, our algorithm also takes $O(n \log n)$ time, so the total complexity doesn’t suffer by presorting.

3.5. The Pseudocode

Finally, here’s the pseudocode of the whole algorithm:
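The steps from Sections 3.1 to 3.4 can be put together as in the following Python sketch. It is one possible rendering rather than a definitive implementation: the names `closest_pair` and `_closest` are illustrative, and the code assumes the input consists of distinct `(x, y)` tuples.

```python
from itertools import combinations


def manhattan(p, q):
    """Manhattan distance between two (x, y) tuples."""
    return abs(p[0] - q[0]) + abs(p[1] - q[1])


def closest_pair(points):
    """Return (distance, p, q) for the closest pair of distinct 2D points."""
    if len(points) < 2:
        raise ValueError("need at least two points")
    by_x = sorted(points)                              # presort by x
    by_y = sorted(points, key=lambda p: (p[1], p[0]))  # presort by y
    return _closest(by_x, by_y)


def _closest(by_x, by_y):
    n = len(by_x)
    if n <= 3:  # base case: inspect all pairs
        return min((manhattan(p, q), p, q) for p, q in combinations(by_x, 2))

    # Divide: split the x-sorted list in the middle and distribute
    # the y-sorted list accordingly (assumes distinct points).
    mid = n // 2
    left_x, right_x = by_x[:mid], by_x[mid:]
    line_x = right_x[0][0]  # x-coordinate of the dividing line
    left_set = set(left_x)
    left_y = [p for p in by_y if p in left_set]
    right_y = [p for p in by_y if p not in left_set]

    # Conquer: best pair within each half.
    best = min(_closest(left_x, left_y), _closest(right_x, right_y))
    delta = best[0]

    # Combine: keep only the points in the 2*delta-wide strip (already
    # y-sorted) and compare each with its next nine successors.
    strip = [p for p in by_y if abs(p[0] - line_x) < delta]
    for i, p in enumerate(strip):
        for q in strip[i + 1 : i + 10]:
            d = manhattan(p, q)
            if d < best[0]:
                best = (d, p, q)
    return best


print(closest_pair([(0, 0), (5, 5), (1, 1), (9, 0)]))  # (2, (0, 0), (1, 1))
```

When several pairs share the minimal distance, this sketch returns one of them; which one depends on tuple ordering and is not specified by the algorithm itself.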

3.6. What If We Didn’t Use the Manhattan Distance?

The only part where we use the properties of the Manhattan distance is the analysis of the maximal number of points we can fit into a rectangle centered at the vertical line so that the distance of each two from the same half is at least $\delta$.

If we use other distance functions, the rectangle’s structure will change. For example, the Euclidean distance admits at most 8 points.

So, we wouldn’t need to check more than seven points following each one in the filtered $P_y$.
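In code, switching the distance function touches only the distance call and the number of successors inspected in the strip. The Python sketch below makes both of them parameters; the names `strip_closest`, `dist`, and `window` are assumptions made for this illustration, and `best` is a `(distance, p, q)` triple for the best pair found so far.

```python
import math


def manhattan(p, q):
    return abs(p[0] - q[0]) + abs(p[1] - q[1])


def euclidean(p, q):
    return math.dist(p, q)  # available since Python 3.8


def strip_closest(strip_by_y, best, dist, window):
    """Scan a y-sorted strip, comparing each point with its next `window` points."""
    for i, p in enumerate(strip_by_y):
        for q in strip_by_y[i + 1 : i + 1 + window]:
            d = dist(p, q)
            if d < best[0]:
                best = (d, p, q)
    return best


strip = [(0, 0), (3, 4)]
start = (math.inf, None, None)
print(strip_closest(strip, start, manhattan, window=9))
print(strip_closest(strip, start, euclidean, window=7))
```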

4. Conclusion

In this article, we showed how to find the closest two points in a two-dimensional space endowed with the Manhattan distance.