1. Introduction

Applications that work with pictures and videos are becoming increasingly popular. Examples include face recognition, parking lot surveillance, and cancer detection, to mention a few.

Two fields develop new methods for these applications: computer vision and image processing.

In this tutorial, we’ll discuss the definitions of these two areas. We’ll also talk about their differences.

2. Computer Vision vs. Image Processing

Before focusing on their differences, let’s first define each field.

2.1. Image Processing

We can think of image processing as a black box that receives an image as input, transforms it internally, and returns a new image as output.

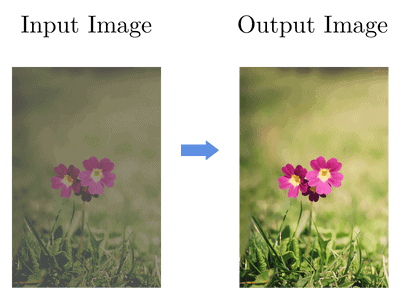

The transformations applied to the input image will vary depending on our needs. One simple example is to adjust the brightness and contrast of an image:

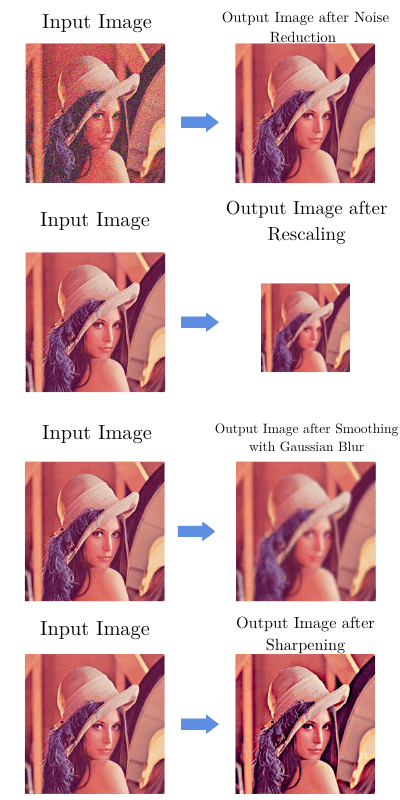
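As a rough illustration, a brightness and contrast adjustment can be sketched as a per-pixel linear transform. This is a minimal sketch, not a reference implementation: the function name `adjust_brightness_contrast`, its parameters, and the representation of a grayscale image as nested lists of values from 0 to 255 are all assumptions made for this example. In practice, we’d usually rely on an image library instead:

```python
from typing import List

Image = List[List[int]]  # assumed format: rows of grayscale pixels in 0..255


def adjust_brightness_contrast(image: Image, contrast: float = 1.0, brightness: int = 0) -> Image:
    """Return a new image where each pixel p becomes contrast * p + brightness.

    Results are rounded and clamped to the valid 0..255 range.
    """
    return [
        [max(0, min(255, round(contrast * pixel + brightness))) for pixel in row]
        for row in image
    ]


# Hypothetical 2x3 input image
original = [
    [10, 50, 90],
    [120, 160, 200],
]

# The input is left untouched; the output is a new image of the same size
brighter = adjust_brightness_contrast(original, contrast=1.5, brightness=20)
```

Note that both the input and the output are images of the same size, which matches the black-box view above.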

Other common operations include noise reduction, rescaling, smoothing, and sharpening.
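Smoothing and sharpening are often implemented by sliding a small kernel over the image. The sketch below assumes a grayscale image stored as nested lists and uses two textbook 3x3 kernels (a box blur and a basic sharpening kernel). The names `apply_kernel`, `BOX_BLUR`, and `SHARPEN` are hypothetical, and replicating edge pixels at the border is just one of several possible choices:

```python
from typing import List

Image = List[List[int]]      # assumed format: rows of grayscale pixels in 0..255
Kernel = List[List[float]]   # 3x3 filter weights

BOX_BLUR: Kernel = [[1 / 9] * 3 for _ in range(3)]        # averages the neighbourhood
SHARPEN: Kernel = [[0, -1, 0], [-1, 5, -1], [0, -1, 0]]   # boosts the centre against its neighbours


def apply_kernel(image: Image, kernel: Kernel) -> Image:
    """Filter an image with a 3x3 kernel and return a new image of the same size."""
    height, width = len(image), len(image[0])
    output = []
    for y in range(height):
        row = []
        for x in range(width):
            total = 0.0
            for ky in range(3):
                for kx in range(3):
                    # Clamp coordinates so border pixels reuse their nearest neighbour
                    sy = min(max(y + ky - 1, 0), height - 1)
                    sx = min(max(x + kx - 1, 0), width - 1)
                    total += kernel[ky][kx] * image[sy][sx]
            # Round and clamp to the valid pixel range
            row.append(max(0, min(255, round(total))))
        output.append(row)
    return output


# Hypothetical input: a single bright spot on a dark background
spot = [
    [0, 0, 0],
    [0, 90, 0],
    [0, 0, 0],
]
blurred = apply_kernel(spot, BOX_BLUR)    # spreads the spot over its neighbours
sharpened = apply_kernel(spot, SHARPEN)   # makes the spot stand out more
```

Both kernels are symmetric, so it doesn’t matter here whether we flip the kernel (convolution) or not (correlation).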

Simply put, in image processing, we always have an image as input and an image as output.

This area is crucial in a large number of fields. Usually, we use image-processing techniques as the first step in our applications.

For instance, we can apply a sharpening operation to a picture of cell samples to make the edges more evident. As a result, we’ll be able to isolate the cells with higher precision in later steps.

2.2. Computer Vision

When we need to recognize what is represented in an image or detect any type of pattern, that’s a job for computer vision algorithms.

As the name suggests, our aim is to replicate human vision. For example, we want our computer vision systems to recognize a bird in a tree just as humans can.

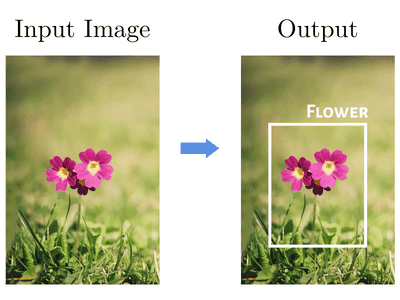

Let’s go back to the flower picture from the image-processing example, and suppose we’re building an object detection application, which is a computer vision task.

If we use the same image as input, the output won’t be a new image, as it was in image processing.

Instead, we’ll get a bounding box and a label for the detected object.
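To make the difference in output concrete, here’s a toy sketch in which the result is a small record with a label and box coordinates rather than an image. It’s not a real object detector: `detect_bright_object` simply draws a box around all pixels brighter than a threshold, and the `Detection` class, the threshold, and the `"flower"` label are assumptions made for this example:

```python
from dataclasses import dataclass
from typing import List, Optional

Image = List[List[int]]  # assumed format: rows of grayscale pixels in 0..255


@dataclass
class Detection:
    """What a detector returns: a label and a bounding box, not an image."""
    label: str
    x_min: int
    y_min: int
    x_max: int
    y_max: int


def detect_bright_object(image: Image, threshold: int = 128, label: str = "object") -> Optional[Detection]:
    """Toy stand-in for a detector: box around every pixel brighter than threshold."""
    coords = [
        (x, y)
        for y, row in enumerate(image)
        for x, pixel in enumerate(row)
        if pixel > threshold
    ]
    if not coords:
        return None  # nothing detected
    xs = [x for x, _ in coords]
    ys = [y for _, y in coords]
    return Detection(label, min(xs), min(ys), max(xs), max(ys))


# Hypothetical input: a bright 2x2 "flower" on a dark background
image = [
    [10, 10, 10, 10, 10],
    [10, 200, 220, 10, 10],
    [10, 210, 230, 10, 10],
    [10, 10, 10, 10, 10],
]
detection = detect_bright_object(image, label="flower")
```

A real detector would learn what a flower looks like instead of relying on brightness, but the shape of its output would be similar.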

Besides object recognition in images, there are other application scenarios for computer vision, such as classifying hand-written digits in images or detecting faces in a video.

3. Main Differences

What separates these two areas is the goal, not the method: image processing aims to transform an image, whereas computer vision aims to interpret what it shows.

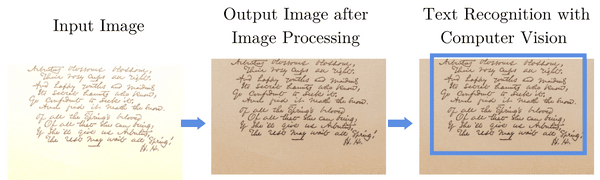

It’s common to find applications where we use image processing as a preprocessing stage for a later computer vision algorithm.

For instance, we can use image-processing techniques to improve brightness and contrast so that some text becomes clearer. That, in turn, can improve the performance of an object detector that finds the text and identifies the words in it.
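The preprocessing idea can be sketched as a two-stage pipeline. Both stages are deliberately simplistic assumptions: `stretch_contrast` is a basic min-max contrast stretch, and `find_bright_region` is a threshold-based stand-in for a real text detector. The image values are made up for this example:

```python
from typing import List, Optional, Tuple

Image = List[List[int]]          # assumed format: rows of grayscale pixels in 0..255
Box = Tuple[int, int, int, int]  # (x_min, y_min, x_max, y_max)


def stretch_contrast(image: Image) -> Image:
    """Image processing stage: rescale pixel values to span the full 0..255 range."""
    lo = min(min(row) for row in image)
    hi = max(max(row) for row in image)
    if hi == lo:
        return [row[:] for row in image]  # flat image, nothing to stretch
    return [[round(255 * (p - lo) / (hi - lo)) for p in row] for row in image]


def find_bright_region(image: Image, threshold: int = 128) -> Optional[Box]:
    """Computer vision stage (toy): bounding box of pixels above threshold, if any."""
    coords = [
        (x, y)
        for y, row in enumerate(image)
        for x, pixel in enumerate(row)
        if pixel > threshold
    ]
    if not coords:
        return None
    xs = [x for x, _ in coords]
    ys = [y for _, y in coords]
    return (min(xs), min(ys), max(xs), max(ys))


# Hypothetical low-contrast input: "text" pixels (110) barely differ from the background (90)
faded = [
    [90, 90, 90, 90],
    [90, 110, 110, 90],
    [90, 90, 90, 90],
]

box_without_preprocessing = find_bright_region(faded)   # the toy detector misses the text
stretched = stretch_contrast(faded)                     # image in, image out
box_with_preprocessing = find_bright_region(stretched)  # image in, bounding box out
```

In this made-up case, the detector finds nothing in the faded image but locates the region once the contrast has been stretched.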

3.1. Summary

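In summary, image processing takes an image as input and returns a transformed image, such as a brighter, sharper, or less noisy version. Computer vision takes an image or video as input and returns an interpretation of it, such as a label or a bounding box.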

4. Conclusion

Even with overlap and interdependence, image processing and computer vision are still different fields.

In this tutorial, we discussed how we can differentiate them.

We should remember that an image-processing method alters its input image’s properties. In contrast, computer vision will try to interpret what is represented in the picture or video.