Last updated: March 18, 2024

We always leave invisible traces of our activities when using a disk. Someone could retrieve our deleted and forgotten files after years even if we changed the partitioning or file system type.

In this tutorial, we’ll look at various solutions to remove confidential data from a disk’s free space.

When we delete a file, Linux only marks its inode and blocks as unused, which is fast because no data has to be erased. What actually happens, though, depends on the file system type.

In any case, the contents of the file remain on the disk. Whether the new data will overwrite the deleted data is not a given. It depends on many factors, such as the wear-leveling technology used by the disk.

Cleaning the free space always means overwriting it, but some myths and misunderstandings can mislead us. Let’s try to get some clarity.

All our directions will refer to file systems mountable with Linux. Still, we must exercise caution if other operating systems or network shares are using our disks because overwriting programs may conflict with other processes.

Moreover, typical overwriting solutions temporarily use all the free space on the disk, so other concurrent writes may fail. So, the golden rule is that no other program should use the disk while we're cleaning it up.

The examples below will refer to a test device used exclusively by our Linux machine to wipe its free space. As we will see later, another issue is that not all free space is overwritable on mounted partitions. So, we’ll also see how to clean up disks after unmounting their partitions.

There is a belief that several overwrites are necessary to avoid recovering confidential data, perhaps with different random patterns. But in today’s drives, multiple overwrites aren’t more effective than a single overwrite with 0s. And even two overwrites can take more than a day to erase a large capacity drive, resulting in time and wear issues.

The fact that intelligence agencies require the physical destruction of disks is sometimes argued as evidence that the data would be recoverable even if overwritten. This reasoning is incorrect. Even commercial data recovery software manufacturers assert the impossibility of recovering overwritten data. Physical destruction is only a quick and foolproof way to ensure data erasure, but of course, we don’t need such drastic and destructive methods.

Virtual disks can use a sparse allocation or a non-sparse allocation. Sparse allocation creates a sparse disk, so its size is initially tiny. It’ll increase according to disk usage. On the other hand, non-sparse allocation creates the entire fixed-size disk from the beginning.
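To make the difference concrete, here is a minimal sketch that uses ordinary files as stand-ins for virtual disk images. The file names and the 10 MiB size are arbitrary choices for illustration, and the exact block counts depend on the underlying file system (a compressing file system, for example, may store zeros without allocating blocks).

```shell
#!/bin/sh
# Illustrative only: regular files standing in for virtual disk images.
workdir=$(mktemp -d)
size=$((10 * 1024 * 1024))   # 10 MiB, an arbitrary demo size

# Sparse allocation: only the length is recorded, no data is written yet.
truncate -s "$size" "$workdir/sparse.img"

# Non-sparse allocation: every byte of the image is actually written.
dd if=/dev/zero of="$workdir/full.img" bs=1M count=10 status=none

# stat: %s is the apparent size in bytes, %b the number of allocated blocks.
sparse_apparent=$(stat -c %s "$workdir/sparse.img")
sparse_blocks=$(stat -c %b "$workdir/sparse.img")
full_apparent=$(stat -c %s "$workdir/full.img")
full_blocks=$(stat -c %b "$workdir/full.img")

echo "sparse: $sparse_apparent bytes apparent, $sparse_blocks blocks allocated"
echo "full:   $full_apparent bytes apparent, $full_blocks blocks allocated"

rm -rf "$workdir"
```

Both files report the same apparent size, but on most file systems the sparse one occupies few or no blocks until we write to it.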

Zeroing a block on a sparse virtual device may not wipe the block on the underlying physical disk. In fact, it’s unlikely to do so. Instead, the virtual disk manager will probably mark the block as no longer used so that it can be allocated to something else later.

Even for fixed-size virtual devices, we may have no control over where the device blocks physically live because administrators could move them to new locations at any time. This issue applies to all cloud services, such as VPSs and block storage, since we have no control over the server farm. In this case, zeroing a block wipes only its current location, not any previous locations where the block may have resided.

For these reasons, we should not trust any wipe action if we don’t have complete control of both virtual and host machines.

Beyond conceptual discussions, the surest way to realize the actual usefulness of a wiping technique is to check how many files are recoverable before and after wiping. For this purpose, we’ll use PhotoRec, an open-source data recovery software that ignores the file system and goes after the underlying data. So, it’ll still work even if our media’s file system is damaged or reformatted.

Our test device is an old 16GB USB stick containing a single ext4 partition. All our tests involve these steps:

Of course, our expectation is that PhotoRec won’t find any recoverable files after the wiping, but we’ll see that this isn’t always the case.
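To compare the two PhotoRec runs, we need the number of recovered files. PhotoRec writes them into directories named recup_dir.1, recup_dir.2, and so on, inside the destination we choose. The helper below is a small sketch for counting them. The function name count_recovered and the destination paths in the usage note are our own assumptions, not part of PhotoRec.

```shell
#!/bin/sh
# count_recovered BASE
# Prints the number of regular files found under BASE/recup_dir.*
# (hypothetical helper; BASE is whatever destination we gave PhotoRec).
count_recovered() {
    base=$1
    # report.xml is PhotoRec's own session report, not a recovered file,
    # so we leave it out of the count.
    find "$base" -path "$base/recup_dir.*" -type f ! -name report.xml 2>/dev/null \
        | wc -l | tr -d ' '
}
```

For example, if we saved the two runs into the hypothetical directories ~/before and ~/after, then count_recovered ~/before and count_recovered ~/after give the two numbers to compare.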

Many of today’s privacy-preserving tools create a big file that fills up a storage device to overwrite all the deleted files that the media contain. But while this technique is widespread, some simple tests such as ours below invalidate it.

A critical evaluation of this dominant free-space sanitization technique found the big file effective in overwriting file data on FAT32, NTFS, and HFS, but not on ext2/3 or ReiserFS. After this wiping technique, we could still recover up to 17% of the data from Linux ext2. Also, file metadata such as filenames are rarely overwritten.

The primary problem with this technique is that it sanitizes deleted files as a side effect of another file system operation, that is, the creation of a big file. Results are inconsistent because the behavior of this side effect is not specified.

According to that evaluation, we can significantly improve the big file technique by creating multiple small files a sector at a time after the big file is created but before it’s deleted.

BleachBit appears to use a similar strategy.

BleachBit has many features to help us easily clean our computers and maintain privacy.

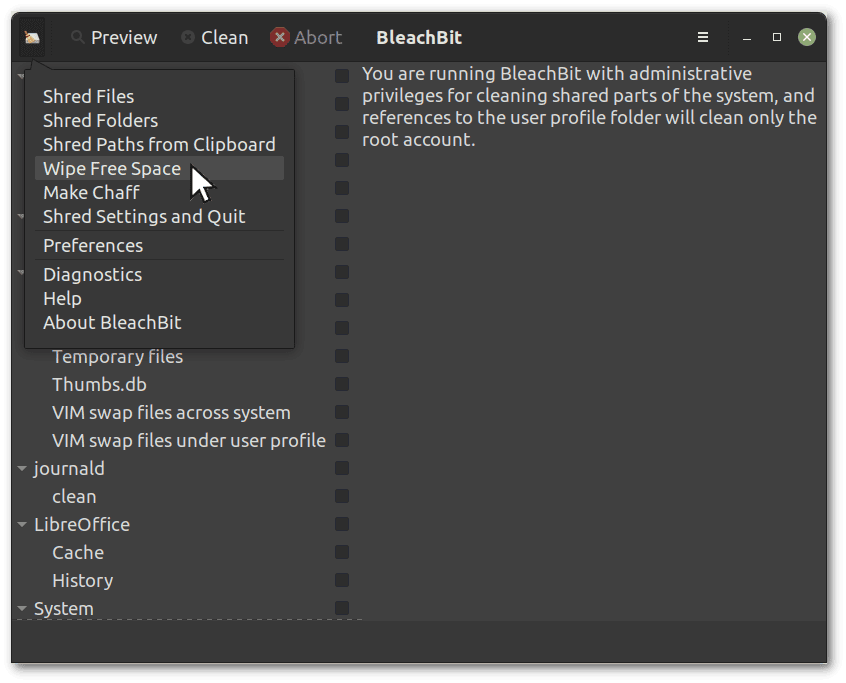

We’ll test the “Wipe Free Space” feature, which is available from the menu that opens by clicking the icon in the upper left corner:

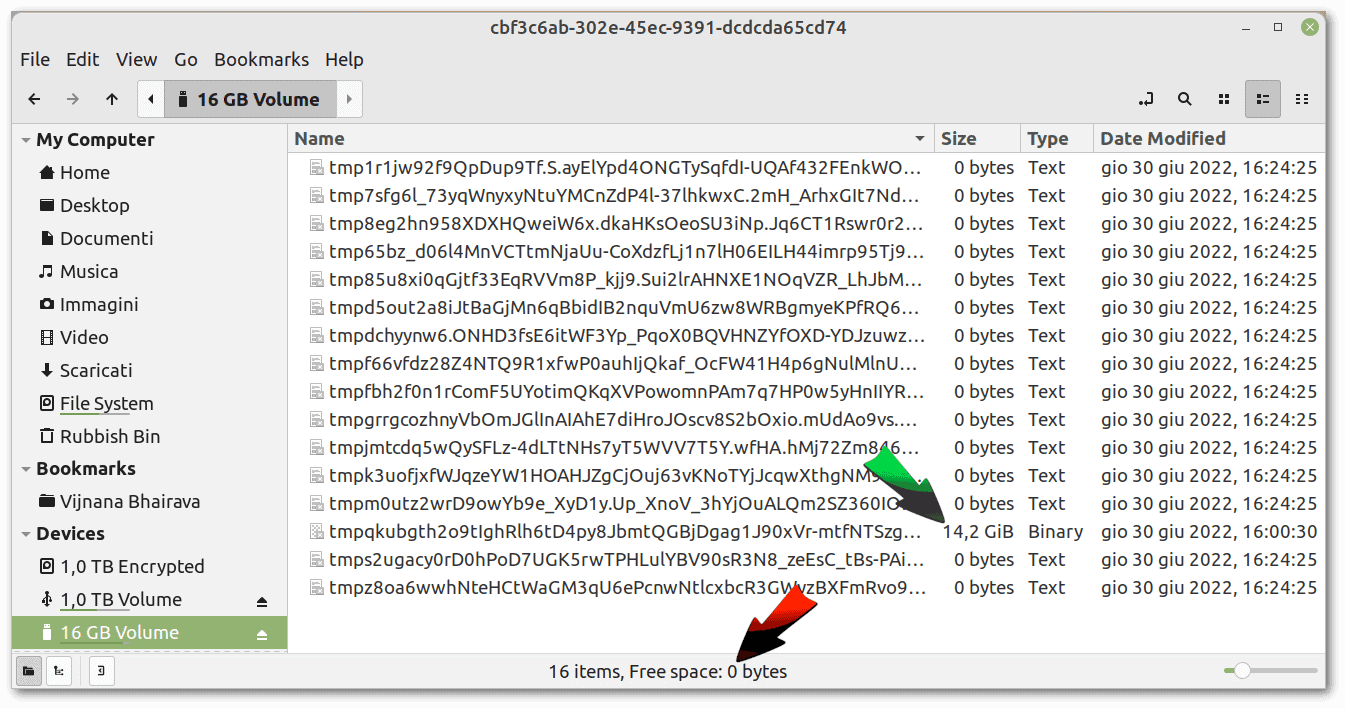

We observe that BleachBit first creates a large file to fill all the free space. Then, it creates a set of zero-byte files before deleting all the files it created.

The following screenshot shows all the files created by BleachBit:

Before BleachBit’s wiping, PhotoRec recovered 2185 files. These recovered files include many of those recently added and removed for our test, as well as other years-old files stored in the free space of the USB stick. After the wiping, PhotoRec recovered only 12.

Although the overall result is good, we couldn’t clean up the free space entirely.

cat together with sync is the easiest solution to create a big file that fills all the free space:

```
$ cat /dev/zero > zero.file
cat: write error: No space left on device
$ sync
$ rm zero.file
$ sync
```

Before creating our file full of zeros, PhotoRec recovered 1712 files. Afterward, it still managed to recover 396. Overall, therefore, the result is worse than BleachBit’s.

All this confirms that the big file approach is not valid. To this, we must add other problems, including the 4GB file limit on FAT32 and the lack of a progress percentage.

We must also remember that ext4, used in our test device, has 5% reserved space by default, so we can’t perform a full overwrite this way.
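As a rough worked example, we can estimate how much space that reservation represents. The sketch below assumes the block count and block size that mkfs.ext4 reports for our test device later in this article (3811840 blocks of 4k) and the default 5% reservation. On a real file system, the "Reserved block count" line printed by tune2fs -l shows the actual value.

```shell
#!/bin/sh
# Estimate of the ext4 reserved space, using figures assumed from the
# mkfs.ext4 output for the test device; adjust them for other devices.
blocks=3811840      # total file system blocks
block_size=4096     # bytes per block ("4k blocks")
reserved_pct=5      # ext4 default reservation

reserved_blocks=$((blocks * reserved_pct / 100))
reserved_bytes=$((reserved_blocks * block_size))
reserved_mib=$((reserved_bytes / 1024 / 1024))   # integer division, rounds down

echo "reserved: $reserved_blocks blocks, about $reserved_mib MiB"
```

On a 16GB stick, this is several hundred MiB that a big file created by an ordinary user may never reach.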

Completely overwriting our test device, with the destruction of the partition table as well, is a drastic but effective solution.

Therefore, let’s umount the partition, overwrite the entire device with zeros, and recreate the partition with parted and mkfs.ext4:

```
# umount /dev/sdd1
# cat /dev/zero > /dev/sdd
cat: write error: No space left on device
# sync
# parted -s /dev/sdd mklabel gpt
# parted -s /dev/sdd unit mib mkpart primary 0% 100%
# mkfs.ext4 /dev/sdd1
mke2fs 1.45.5 (07-Jan-2020)
Creating filesystem with 3811840 4k blocks and 954720 inodes
Filesystem UUID: 9df314f1-da16-4898-b417-e70b18f9de5d
Superblock backups stored on blocks:
	32768, 98304, 163840, 229376, 294912, 819200, 884736, 1605632, 2654208

Allocating group tables: done
Writing inode tables: done
Creating journal (16384 blocks): done
Writing superblocks and filesystem accounting information: done

# partprobe
```

The last partprobe command is probably not necessary. We only ran it to ensure the kernel sees the new partitioning.

Before this extreme cleanup, we had 396 recoverable files. After that, there’s not a single one. So this approach is valid.
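Besides running PhotoRec, we can check an overwrite directly by comparing the target against /dev/zero. The sketch below shows the idea on a temporary file, so it's safe to run as an ordinary user. The function name is_all_zero is our own. For a real device, we'd run the check as root right after the overwrite and before recreating the partition table, passing the device path and its size (for instance, as reported by blockdev --getsize64).

```shell
#!/bin/sh
# is_all_zero PATH SIZE_BYTES
# Succeeds only if the first SIZE_BYTES of PATH exist and are all zero bytes.
# (Hypothetical helper; cmp -n limits the comparison to SIZE_BYTES.)
is_all_zero() {
    cmp -s -n "$2" "$1" /dev/zero
}

# Demo on a 1 MiB temporary file instead of a real device.
img=$(mktemp)
head -c 1048576 /dev/zero > "$img"
if is_all_zero "$img" 1048576; then
    echo "image contains only zeros"
fi

# Plant a few non-zero bytes in the middle and check again.
printf 'secret' | dd of="$img" bs=1 seek=4096 conv=notrunc status=none
if ! is_all_zero "$img" 1048576; then
    echo "image still contains non-zero data"
fi

rm -f "$img"
```

Reading back a whole device takes about as long as writing it, so this check roughly doubles the time of the procedure.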

Under normal conditions, we should save all our data to another device, entirely overwrite the disk we want to wipe, and finally re-copy the data after recreating the partitions. In this scenario, cloning the file system hierarchy with rsync could help migrate an operating system from one disk to another.

However, suppose someone disassembles an SSD, removes its flash memory chips, and reads them directly. In that unlikely case, they may obtain some data even after all the SSD’s sectors have been zeroed. Exactly how much and what data is recoverable depends on the SSD controller’s algorithms, and no software application can prevent this. Still, the data recoverable this way are likely to be only unusable fragments of files, so in real life, it’s not an actual problem.

This solution is less drastic and easier to use than complete device overwriting. zerofree finds the unallocated blocks with non-zero value content in an unmounted ext2, ext3, or ext4 filesystem and fills them with zeroes (or another octet of our choice).

Let’s give it a try:

```
# umount /dev/sdd1
# zerofree /dev/sdd1
# sync
```

Before running zerofree, PhotoRec found 1730 recoverable files. After running it, it didn’t find a single one, thus proving the validity of this cleaning tool. However, the same limitations we discussed already about the possibility of entirely overwriting SSDs apply.

In this article, we learned that cleaning the free space of a disk is far from trivial because there are many limitations, complications, and mistaken beliefs.

We looked at multiple tools and approaches to wipe free space. Among the various solutions, zerofree is the simplest one. However, it requires the file system to be ext2/3/4. Otherwise, we can do a complete disk overwrite, but that requires special care.

Instead, we should avoid the tools that fill the free space with a big file because, often, the result is not the desired one.