Learn through the super-clean Baeldung Pro experience:

>> Membership and Baeldung Pro.

No ads, dark-mode and 6 months free of IntelliJ Idea Ultimate to start with.

Last updated: May 26, 2020

In Unix, we can pipe multiple processes, one after the other, to form a pipeline, so that messages can pass between them linearly. In this tutorial, we’ll learn about the tee command and use it as a T-splitter within a pipeline.

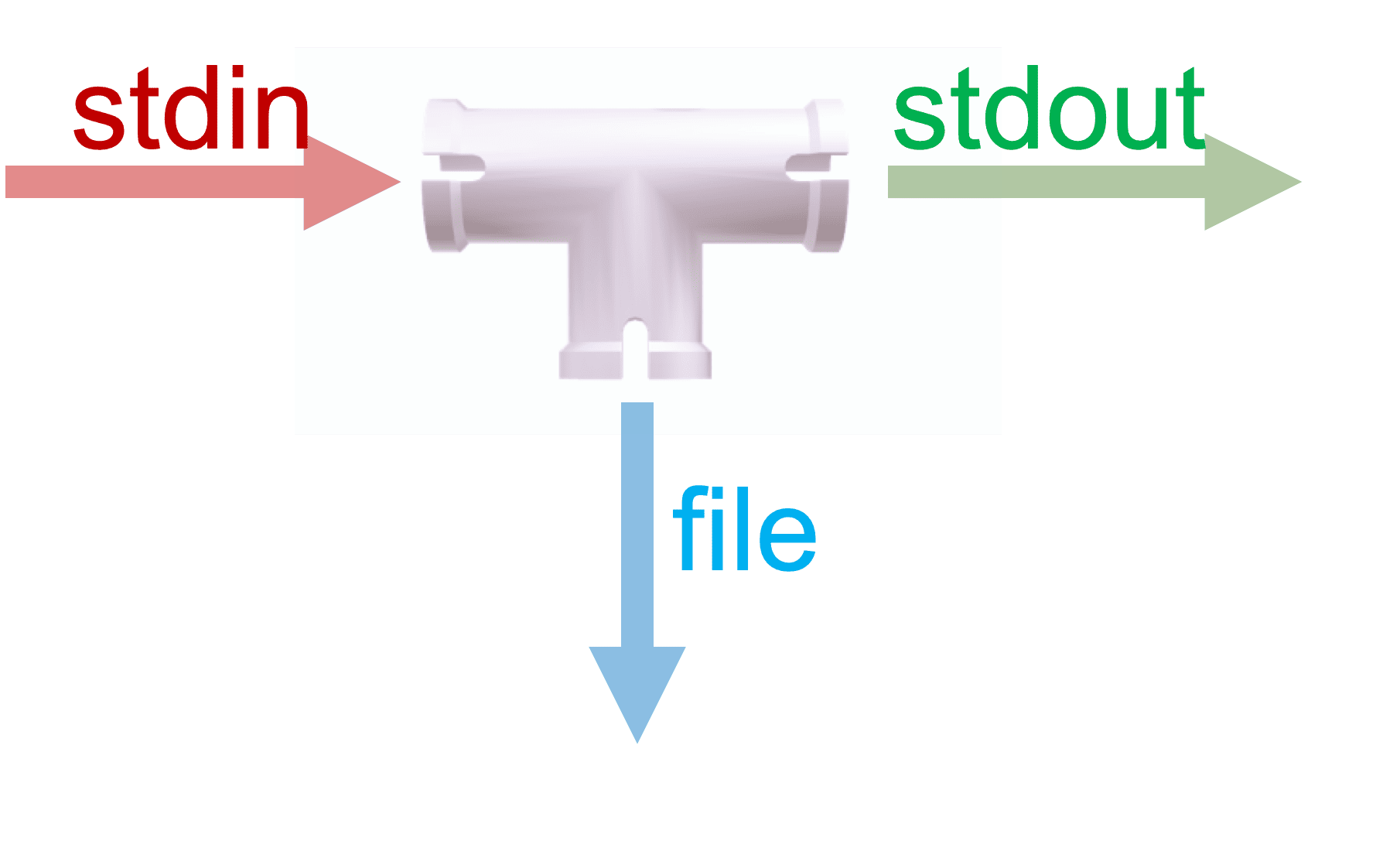

As the name suggests, we can use the tee command to create a T-splitter with one inlet and one or more outlets. Let’s acquaint ourselves with tee‘s invocation syntax and strategies:

tee [-ai] [file ...]Essentially, the inlet is connected with the standard input stream, while one of the outlets is connected with the standard output stream. Additionally, we can have as many more outlets as we need by providing filenames during invocation:

We must note that, by default, tee always overwrites the files unless we use the -a switch to use it in append mode.

Now that we know the basics, let’s see tee in action:

We must note that by pressing Ctrl+C keys, we can send the SIGINT signal to a program. And, by default, tee handles this interrupt by terminating its execution. However, if need be, we can disable it with the -i switch.

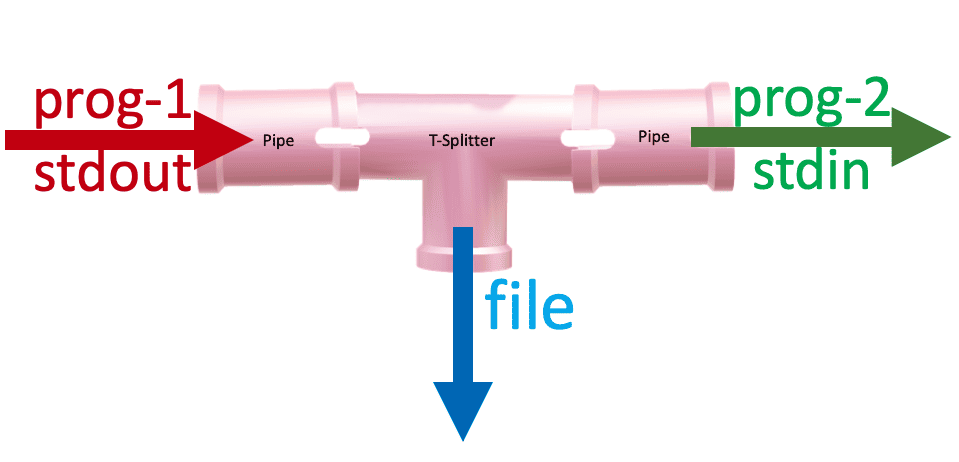

More practical use of a T-splitter is when we use it in conjunction with pipes within a pipeline. By doing so, we can capture the intermediary output from the first program into a file, before the second program transforms it:

Let’s imagine that we have an unsorted list of grocery items in the file grocery-supply.txt:

$ cat grocery-supply.txt

Milk

Eggs

Almonds

Sugar

SaltFurther, we have two requirements to fulfil:

As the input for the second task depends on the output from the first task, using pipes would be the right choice to make. On each side of the pipe, we can use the sort and head commands, respectively:

$ sort grocery-supply.txt | head -1

Almonds

Well, we can see that our output only includes the first item from the sorted version of the list. But, we’ve lost the sorted version of the list.

For such a scenario, using a T-splitter is quite apt:

$ sort grocery-supply.txt | tee grocery-supply-sorted.txt | head -1

Almonds

$ cat grocery-supply-sorted.txt

Almonds

Eggs

Milk

Salt

SugarIt looks like we’ve nailed it!

So far, so good. Now, we’re well equipped to use these concepts as building blocks to solve much more complex problems that involve using process substitution and filter commands such as awk, sort, cut, and so on.

Let’s imagine that we’re maintaining a list of our grocery shopping in the grocery-shopping.txt file:

$ cat grocery-shopping.txt

Day-1:Milk:4

Day-3:Butter:2

Day-5:Eggs:12

Day-8:Milk:2

Day-9:Milk:2

Day-10:Eggs:6

Now, we have a requirement to generate a few reports using the shopping data:

With time, our requirements for individual reports could change. So, our strategy should incorporate for future use cases of addition, removal, and modification of the individual reports.

Now, as all the reports depend on the contents of grocery-shopping.txt, and we want to minimize the coupling between individual reports. So, it’d be wise if we keep the logic of report generation in their individual scripts, namely report-quantity.sh, report-rev-chronological.sh, and report-last-shopping-day.sh:

$ ./report-quantity.sh < grocery-shopping.txt

$ ./report-rev-chronological.sh < grocery-shopping.txt

$ ./report-last-shopping-day.sh < grocery-shopping.txtFurther, we can use the cut, awk, and tail commands within these individual scripts to filter and aggregate values.

Our reverse chronological shopping list report can be generated by using -r switch of the tail command:

$ cat report-rev-chronological.sh

#!/bin/sh

tail -r > rev-grocery-shopping.txtOn the other hand, our item-wise quantity report can make use of awk arrays to loop through the line records. For each record, it increases the quantity of an item by the quantity available as the third input field ($3):

$ cat report-quantity.sh

#!/bin/sh

awk -F':' \

'

{

items[$2]+=$3

}

END {

for (item in items) {

reportname="quantity-"item".txt"

print items[item] >reportname

}

}

'In the end block, each item’s quantity is written into a file named quantity-<item>.txt.

And, lastly, our last day shopping report can use a combination of tail and cut filters:

$ cat report-last-shopping-day.sh

#!/bin/sh

tail -1 | cut -f1 -d':' > last-shopping-day.txtInstead of invoking each report separately, we can use the tee command along with process substitution to make effective use of a common data source. Well, the idea is that instead of writing stdin to stdout and files, we can feed stdin directly into processes:

$ tee >(prog1) >(prog2) >(prog3) ...So, we can now invoke each of our report generation scripts using process substitution and tee:

$ cat grocery-shopping.txt \

| tee \

>(./report-quantity.sh) \

>(./report-rev-chronological.sh) \

>(./report-last-shopping-day.sh) \

1 >/dev/null

As all the reports are writing to their individual report files, we don’t need anything to be printed on the screen. So, the last line discards anything from stdout to the /dev/null device.

Finally, let’s verify the reports generated:

$ grep '.*' quantity-* last-shopping-day.txt rev-grocery-shopping.txt

quantity-Butter.txt:2

quantity-Eggs.txt:18

quantity-Milk.txt:8

last-shopping-day.txt:Day-10

rev-grocery-shopping.txt:Day-10:Eggs:6

rev-grocery-shopping.txt:Day-9:Milk:2

rev-grocery-shopping.txt:Day-8:Milk:2

rev-grocery-shopping.txt:Day-5:Eggs:12

rev-grocery-shopping.txt:Day-3:Butter:2

rev-grocery-shopping.txt:Day-1:Milk:4We started this tutorial with a motivation to learn about the tee command for creating T-splitters within a pipeline. And, on the way, we discovered the significance of pipes and process substitution to leverage the real power of the tee utility.

With these strong foundations, we’re only limited by our creativity to solve more advanced problems with tee and filter utilities.