Last updated: February 28, 2025

In this tutorial, we’ll introduce neural style transfer (NST). First, we’ll briefly define the term, and then we’ll present the algorithm in detail. Finally, we’ll discuss some applications and challenges of neural style transfer.

Neural style transfer has become increasingly popular in recent years thanks to the capabilities of deep learning models. Specifically, it’s an algorithm that combines the style of one input image with the content of another input image to generate a new image with the style of the first and the content of the second.
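One common way to formalize this idea, following the original formulation by Gatys et al., is as an optimization problem over the generated image $I_g$, given a content image $I_c$ and a style image $I_s$. The notation below is ours, not the article’s, and $\alpha$ and $\beta$ are the weights that balance the two terms:

$$
I_g^{*} = \arg\min_{I_g} \Big[ \alpha \, \mathcal{L}_{content}(I_c, I_g) + \beta \, \mathcal{L}_{style}(I_s, I_g) \Big]
$$

Here, $\mathcal{L}_{content}$ measures how far $I_g$ is from the content of $I_c$, and $\mathcal{L}_{style}$ measures how far it is from the style of $I_s$. A larger ratio $\beta / \alpha$ favors style over content.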

But what do content and style refer to?

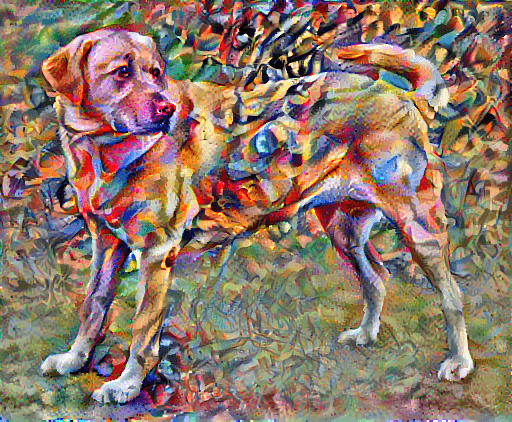

The content of an image consists of the objects, the shapes, and the overall structure and geometry of the image. For example, the content of the image below is a dog:

On the other hand, the style of an image relates to its textures, colors, and patterns. For example, the image below depicts Wassily Kandinsky’s painting Composition VII. Its style is unique and characteristic of Kandinsky’s work:

Using neural style transfer, we can combine the content of the first image with the style of the second to generate the image below:

To better understand the concept of neural style transfer, let’s dive into the details of the algorithm.

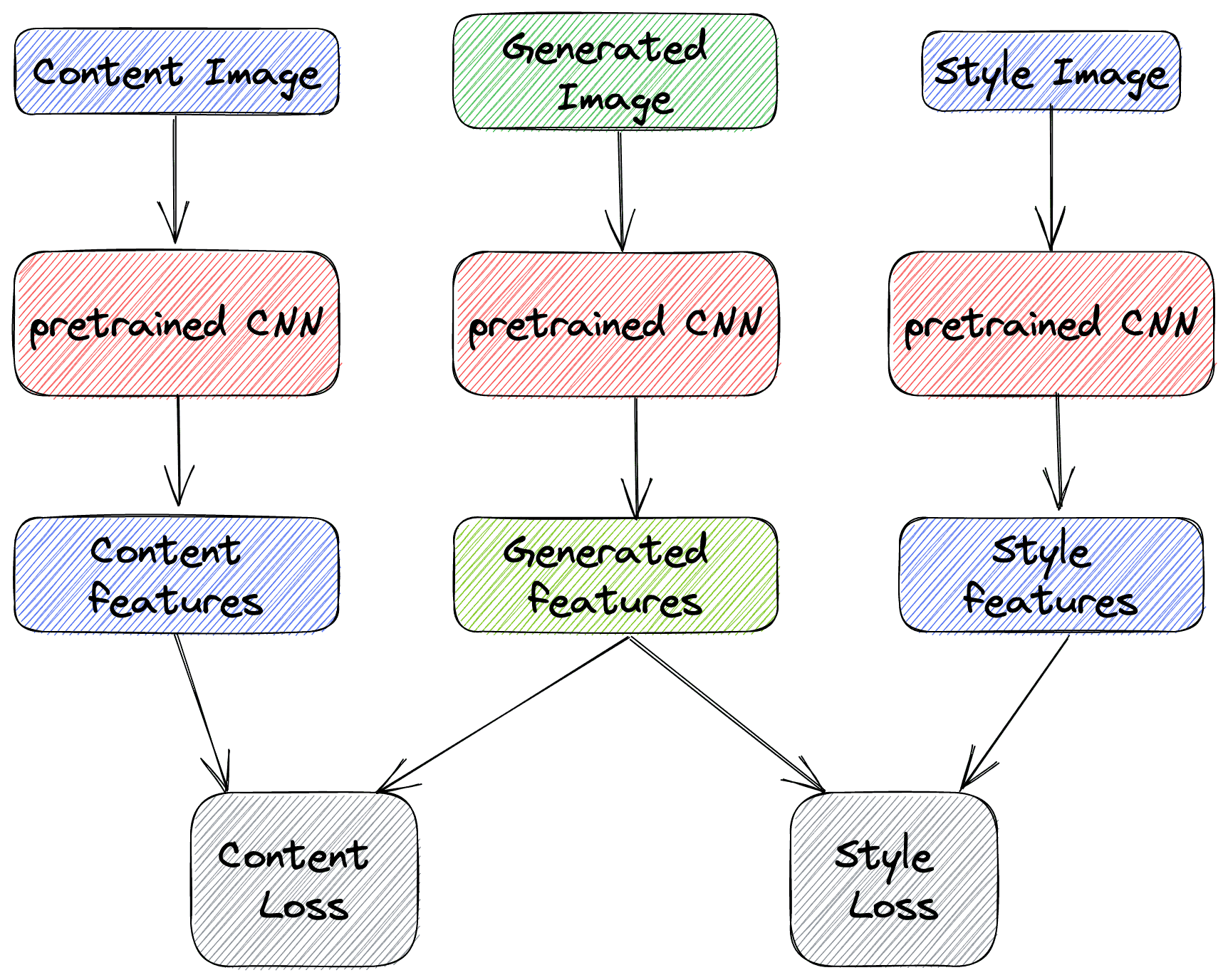

The algorithm can be divided into the following steps:

Often, as an extra step, the generated image is post-processed with various visual filters to improve its quality.
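To make the losses behind the algorithm more concrete, here’s a minimal pure-Python sketch. It follows the widely used formulation of Gatys et al., where content is compared through feature maps and style through their Gram matrices, and the generated image is optimized by gradient descent for a fixed number of epochs. It rests on loud assumptions: the "feature maps" are given directly as small C × N lists (C channels, N pixels), so an identity feature extractor stands in for the layers of a pretrained CNN, and the normalization constants are illustrative choices. The helpers `gram`, `content_loss`, `style_loss`, `total_loss`, `gradient`, and `style_transfer` are hypothetical names, not a library API:

```python
# Minimal NST sketch in pure Python (illustrative, not a production implementation).
# ASSUMPTION: feature maps are given directly as C x N lists (C channels, N pixels),
# i.e. an identity "feature extractor" stands in for the layers of a pretrained CNN.
# ASSUMPTION: the normalization constants (1/2, 1/N, 1/C**2) are illustrative choices.

Matrix = list[list[float]]


def gram(features: Matrix) -> Matrix:
    """Style representation: G[i][j] = (1/N) * sum_k F[i][k] * F[j][k]."""
    n = len(features[0])
    return [[sum(a * b for a, b in zip(fi, fj)) / n for fj in features] for fi in features]


def content_loss(gen: Matrix, content: Matrix) -> float:
    """Half the sum of squared differences between generated and content features."""
    return 0.5 * sum((g - c) ** 2 for rg, rc in zip(gen, content) for g, c in zip(rg, rc))


def style_loss(gen: Matrix, style: Matrix) -> float:
    """Sum of squared Gram-matrix differences, divided by C**2."""
    gg, gs = gram(gen), gram(style)
    channels = len(gen)
    return sum((a - b) ** 2 for ra, rb in zip(gg, gs) for a, b in zip(ra, rb)) / channels**2


def total_loss(gen: Matrix, content: Matrix, style: Matrix, alpha: float, beta: float) -> float:
    """Weighted sum of the content and style losses."""
    return alpha * content_loss(gen, content) + beta * style_loss(gen, style)


def gradient(gen: Matrix, content: Matrix, style: Matrix, alpha: float, beta: float) -> Matrix:
    """Analytic gradient of total_loss with respect to the generated features."""
    channels, n = len(gen), len(gen[0])
    gg, gs = gram(gen), gram(style)
    grad = []
    for i in range(channels):
        row = []
        for k in range(n):
            d_content = gen[i][k] - content[i][k]
            # With G = F F^T / N and A = gram(style), the derivative of
            # sum_ij (G_ij - A_ij)**2 / C**2 with respect to F is 4 / (N C**2) * (G - A) F.
            d_style = 4 / (n * channels**2) * sum(
                (gg[i][j] - gs[i][j]) * gen[j][k] for j in range(channels)
            )
            row.append(alpha * d_content + beta * d_style)
        grad.append(row)
    return grad


def style_transfer(content: Matrix, style: Matrix, alpha: float = 1.0, beta: float = 1.0,
                   epochs: int = 200, lr: float = 0.05) -> Matrix:
    """Gradient descent on the generated image, initialized as a copy of the content image."""
    gen = [row[:] for row in content]
    for _ in range(epochs):
        grad = gradient(gen, content, style, alpha, beta)
        gen = [[g - lr * d for g, d in zip(rg, rd)] for rg, rd in zip(gen, grad)]
    return gen


if __name__ == "__main__":
    content = [[1.0, 0.0, 1.0], [0.0, 1.0, 0.0]]  # hypothetical 2-channel, 3-pixel features
    style = [[0.5, 0.5, 0.5], [1.0, 0.0, 1.0]]
    result = style_transfer(content, style)
    print("loss before:", total_loss(content, content, style, 1.0, 1.0))
    print("loss after: ", total_loss(result, content, style, 1.0, 1.0))
```

In a real implementation, the features come from several layers of a pretrained network, and the gradient is obtained through automatic differentiation rather than derived by hand as it is here.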

In the diagram below, we can see what the algorithm looks like:

NST is a powerful technique with many practical applications. Here, we’ll discuss some of them.

The most common application of NST is in art, where it can generate unique artwork and open up new creative possibilities. For example, we can combine the style of a famous artist with any content we want to generate new works of art.

NST can be useful in game development, since new visual environments can be created without spending many hours designing them from scratch. Similarly, in the film industry, NST can generate visual effects that create an immersive experience for the audience.

Finally, another field where NST is beneficial is fashion. Specifically, the style of one image and the pattern of a textile can be combined to create unique, visually appealing textile designs. These capabilities have transformed the fashion domain, since designers can now experiment with many different styles and create entirely new designs.

Despite its success in generating realistic images, NST also faces several challenges that we should keep in mind. Let’s focus on two of them.

If we recall the algorithm described previously, we’ll see that only two hyperparameters affect the output pixels of the generated image: the number of epochs and the weight between the content and the style loss. As a result, we have very limited control over the content and the style of the generated image. The output image will combine the style of one input image with the content of the other, but the amount of each component can’t be easily determined.
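To illustrate why the weight gives only indirect control, here’s a deliberately tiny toy example of our own, not part of the original algorithm. The "image" is a single pixel `x` with one feature channel, so its Gram matrix is simply `x**2`. The content loss pulls `x` toward a content value `c`, while the style loss pulls `x**2` toward a style statistic `s**2`. The function `optimize_pixel` and all the values used are hypothetical:

```python
# Toy illustration of the content/style weight trade-off (hypothetical example).
# The "image" is a single pixel with a single feature channel, so its Gram matrix
# is just x**2. Content loss pulls x toward c; style loss pulls x**2 toward s**2.


def optimize_pixel(c: float, s: float, alpha: float, beta: float,
                   epochs: int = 20000, lr: float = 1e-3) -> float:
    """Gradient descent on alpha*(x - c)**2 + beta*(x**2 - s**2)**2, starting at x = c."""
    x = c
    for _ in range(epochs):
        grad = 2 * alpha * (x - c) + 4 * beta * x * (x**2 - s**2)
        x -= lr * grad
    return x


if __name__ == "__main__":
    c, s = 1.0, 2.0
    for beta in (0.01, 0.1, 1.0, 10.0):
        x = optimize_pixel(c, s, alpha=1.0, beta=beta)
        content = (x - c) ** 2
        style = (x**2 - s**2) ** 2
        print(f"beta={beta:>5}: x={x:.3f}  content loss={content:.4f}  style loss={style:.4f}")
```

As `beta` grows, the pixel drifts from the content value toward the style target, but not in proportion to the weight, which mirrors the difficulty of picking a weight that yields a specific mix of content and style. The number of epochs plays a similarly indirect role: stopping early leaves the result closer to its initialization (here, the content value).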

As in many domains of deep learning, NST lacks interpretability, since we don’t know exactly why the generated image ends up in its final form. We can only visually inspect whether it retained the style and the content we wanted, but we can’t explain why or how it ended up this way.

In this article, we discussed neural style transfer. First, we defined the term and presented the algorithm in detail. Then, we described some applications and challenges of neural style transfer.