Last updated: May 15, 2024

In this tutorial, we’ll define endianness, explain where the term comes from, and compare big-endian and little-endian byte ordering.

Most modern languages are written left to right, like this very sentence, but some are written right to left, and some are not even written horizontally. This is a useful analogy for endianness: just as text can run in either direction, the bytes of a value can be stored in either order.

In computing systems, bytes are the basic unit of storage. Endianness arises because different machines arrange the bytes of a multi-byte value in different orders. A machine can always read its own data, but problems arise when one system stores data and another system, using a different byte order, tries to read it. The solution is simple: both sides agree on a standard format, or the data always includes a header that identifies the byte order. If the reader sees the header backward, the data was written in the other order, and the reader should convert it.
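One real-world instance of such a header is the byte-order mark (BOM) that can precede UTF-16 text. The sketch below is a minimal illustration of the idea, not a full decoder; the helper name `decode_utf16_with_bom` is our own, and it relies on the BOM constants from Python's standard `codecs` module:

```python
import codecs

def decode_utf16_with_bom(data: bytes) -> str:
    """Decode UTF-16 bytes by inspecting the 2-byte byte-order mark."""
    if data.startswith(codecs.BOM_UTF16_BE):   # bytes FE FF: big-endian
        return data[2:].decode("utf-16-be")
    if data.startswith(codecs.BOM_UTF16_LE):   # bytes FF FE: the mark seen "backward"
        return data[2:].decode("utf-16-le")
    raise ValueError("no byte-order mark found")

# The same text written by two hypothetical systems with opposite byte orders.
big = codecs.BOM_UTF16_BE + "hi".encode("utf-16-be")
little = codecs.BOM_UTF16_LE + "hi".encode("utf-16-le")

print(decode_utf16_with_bom(big), decode_utf16_with_bom(little))
```

The two byte strings differ, yet the reader recovers the same text from both because the header tells it which order to use.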

So, in computing, we define endianness as the order of bytes inside a word of data stored in computer memory. Big-endian and little-endian are the two main byte orders. Big-endian keeps the most significant byte of a word at the smallest memory address and the least significant byte at the largest. On the other hand, little-endian keeps the least significant byte at the smallest memory address and the most significant byte at the largest. Many computer architectures offer adjustable endianness for instruction fetches, data fetches, and storage; this is called bi-endianness. There are also some other orderings, such as middle-endian or mixed-endian.
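To see which of the two orders the current machine uses, we can look at how it lays out a small integer in memory. This is a minimal sketch; the function name `host_byte_order` is our own, and it assumes the host is either big-endian or little-endian:

```python
import struct
import sys

def host_byte_order() -> str:
    """Return 'little' or 'big' based on how the host stores the 16-bit value 1."""
    # "=H" packs an unsigned 16-bit integer in the host's native byte order.
    first_byte = struct.pack("=H", 1)[0]
    # If the least significant byte (0x01) comes first, the host is little-endian.
    return "little" if first_byte == 1 else "big"

# sys.byteorder reports the same information directly.
print(host_byte_order(), sys.byteorder)
```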

Endianness can also refer to the order in which bits are transmitted over a communications channel. When writing network code in the C programming language, for example when setting a port number on a socket, we have to convert values explicitly so that both parties agree on the byte order.
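In C socket code, this adjustment is commonly done with the `htons` and `ntohs` family of functions, which convert between host and network byte order. Python's `socket` module exposes the same functions, so we can sketch the idea as follows; the port number 8080 is only an example value:

```python
import socket
import struct

PORT = 8080  # example port number, 0x1F90 in hexadecimal

# htons converts a 16-bit value from host to network (big-endian) byte order.
# On a big-endian host it changes nothing; on a little-endian host it swaps the two bytes.
network_port = socket.htons(PORT)

# The "!" prefix in struct always produces network byte order, whatever the host is.
wire_bytes = struct.pack("!H", PORT)
print(wire_bytes.hex())  # 1f90
```

Using `struct.pack` with `!` avoids depending on the host's byte order at all, which is usually the simpler choice when building a message byte by byte.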

The word “endianness” may sound odd, but the concept is easier to grasp once we know where the word comes from. The terms big-endian and little-endian were coined by Danny Cohen, one of the key figures behind the separation of the Transmission Control Protocol (TCP) and the Internet Protocol (IP), but the adjective endian has its origin in the novel Gulliver’s Travels. In this novel, Jonathan Swift depicts the fight between Lilliputians who are split into two groups over whether to break an egg’s shell at the big end or the little end:

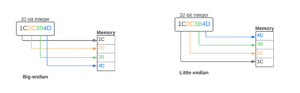

The figure below summarizes the differences between big-endian and little-endian:

As we can see, in the case of big-endian, we locate the most significant byte of the 32-bit integer at the byte with the lowest address in the memory. The rest of the data is located in order in the next three bytes in memory.

On the other hand, in the case of little-endian, we locate the least significant byte of the data at the byte with the lowest address. The rest of the data follows in order in the next three bytes in memory, ending with the most significant byte.

Two different computers can use these two byte orders to store a 32-bit (4-byte) integer with the value 0x1C2C3B4D. In both cases, we divide the integer into four bytes, and those bytes are stored in a sequential manner.
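We can reproduce both layouts for the value 0x1C2C3B4D with Python's built-in `int.to_bytes`. The offsets printed below stand in for increasing memory addresses; this is an illustration of the layout, not a dump of real memory:

```python
value = 0x1C2C3B4D

big = value.to_bytes(4, byteorder="big")        # most significant byte first
little = value.to_bytes(4, byteorder="little")  # least significant byte first

for offset in range(4):
    print(f"address +{offset}: big-endian {big[offset]:02X}  little-endian {little[offset]:02X}")

# address +0: big-endian 1C  little-endian 4D
# address +1: big-endian 2C  little-endian 3B
# address +2: big-endian 3B  little-endian 2C
# address +3: big-endian 4D  little-endian 1C
```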

Everything works fine under the hood when the computers use the same endianness to store and retrieve the integer. However, when we share memory contents among computers using different endianness, the reader interprets the bytes in the wrong order and obtains a different value unless it converts them first.
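The following sketch shows the kind of issue that appears, using the same value 0x1C2C3B4D. The helper `swap32` is a hypothetical name for a 32-bit byte swap written with standard integer methods:

```python
def swap32(x: int) -> int:
    """Reverse the byte order of a 32-bit unsigned integer."""
    return int.from_bytes(x.to_bytes(4, byteorder="little"), byteorder="big")

# Bytes as written by a big-endian machine.
stored = (0x1C2C3B4D).to_bytes(4, byteorder="big")

# A little-endian reader that does not convert sees a different number.
misread = int.from_bytes(stored, byteorder="little")
print(hex(misread))          # 0x4d3b2c1c

# Swapping the bytes recovers the original value.
print(hex(swap32(misread)))  # 0x1c2c3b4d
```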

In different layers of computing, different endianness can be dominant. For example, big-endianness is the dominant ordering in networking protocols, including the internet protocol suite. On the other hand, little-endianness is the dominant ordering for processor architectures such as x86, ARM, and RISC-V and their related memory. File formats can use either one. Some even use a combination of the two or have a flag indicating which ordering is used throughout the file.
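TIFF is one file format that carries such a flag: its header starts with the two bytes `II` for little-endian or `MM` for big-endian, followed by the 16-bit number 42 in that order. A minimal sketch of honoring the flag might look like this; the function name `read_tiff_magic` is our own, and it reads only the first four bytes of the header:

```python
import struct

def read_tiff_magic(header: bytes) -> int:
    """Read the 16-bit magic number of a TIFF header in the order its flag declares."""
    flag = header[:2]
    if flag == b"II":      # little-endian
        fmt = "<H"
    elif flag == b"MM":    # big-endian
        fmt = ">H"
    else:
        raise ValueError("not a TIFF byte-order flag")
    (magic,) = struct.unpack(fmt, header[2:4])
    return magic

print(read_tiff_magic(b"II\x2a\x00"), read_tiff_magic(b"MM\x00\x2a"))  # 42 42
```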

In this article, we’ve defined endianness and clarified what big-endianness and little-endianness are. We’ve analyzed how they work and where they are dominant. It’s one of the basic concepts in computing and a crucial concept at almost every level of computing.