Last updated: March 18, 2024

In this tutorial, we’ll explain statistical independence.

Merriam-Webster lists several meanings of the word independent. In mathematics, we usually go with a variation of “not determined by or capable of being deduced or derived from or expressed in terms of members (such as axioms or equations) of the set under consideration”.

Something similar holds in probability theory and statistics. We say that two events are statistically independent if the probability of one doesn’t change when we learn that the other event occurred (or didn’t occur), and vice versa.

But why do we bother with this?

Let’s say we’re developing a vaccine and want to check if an expensive component improves the protection. We need to test the vaccine on two randomly selected groups of test subjects. One group gets the expensive version, and the other receives the version without the component. If the incidence of the disease is the same in both groups, adding the component doesn’t change the chance that the vaccine protects against the disease.

So, the events “the vaccine contains this very expensive component” and “the vaccine protects those who get a shot” will be independent. Consequently, we can produce the vaccine without an expensive part and save money.

The independence of events has a precisely defined meaning in statistics. Events $A$ and $B$ are independent of one another (which we write as $A \perp B$) if their joint probability is the product of their individual probabilities:

$$P(A \cap B) = P(A) P(B) \tag{1}$$

For instance, with illustrative values such as $P(A) = 0.5$ and $P(B) = 0.7$, the joint probability $P(A \cap B)$ needs to be $0.5 \cdot 0.7 = 0.35$ for $A$ and $B$ to be independent.
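As a quick sanity check of the product rule in Equation (1), the sketch below enumerates one roll of a fair six-sided die and tests the rule exactly with rational arithmetic. The die, the three events, and the helper names `prob` and `is_independent` are illustrative assumptions rather than part of the definition.

```python
from fractions import Fraction

# Sample space: one roll of a fair six-sided die (an assumed toy example).
OMEGA = frozenset(range(1, 7))

def prob(event):
    """Probability of an event (a set of outcomes) under the uniform distribution."""
    return Fraction(len(set(event) & OMEGA), len(OMEGA))

def is_independent(a, b):
    """Check the product rule P(A and B) == P(A) * P(B) exactly."""
    return prob(set(a) & set(b)) == prob(a) * prob(b)

even = {2, 4, 6}              # P = 1/2
at_most_four = {1, 2, 3, 4}   # P = 2/3
at_most_three = {1, 2, 3}     # P = 1/2

print(is_independent(even, at_most_four))   # True:  1/3 == 1/2 * 2/3
print(is_independent(even, at_most_three))  # False: 1/6 != 1/2 * 1/2
```

Using `Fraction` instead of floats avoids rounding issues when comparing the two sides of the equation.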

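We can make independence more intuitive by using conditional probabilities. Let’s recall that, for $P(B) > 0$:

$$P(A \mid B) = \frac{P(A \cap B)}{P(B)}$$

So, if $A$ and $B$ are independent, we get:

$$P(A \mid B) = \frac{P(A) P(B)}{P(B)}$$

From there:

$$P(A \mid B) = P(A)$$

Therefore, if $A \perp B$, the realization of $B$ doesn’t change the probability that $A$ will happen and vice versa.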

For instance, if we flip a coin and get a head, the chances for the second toss to result in either side remain unaffected by the first outcome.
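The coin example can also be checked empirically. The following is a minimal simulation sketch, assuming a fair coin modeled with Python's `random` module; the function name `estimate`, the number of trials, and the seed are arbitrary choices made for illustration.

```python
import random

def estimate(n_trials=100_000, seed=0):
    """Estimate P(second = heads) and P(second = heads | first = heads)."""
    rng = random.Random(seed)  # fixed seed so the run is reproducible
    first_heads = 0
    second_heads = 0
    both_heads = 0
    for _ in range(n_trials):
        first = rng.random() < 0.5    # True means heads on the first toss
        second = rng.random() < 0.5   # True means heads on the second toss
        first_heads += first
        second_heads += second
        both_heads += first and second
    p_second = second_heads / n_trials
    p_second_given_first = both_heads / first_heads
    return p_second, p_second_given_first

p_second, p_second_given_first = estimate()
print(f"P(second = H)             ~ {p_second:.3f}")
print(f"P(second = H | first = H) ~ {p_second_given_first:.3f}")
```

Both estimates should land close to 0.5, up to sampling noise, which is what independence of the two tosses predicts.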

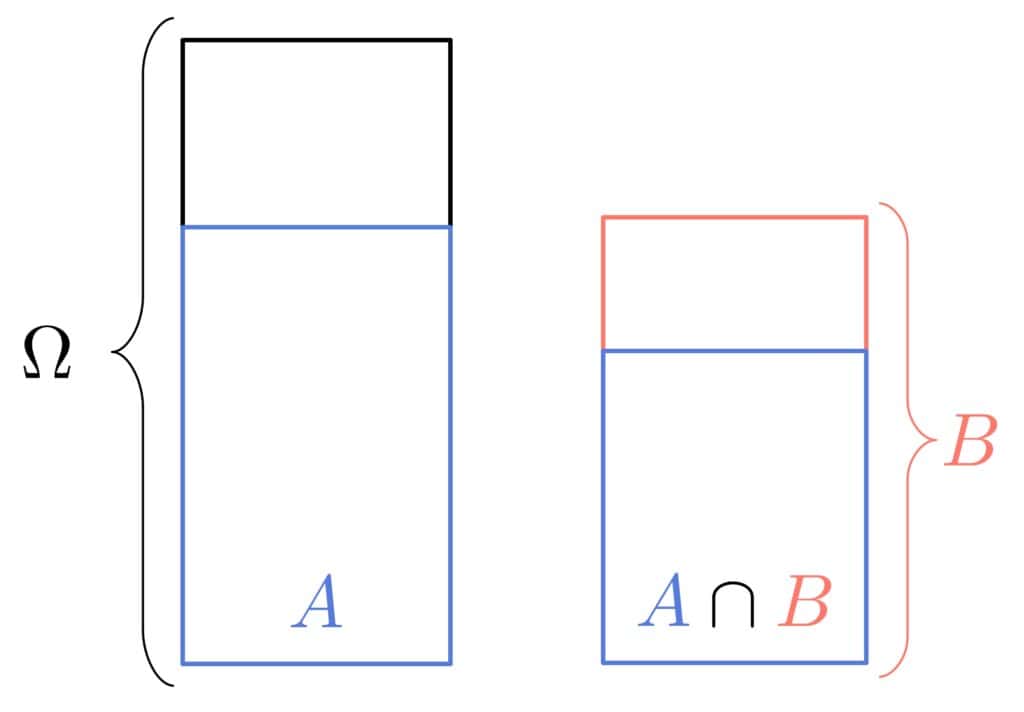

Let’s visualize the probabilities in question as surfaces. If $A \perp B$, the proportion of the surface that $A$ takes in the area of $B$ should be the same as the proportion $A$ takes in the entire event space $\Omega$:

$$\frac{P(A \cap B)}{P(B)} = \frac{P(A)}{P(\Omega)} = P(A)$$

There are two notions of independence for multiple events.

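We say that events $A_1, A_2, \ldots, A_n$ are pairwise independent if any two events $A_i$ and $A_j$ ($i \neq j$) are independent of one another in the sense of Equation (1):

$$P(A_i \cap A_j) = P(A_i) P(A_j) \quad \text{for all } i \neq j \tag{2}$$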

However, that doesn’t mean that the probabilities of intersections of three or more events factor into the product of the individual events’ probabilities.

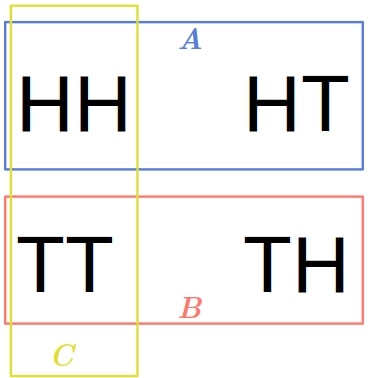

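Here’s a classical example illustrating this. If we toss a coin two times, we have four possible outcomes: $\Omega = \{HH, HT, TH, TT\}$. Let’s define three events, for instance:

$$A = \{HH, HT\} \text{ (the first toss is heads)}, \quad B = \{HH, TH\} \text{ (the second toss is heads)}, \quad C = \{HT, TH\} \text{ (exactly one toss is heads)}$$

Assuming that the coin is fair, all the outcomes are equally likely, so $P(A) = P(B) = P(C) = \frac{1}{2}$.

Further:

$$P(A \cap B) = P(\{HH\}) = \frac{1}{4} = P(A) P(B)$$

$$P(A \cap C) = P(\{HT\}) = \frac{1}{4} = P(A) P(C)$$

$$P(B \cap C) = P(\{TH\}) = \frac{1}{4} = P(B) P(C)$$

As we see, the events are pairwise independent. However:

$$P(A \cap B \cap C) = P(\emptyset) = 0 \neq \frac{1}{8} = P(A) P(B) P(C)$$

So, $A$ isn’t independent of $B \cap C$, $B$ of $A \cap C$, and $C$ of $A \cap B$. That’s what we have mutual independence for.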
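The two-toss example can be verified by brute-force enumeration. The sketch below assumes one concrete choice of the three events (first toss is heads, second toss is heads, exactly one toss is heads); the names `OMEGA`, `prob`, `pairwise_ok`, and `triple_ok` are illustrative.

```python
from fractions import Fraction
from itertools import combinations, product

# All outcomes of two tosses of a fair coin: HH, HT, TH, TT.
OMEGA = {"".join(tosses) for tosses in product("HT", repeat=2)}

def prob(event):
    """Probability of an event under equally likely outcomes."""
    return Fraction(len(event), len(OMEGA))

# Assumed concrete events for the illustration.
A = {o for o in OMEGA if o[0] == "H"}    # first toss is heads
B = {o for o in OMEGA if o[1] == "H"}    # second toss is heads
C = {o for o in OMEGA if o[0] != o[1]}   # exactly one toss is heads

# Product rule for every pair of events.
pairwise_ok = all(
    prob(x & y) == prob(x) * prob(y) for x, y in combinations([A, B, C], 2)
)
# Product rule for the intersection of all three events.
triple_ok = prob(A & B & C) == prob(A) * prob(B) * prob(C)

print(pairwise_ok)  # True
print(triple_ok)    # False: the triple intersection is empty, so 0 != 1/8
```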

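Events $A_1, A_2, \ldots, A_n$ are mutually independent if any event is independent of any intersection of other events from the set.

So, we want the following to hold for any $i \in \{1, 2, \ldots, n\}$ and $J \subseteq \{1, 2, \ldots, n\}$ such that $J \neq \emptyset$ and $j \neq i$ for any $j \in J$:

$$P\left(A_i \cap \bigcap_{j \in J} A_j\right) = P(A_i) \, P\left(\bigcap_{j \in J} A_j\right)$$

But, this also needs to hold for all the events and intersections in $\{A_j : j \in J\}$. So, we can write the condition compactly as:

$$P\left(\bigcap_{i \in I} A_i\right) = \prod_{i \in I} P(A_i) \quad \text{for every } I \subseteq \{1, 2, \ldots, n\} \text{ with } |I| \geq 2 \tag{3}$$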

Therefore, mutually independent events are also pairwise independent.
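A direct way to test mutual independence on a finite, equally likely sample space is to check the product rule for every sub-collection of two or more events. The sketch below does exactly that; the function name `mutually_independent` and the three-toss example are assumptions chosen for illustration. Since the number of sub-collections grows exponentially with the number of events, this is only practical for small sets.

```python
from fractions import Fraction
from itertools import combinations, product

def prob(event, omega):
    """Probability of an event under equally likely outcomes in omega."""
    return Fraction(len(set(event) & set(omega)), len(omega))

def mutually_independent(events, omega):
    """Check the product rule for every sub-collection of at least two events."""
    for size in range(2, len(events) + 1):
        for subset in combinations(events, size):
            joint = set(omega).intersection(*subset)
            expected = Fraction(1)
            for event in subset:
                expected *= prob(event, omega)
            if prob(joint, omega) != expected:
                return False
    return True

# Three tosses of a fair coin (assumed example).
omega = {"".join(t) for t in product("HT", repeat=3)}
heads = [{o for o in omega if o[i] == "H"} for i in range(3)]
differ = {o for o in omega if o[0] != o[1]}  # first two tosses differ

print(mutually_independent(heads, omega))                       # True
print(mutually_independent([heads[0], heads[1], differ], omega))  # False
```

The second call fails only at the triple intersection, so that collection is pairwise but not mutually independent.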

Since conditional probability is also a probability, there’s also conditional independence:

$$P(A \cap B \mid C) = P(A \mid C) P(B \mid C) \tag{4}$$

If the above holds for events $A$, $B$, and $C$, we say that $A$ and $B$ are conditionally independent given $C$.

Intuitively, that means that if $C$ happened, the realization of $B$ doesn’t affect the chance of $A$ occurring. Or, in line with Bayesianism, any information on $B$ reveals nothing about $A$ provided that we know $C$ has happened.
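To see that conditional independence and plain independence are different properties, consider a hypothetical setup: we pick one of two coins at random (a fair one or one that lands heads with probability 9/10) and toss it twice. The sketch below, with all names and numbers assumed for illustration, shows that the two tosses are conditionally independent given the chosen coin but not independent overall.

```python
from fractions import Fraction
from itertools import product

# Assumed heads probabilities of the two coins; each coin is picked with probability 1/2.
P_HEADS = {"fair": Fraction(1, 2), "biased": Fraction(9, 10)}

# Probability of every outcome (coin, first toss, second toss).
weights = {}
for coin, t1, t2 in product(P_HEADS, "HT", "HT"):
    p = P_HEADS[coin]
    p1 = p if t1 == "H" else 1 - p
    p2 = p if t2 == "H" else 1 - p
    weights[(coin, t1, t2)] = Fraction(1, 2) * p1 * p2

def prob(event):
    """Probability of an event given as a predicate over outcomes."""
    return sum((w for o, w in weights.items() if event(o)), Fraction(0))

def cond_prob(event, given):
    """Conditional probability P(event | given)."""
    return prob(lambda o: event(o) and given(o)) / prob(given)

def A(o): return o[1] == "H"        # first toss is heads
def B(o): return o[2] == "H"        # second toss is heads
def C(o): return o[0] == "biased"   # the biased coin was picked
def A_and_B(o): return A(o) and B(o)

cond_indep = cond_prob(A_and_B, C) == cond_prob(A, C) * cond_prob(B, C)
uncond_indep = prob(A_and_B) == prob(A) * prob(B)

print(cond_indep)    # True:  81/100 == 9/10 * 9/10
print(uncond_indep)  # False: 53/100 != 7/10 * 7/10
```

Without knowing which coin was picked, a first head makes the biased coin more likely and therefore raises the chance of a second head.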

We can use the above notions of independence to define the independence of random variables. Since the same reasoning holds for pairwise and mutually independent random variables, we’ll focus on the independence of only two variables.

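Variables $X$ and $Y$ are stochastically independent if the events $\{X \leq x\}$ and $\{Y \leq y\}$ are independent for any $x$ and $y$.

If $F_X$ and $F_Y$ denote the variables’ cumulative distribution functions, and $F_{X,Y}$ their joint CDF, the definition comes down to:

$$F_{X,Y}(x, y) = F_X(x) F_Y(y) \quad \text{for all } x, y \tag{5}$$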
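For discrete variables, the CDF criterion can be checked exhaustively, because the CDFs are step functions and it's enough to test the points of the variables' supports. The sketch below assumes two fair dice; the variables `X`, `Y`, and `S` (the sum) and the helper `cdf_factorizes` are illustrative names.

```python
from fractions import Fraction
from itertools import product

# Equally likely outcomes of rolling two fair dice (assumed example).
outcomes = list(product(range(1, 7), repeat=2))

def cdf_factorizes(var_u, var_v):
    """Check whether the joint CDF equals the product of the marginal CDFs on the supports."""
    n = len(outcomes)
    u_values = sorted({var_u(o) for o in outcomes})
    v_values = sorted({var_v(o) for o in outcomes})
    for u, v in product(u_values, v_values):
        f_u = Fraction(sum(var_u(o) <= u for o in outcomes), n)
        f_v = Fraction(sum(var_v(o) <= v for o in outcomes), n)
        f_uv = Fraction(sum(var_u(o) <= u and var_v(o) <= v for o in outcomes), n)
        if f_uv != f_u * f_v:
            return False
    return True

def X(o): return o[0]          # value of the first die
def Y(o): return o[1]          # value of the second die
def S(o): return o[0] + o[1]   # sum of both dice

print(cdf_factorizes(X, Y))  # True:  the two dice are independent
print(cdf_factorizes(X, S))  # False: the sum depends on the first die
```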

The notions of independence in everyday language differ from those in probability and statistics.

Non-statisticians and non-mathematicians usually understand the independence of events $A$ and $B$ to mean they are completely unrelated. For example, when flipping a coin two times, people could say that the two outcomes aren’t independent because the same coin is used both times.

In contrast, a statistician would argue that the outcome of one flip doesn’t affect the realization of the other. So they would consider the two events statistically independent.

In this article, we explained the statistical independence of events and random variables.