Last updated: December 10, 2022

In this tutorial, we’re going to explain what simultaneous localization and mapping (SLAM) is, and why we need it. It’s quite an interesting subject since it spans several research domains, such as computer vision and sensor technology. It’s also a popular research topic for self-driving vehicles. We’ll also briefly mention how it works and what techniques can be used to implement it.

SLAM is the technique of estimating a map of the environment while, at the same time, localizing the robot and its sensors within the map that is being built. It’s one of the essential capabilities that mobile robots need whenever they move into unknown or partially unknown environments. SLAM algorithms pave the way for mapping out such environments. Engineers can then use the map information to carry out other tasks, such as obstacle avoidance and route planning.

In robotics, SLAM has been investigated since the beginning of the 1990s. The reasoning is that before we can make robots do something useful, we first have to let them figure out where they are and what their limitations are. SLAM plays a significant role in this.

Mapping and localization can also be solved as individual tasks, but doing both at once is what makes SLAM difficult. In the early research on SLAM, researchers thought that constructing a map of the area a robot wanders through while localizing the robot in that same map wasn’t really possible. Therefore, they called this the chicken-and-egg problem. Today, however, good approximate solutions can handle this complex algorithmic problem.

In this context, it’s crucial to keep in mind that SLAM isn’t just one technological advancement or one system. Rather, SLAM is a far more general idea with practically endless variations. It can be utilized in a robot cleaner as well as a self-driving car, as can be seen in the figure above. The important thing is that a SLAM-based system may be built using a variety of software programs and algorithms. The right choice depends on the surrounding environment, the use case, and the other technologies at play.

As mentioned before, researchers have been working on SLAM for many years. With the improvements in computing and the availability of low-cost sensors such as cameras and laser range finders, SLAM has become practical to use in many applications across different domains.

When we look at reviews of vacuum cleaner robots before we buy one, we can see that some of them aren’t able to map the environment and therefore can’t clean it properly. That’s where SLAM and Light Detection and Ranging (LiDAR) come into play. The performance differences between vacuum cleaners mostly depend on their sensors and the SLAM technology that they have.

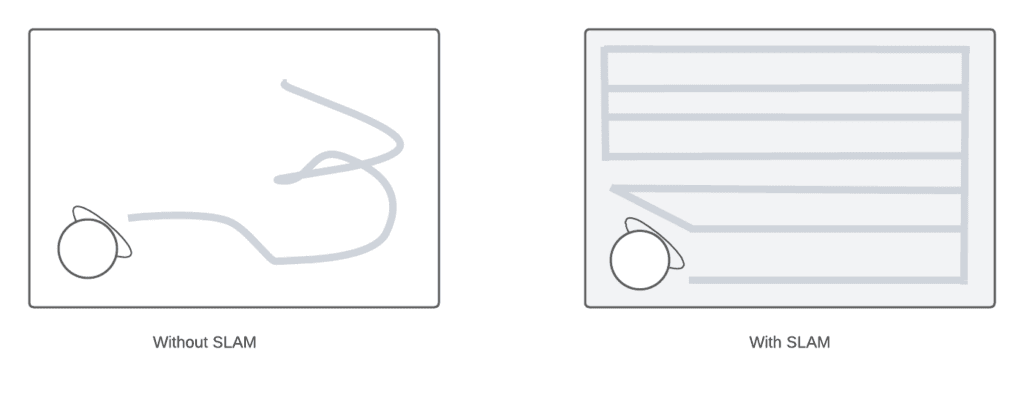

When robots don’t have SLAM technology, they aren’t able to map the environment and localize themselves, so they may abort cleaning tasks before completing them. Of course, other sensors and cameras also have an important role in localization and mapping. We can see the difference between a robot without SLAM and a robot with SLAM in the figure below:

Engineers also use SLAM in other domains, such as navigating mobile robots in a warehouse, parking a self-driving car in an empty spot, or delivering packages with robots that navigate an unknown environment. In addition, object tracking, path planning, and path finding can be implemented along with the SLAM algorithms in an application.

Let’s look at how SLAM works. We can divide the technologies used in SLAM into two parts: the front-end and the back-end. The front-end mostly depends on the sensors used, such as cameras, distance sensors, and LiDAR sensors. The back-end, on the other hand, includes pose-graph optimization.
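To make the back-end idea more concrete, here’s a minimal sketch of pose-graph optimization in one dimension. It’s only an illustration under simplifying assumptions: the robot moves along a line, every measurement has equal weight, and we use plain gradient descent. The function name `optimize_pose_graph` and the edge format `(i, j, measured_offset)` are hypothetical and don’t come from any particular SLAM library:

```python
def optimize_pose_graph(num_poses, edges, iterations=500, step=0.1):
    """Least-squares estimate of 1D poses; pose 0 is anchored at 0.0.

    Each edge (i, j, measured) states that pose j was measured to lie
    `measured` units ahead of pose i.
    """
    poses = [0.0] * num_poses
    for _ in range(iterations):
        grad = [0.0] * num_poses
        for i, j, measured in edges:
            # Residual: how far the current estimate is from the measurement.
            residual = (poses[j] - poses[i]) - measured
            grad[j] += 2.0 * residual
            grad[i] -= 2.0 * residual
        # Gradient step on every pose except the anchored first one.
        for k in range(1, num_poses):
            poses[k] -= step * grad[k]
    return poses


# Three odometry edges of 1.0 each, plus one loop-closure style edge
# saying that pose 3 is only 2.7 away from pose 0 (illustrative numbers).
edges = [(0, 1, 1.0), (1, 2, 1.0), (2, 3, 1.0), (0, 3, 2.7)]
poses = optimize_pose_graph(4, edges)
print([round(p, 3) for p in poses])  # approximately [0.0, 0.925, 1.85, 2.775]
```

The odometry alone would place the last pose at 3.0, while the extra edge says 2.7. The optimizer spreads this disagreement evenly over all four edges. Real back-ends work with 2D or 3D poses, weight each measurement by its uncertainty, and use sparse nonlinear solvers instead of gradient descent.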

We can divide the front-end into two categories as well, depending on the sensor type: visual SLAM and LiDAR SLAM.

Visual SLAM or vSLAM utilizes images captured by cameras and other image sensors, as we can guess from its name. It can exploit simple cameras, RGB-D cameras, and compound eye cameras. Utilizing relatively cheap cameras allows for the implementation of visual SLAM at a low cost.

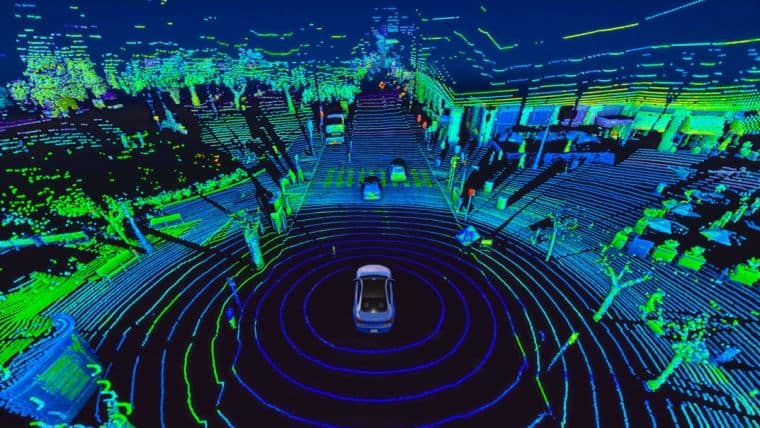

Because cameras provide a lot of information, they may also be utilized to recognize landmarks. In addition, landmark detection can be combined with graph-based optimization to achieve flexibility in the SLAM implementation. We can see an example of a vSLAM 3D point cloud map generated by JdeRobot, the non-profit Association of Robotics and Artificial Intelligence:

Light detection and ranging (LiDAR) is a technique that typically uses a laser sensor to measure distances. Laser sensors are generally more precise than camera sensors. For example, vacuum cleaner robots with LiDAR are generally more successful at creating maps. Researchers and engineers use this technique not only in vacuum cleaners but also when designing self-driving cars and drones.

Laser sensors often provide point clouds in 2D or 3D. For the purpose of creating maps using SLAM, the laser sensor point cloud offers highly accurate distance measurements. In principle, movement is calculated by matching consecutive point clouds against each other. The robot then uses the calculated movement to localize itself.
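As a rough illustration of matching two consecutive scans, the sketch below implements a basic 2D version of the iterative closest point idea in plain Python. It assumes small motion between scans, no noise, and brute-force nearest-neighbor search. The helper names (`best_rigid_transform`, `apply_transform`, `icp`) and the sample scans are our own and aren’t taken from any library:

```python
import math


def best_rigid_transform(source, target):
    """Closed-form 2D rotation and translation that best maps paired points."""
    n = len(source)
    sx = sum(p[0] for p in source) / n
    sy = sum(p[1] for p in source) / n
    tx = sum(q[0] for q in target) / n
    ty = sum(q[1] for q in target) / n
    dot = cross = 0.0
    for (px, py), (qx, qy) in zip(source, target):
        ax, ay = px - sx, py - sy  # source point relative to its centroid
        bx, by = qx - tx, qy - ty  # target point relative to its centroid
        dot += ax * bx + ay * by
        cross += ax * by - ay * bx
    theta = math.atan2(cross, dot)
    c, s = math.cos(theta), math.sin(theta)
    return theta, (tx - (c * sx - s * sy), ty - (s * sx + c * sy))


def apply_transform(points, theta, t):
    """Rotate points by theta, then translate them by t."""
    c, s = math.cos(theta), math.sin(theta)
    return [(c * x - s * y + t[0], s * x + c * y + t[1]) for x, y in points]


def icp(source, target, iterations=20):
    """Estimate the rigid transform that aligns source onto target."""
    theta_total, t_total = 0.0, (0.0, 0.0)
    current = list(source)
    for _ in range(iterations):
        # Pair every source point with its closest target point.
        matched = [
            min(target, key=lambda q: (q[0] - p[0]) ** 2 + (q[1] - p[1]) ** 2)
            for p in current
        ]
        theta, t = best_rigid_transform(current, matched)
        current = apply_transform(current, theta, t)
        # Compose this step with the transform accumulated so far.
        c, s = math.cos(theta), math.sin(theta)
        t_total = (c * t_total[0] - s * t_total[1] + t[0],
                   s * t_total[0] + c * t_total[1] + t[1])
        theta_total += theta
    return theta_total, t_total


# Illustrative scans: an L-shaped wall seen twice, the second time
# rotated by 0.1 rad and shifted by (0.2, -0.1).
previous_scan = [(0, 0), (1, 0), (2, 0), (3, 0), (0, 1), (0, 2)]
current_scan = apply_transform(previous_scan, 0.1, (0.2, -0.1))
theta, shift = icp(previous_scan, current_scan)
print(round(theta, 3), round(shift[0], 3), round(shift[1], 3))
```

The recovered transform describes how the scene appears to move between the two scans, and the robot’s own movement follows from it. Practical implementations add outlier rejection, a stopping criterion, and spatial data structures such as k-d trees for the nearest-neighbor search.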

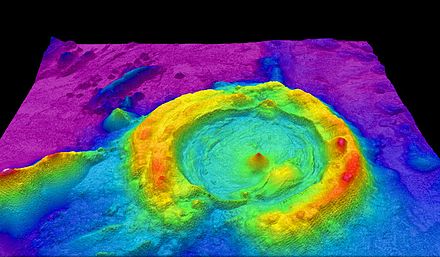

Registration algorithms such as iterative closest point and robust point matching come into play to match the point clouds against each other. After that, researchers generally prefer to use grid maps and voxel maps to represent the point cloud maps. We can see an example of a 3D point cloud map generated using a drone with a LiDAR sensor on it.
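To illustrate how a voxel map can represent a point cloud, here’s a small sketch that groups 3D points into cubic cells and counts the points per cell. The function name `voxelize`, the voxel size, and the sample points are assumptions made for this example only:

```python
import math
from collections import Counter


def voxelize(points, voxel_size):
    """Map each 3D point to the index of the cubic cell that contains it."""
    counts = Counter()
    for x, y, z in points:
        key = (
            math.floor(x / voxel_size),
            math.floor(y / voxel_size),
            math.floor(z / voxel_size),
        )
        counts[key] += 1
    return counts


# Illustrative point cloud with coordinates in meters.
cloud = [(0.1, 0.1, 0.1), (0.2, 0.3, 0.4), (1.2, 0.1, 0.0), (-0.1, 0.0, 0.0)]
voxels = voxelize(cloud, voxel_size=0.5)
print(sorted(voxels.items()))
# [((-1, 0, 0), 1), ((0, 0, 0), 2), ((2, 0, 0), 1)]
```

Storing only the occupied cells keeps the map compact even when the raw cloud contains many points. A 2D grid map follows the same idea with two indices instead of three.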

In this article, we’ve explained what SLAM is and why we need it. It has a wide range of applications, from vacuum cleaners to self-driving cars, and from medicine to drones. As the cost of sensors decreases, the practical use of SLAM will probably continue to increase. We’ve also briefly covered how it works and which methods researchers use to implement it.