1. Overview

In this tutorial, we’ll introduce self-supervised learning. First, we’ll define the term and discuss its importance in machine learning. Then, we’ll present some examples of self-supervised learning and some of its limitations.

2. Preliminaries

Over the past few years, machine learning has revolutionized many aspects of our lives, with applications ranging from self-driving cars to predicting deadly diseases. A crucial factor in this progress is the availability of massive amounts of carefully labeled data.

However, there is a limit to how far machine learning can go with supervised learning and labeled data alone. For example, there are many tasks, such as translation for low-resource languages, where annotating a lot of data is difficult or expensive. Therefore, to bring AI closer to human-level intelligence, we should focus on methods that train a model without annotations. One of the most promising of these methods is self-supervised learning.

3. Definition

The idea behind self-supervised learning is to first learn general feature representations from unlabeled data and then fine-tune these representations on downstream tasks using only a few labels. The question that arises is how to learn these useful representations without knowing the labels.

In self-supervised learning, the model is not trained using a label as a supervision signal but using the data itself. For example, a common self-supervised method is to train a model to predict a hidden part of the input given an observed part of the input.
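To make the "predict the hidden part from the observed part" idea concrete, here is a minimal sketch in plain Python. The function name `make_hidden_part_example`, the `hidden_fraction` parameter, and the use of `None` as a placeholder are assumptions made for illustration only. The point is that the training target is cut out of the input itself, so no annotation is involved:

```python
import random


def make_hidden_part_example(sequence, hidden_fraction=0.25, rng=None):
    """Split one unlabeled sequence into an (observed, target) training pair.

    Hypothetical helper: the "label" is simply the part of the input
    that we chose to hide.
    """
    rng = rng or random.Random()
    n_hidden = max(1, int(len(sequence) * hidden_fraction))
    hidden_positions = set(rng.sample(range(len(sequence)), n_hidden))

    # Observed input: hidden positions are replaced by a placeholder (None).
    observed = [None if i in hidden_positions else value
                for i, value in enumerate(sequence)]
    # Target: the original values at the hidden positions.
    target = {i: sequence[i] for i in sorted(hidden_positions)}
    return observed, target


observed, target = make_hidden_part_example([3, 1, 4, 1, 5, 9, 2, 6])
print(observed)  # the input the model would see
print(target)    # what the model would be trained to predict
```

A model trained on such pairs would receive `observed` and be penalized for how far its predictions are from `target`.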

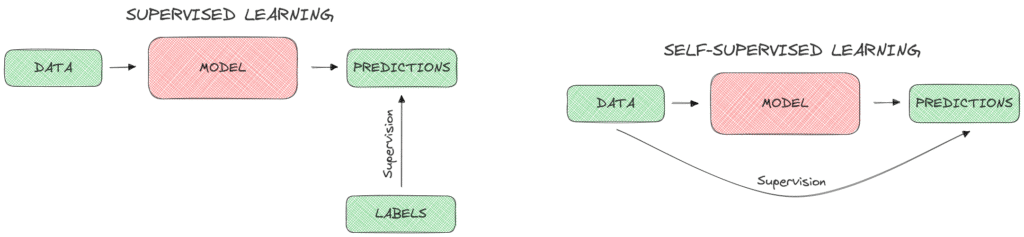

Below, we can see diagrammatically how a supervised (left) and a self-supervised (right) model works:

4. Importance

More and more self-supervised models that aim to replace purely supervised ones are being proposed. Self-supervision matters to the progress of machine learning for several reasons.

First, self-supervised learning reduces the need to annotate large amounts of data, which saves time and money. It also enables the use of machine learning in areas where annotation is difficult or even impossible. Another factor is the vast amount of unlabeled data that is available everywhere. Due to the extensive use of the internet and social media, there is a huge number of images, videos, and audio clips that can be used to train self-supervised machine learning models.

5. Examples

Now, let’s present some examples of self-supervised learning to better illustrate how it works.

5.1. Visual

Many self-supervised methods have been proposed for learning better visual representations. As we’ll see below, there are many ways to manipulate an input image in order to generate a pseudo-label.

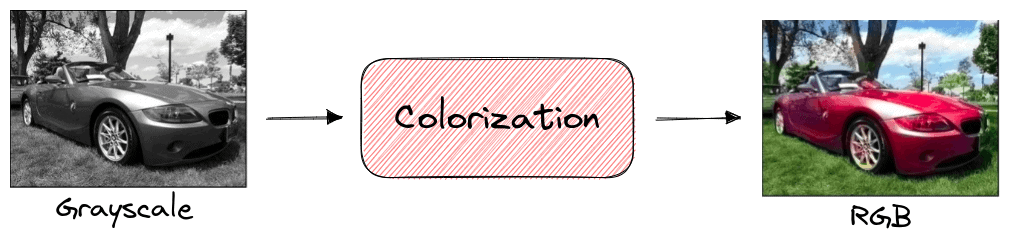

In image colorization, a self-supervised model is trained to color a grayscale input image. In a previous tutorial we have presented how to convert an RGB image to grayscale. So, during training, there is no need for labels since the same image can be used in its grayscale and RGB format. After training, the learned feature representation has captured the important semantic characteristics of the image and can then be used in other downstream tasks like classification or segmentation:
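As a rough sketch of how a colorization training pair can be built without labels, the snippet below represents an image as rows of `(r, g, b)` tuples and uses the common luma weights 0.299, 0.587, and 0.114 for the grayscale conversion. The function names and the list-based image format are assumptions chosen to keep the example free of dependencies; a real pipeline would typically use an array or image library:

```python
def to_grayscale(rgb_image):
    """Convert an image given as rows of (r, g, b) tuples to grayscale.

    Uses the common luma weights 0.299, 0.587, 0.114.
    """
    return [[round(0.299 * r + 0.587 * g + 0.114 * b) for (r, g, b) in row]
            for row in rgb_image]


def make_colorization_pair(rgb_image):
    """Return (model_input, target): the grayscale image and the original."""
    return to_grayscale(rgb_image), rgb_image


# A tiny 2x2 RGB image: red, green, blue, and white pixels.
rgb = [[(255, 0, 0), (0, 255, 0)],
       [(0, 0, 255), (255, 255, 255)]]
gray_input, color_target = make_colorization_pair(rgb)
print(gray_input)
```

The original RGB image plays the role of the label, so every unlabeled color image yields one training example.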

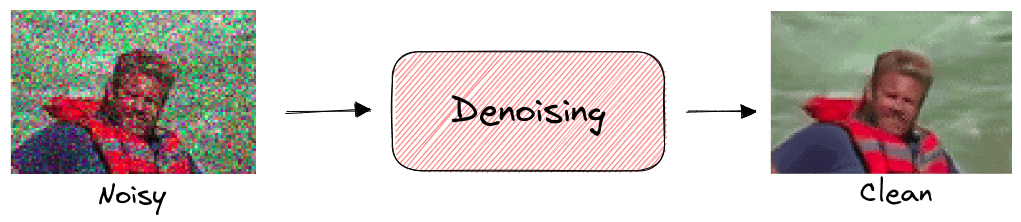

Another task that is common in self-supervision is denoising where the model learns to recover an image from a corrupted or noisy version. The model can be trained in a dataset without labels since we can easily add any type of image noise in the input image:
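The following sketch shows one way to generate denoising pairs. It assumes a grayscale image stored as rows of integers in the range 0 to 255 and uses additive Gaussian noise; both the representation and the helper names are illustrative assumptions, and any other noise type could be swapped in:

```python
import random


def add_gaussian_noise(gray_image, sigma=25.0, rng=None):
    """Return a noisy copy of a grayscale image (rows of ints in 0..255)."""
    rng = rng or random.Random()
    noisy = []
    for row in gray_image:
        # Add zero-mean Gaussian noise to each pixel and clamp to 0..255.
        noisy.append([min(255, max(0, round(pixel + rng.gauss(0.0, sigma))))
                      for pixel in row])
    return noisy


def make_denoising_pair(gray_image, sigma=25.0, rng=None):
    """Return (model_input, target): the noisy image and the clean original."""
    return add_gaussian_noise(gray_image, sigma, rng), gray_image


clean = [[0, 128, 255],
         [64, 64, 64]]
noisy_input, clean_target = make_denoising_pair(clean, sigma=10.0)
print(noisy_input)
```

Because the noise is sampled fresh on every call, the same clean image can produce many different training inputs.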

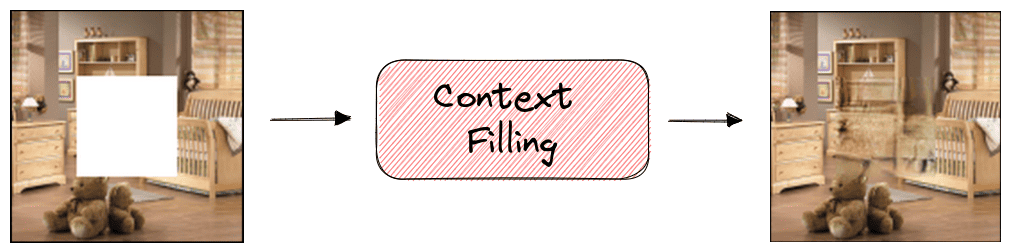

In image inpainting, our goal is to reconstruct the missing regions in an image. Specifically, the model takes as input an image that contains some missing pixels and tries to fill these pixels so as to keep the context of the image consistent. Self-supervision can be applied here since we can just crop random parts of every image to generate the training set:
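A training example for inpainting can be sketched as follows, again assuming a grayscale image stored as rows of integers. The helper `make_inpainting_example` and its parameters are hypothetical; it blanks out one random rectangle and also returns a mask, so that a loss could be computed only on the removed pixels:

```python
import random


def make_inpainting_example(gray_image, region_height, region_width,
                            fill_value=0, rng=None):
    """Blank out one random rectangle of the image.

    Returns (masked_image, mask, target), where mask[y][x] is True for
    every removed pixel and target is the untouched original image.
    """
    rng = rng or random.Random()
    height, width = len(gray_image), len(gray_image[0])
    top = rng.randint(0, height - region_height)
    left = rng.randint(0, width - region_width)

    masked = [row[:] for row in gray_image]  # copy, keep the original intact
    mask = [[False] * width for _ in range(height)]
    for y in range(top, top + region_height):
        for x in range(left, left + region_width):
            masked[y][x] = fill_value
            mask[y][x] = True
    return masked, mask, gray_image


image = [[10, 20, 30, 40, 50],
         [11, 21, 31, 41, 51],
         [12, 22, 32, 42, 52],
         [13, 23, 33, 43, 53]]
masked_input, mask, target = make_inpainting_example(image, 2, 3)
for row in masked_input:
    print(row)
```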

5.2. Audio-Visual

Self-supervised learning can also be applied in audio-visual tasks like finding audio-visual correspondence.

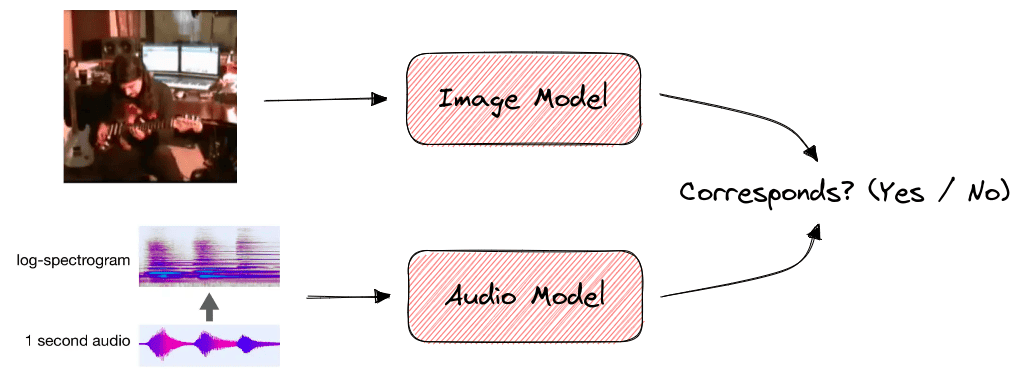

In a video clip, we know that audio and visual events tend to occur together like a musician plucking guitar strings and the resulting melody. We can learn the relationship between visual and audio events by training a model for audio-visual correspondence. Specifically, the model takes as input a video and an audio clip and decides if the two clips correspond to the same event. Since both the audio and visual modality of a video are available beforehand, there is no need for labels:
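One simple way to build training pairs for audio-visual correspondence is sketched below. It assumes each unlabeled clip is available as a `(video, audio)` tuple; positives keep the two parts of the same clip together, and negatives pair a video with the audio of a different clip. The function name and the one-negative-per-clip choice are assumptions for illustration:

```python
import random


def make_av_correspondence_pairs(clips, rng=None):
    """Build (video, audio, label) examples from unlabeled clips.

    clips is a list of (video, audio) tuples. The label is 1 when the
    audio belongs to the video and 0 when it comes from another clip.
    """
    if len(clips) < 2:
        raise ValueError("need at least two clips to build negative pairs")
    rng = rng or random.Random()
    examples = []
    for i, (video, audio) in enumerate(clips):
        # Positive pair: both modalities come from the same clip.
        examples.append((video, audio, 1))
        # Negative pair: audio taken from a different, randomly chosen clip.
        j = rng.choice([k for k in range(len(clips)) if k != i])
        examples.append((video, clips[j][1], 0))
    rng.shuffle(examples)
    return examples


clips = [("video_0", "audio_0"), ("video_1", "audio_1"), ("video_2", "audio_2")]
for video, audio, label in make_av_correspondence_pairs(clips):
    print(video, audio, label)
```

The strings here stand in for real frame and waveform data; the labels come entirely from knowing which audio track shipped with which video.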

5.3. Text

When training a language model, it is challenging to define a prediction goal that leads to rich word representations. Self-supervised learning is widely used in the training of large-scale language models like BERT.

To learn general word representations without labels, BERT uses two self-supervised training strategies:

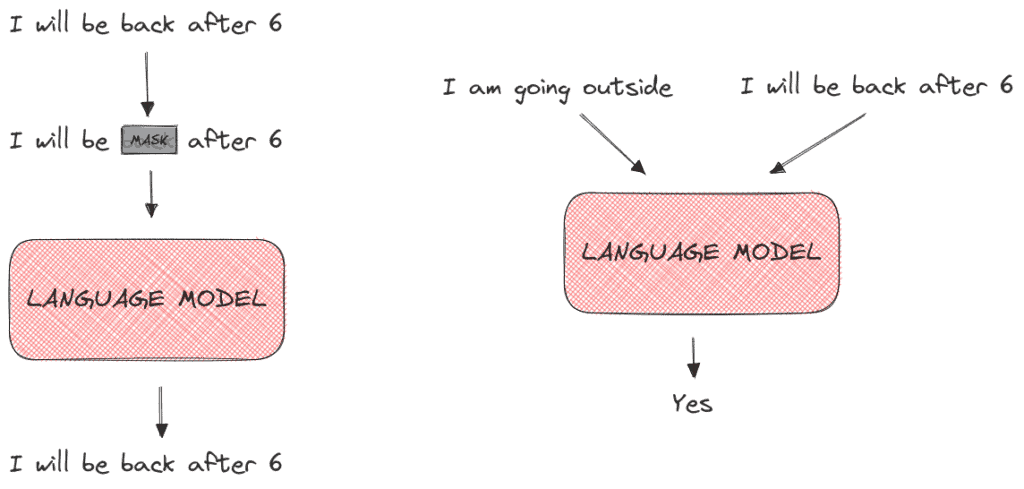

- MaskedLM where we hide some words from the input sentence and train the language model to predict these hidden words.

- Next Sentence Prediction where the model takes as input a pair of sentences and learns their relationship (if the second sentence comes after the first sentence).

Below, we can see an example of the MaskedLM (left) and the Next Sentence Prediction (right) training objectives:
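Both objectives can be turned into training data with a few lines of code. The sketch below is a simplification under stated assumptions: tokens are whitespace-split words, every selected token is replaced by `[MASK]` (BERT itself sometimes keeps the token or substitutes a random one), the default masking rate of 15% follows the BERT setup, and negative sentence pairs are drawn from the same short document rather than from a large corpus. The helper names are hypothetical:

```python
import random

MASK = "[MASK]"


def make_masked_lm_example(tokens, mask_prob=0.15, rng=None):
    """Hide some tokens and return (masked_tokens, targets).

    targets maps each masked position to the original token.
    """
    rng = rng or random.Random()
    n_masked = max(1, round(len(tokens) * mask_prob))
    positions = sorted(rng.sample(range(len(tokens)), n_masked))
    masked = list(tokens)
    targets = {}
    for pos in positions:
        targets[pos] = tokens[pos]
        masked[pos] = MASK
    return masked, targets


def make_nsp_examples(sentences, rng=None):
    """Build (sentence_a, sentence_b, is_next) triples from one document."""
    if len(sentences) < 3:
        raise ValueError("need at least three sentences to sample negatives")
    rng = rng or random.Random()
    examples = []
    for i in range(len(sentences) - 1):
        if rng.random() < 0.5:
            # Positive: the sentence that really follows sentence i.
            examples.append((sentences[i], sentences[i + 1], True))
        else:
            # Negative: any sentence other than sentence i and its successor.
            candidates = [s for k, s in enumerate(sentences)
                          if k not in (i, i + 1)]
            examples.append((sentences[i], rng.choice(candidates), False))
    return examples


tokens = "the cat sat on the mat and looked at the dog".split()
print(make_masked_lm_example(tokens))

document = ["I went to the store.", "I bought some milk.",
            "Then I walked home.", "It started to rain."]
for example in make_nsp_examples(document):
    print(example)
```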

6. Limitations

Despite its powerful capabilities, self-supervised learning presents some limitations that we should always take into account.

6.1. Training Time

The time and computing power needed to train machine learning models, along with the resulting environmental impact, are already a subject of debate. Training a model without the supervision of real annotations can be even more time-consuming. So, we should always weigh this extra training time against the time it would take to simply annotate the dataset and use a supervised learning method.

6.2. Accuracy of Labels

In self-supervised learning, we generate pseudo-labels for our models instead of using real labels. In some cases, these pseudo-labels are inaccurate, which hurts the overall performance of the model. So, we should always check the quality of the generated pseudo-labels.

7. Conclusion

In this tutorial, we talked about self-supervised learning. First, we defined the term and talked about how useful it is for the area of machine learning. Then, we presented some practical examples of self-supervised learning and some of its limitations.