Last updated: May 4, 2020

In this tutorial, we’ll look at how to integrate Play Framework and Akka Actors with the Lagom Framework. We’ll also see why such integration is needed and the possible ways to achieve it.

Also, we’ll build a sample Lagom-based application to demonstrate the integrations.

Lagom is a highly opinionated framework for building flexible, resilient, and responsive systems in Java and Scala.

It’s an open-source framework maintained by Lightbend. It offers libraries and development environments to build systems based on reactive microservices with best practices.

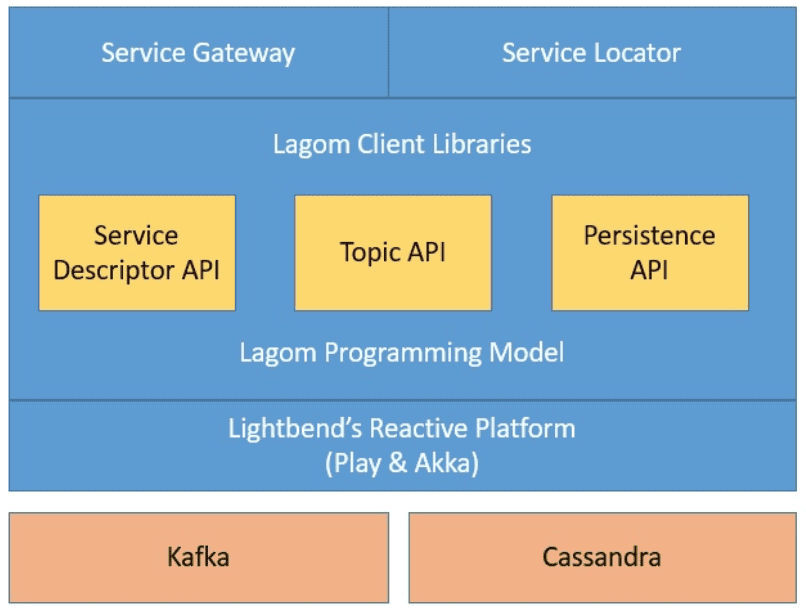

Lagom supports multiple aspects from development to deployment by leveraging other reactive frameworks from Lightbend, such as Play and Akka.

We design microservices to be isolated and autonomous, with loosely coupled communication between them. Lagom facilitates synchronous or asynchronous communication through HTTP or WebSocket. Further, it also offers message-based communication through a broker like Kafka, providing at-least-once delivery semantics.

We also design microservices to own their data exclusively and have direct control over it; this draws from the principles of Bounded Context. Lagom facilitates data persistence through well-known design patterns like Event Sourcing and CQRS. Lagom persists the event stream in the database through asynchronous APIs. The default database in Lagom is Cassandra.

Furthermore, other critical parts in developing loosely coupled microservices are service discovery and service gateway. We require them to provide location transparency in the way services communicate with each other and external clients.

A service locator is embedded in Lagom’s development environment, which allows services to discover and communicate with each other. There’s a Service Gateway embedded as well, to allow external clients to connect to the Lagom services.

To explore the options to integrate Lagom with either Play or Akka, we first need a working example. In this section, we’ll build a simple microservice-based application leveraging the Lagom Framework.

Further, we’ll use this example to understand ways to integrate Lagom with Play or Akka. Please note that this example is based on the one provided in the standard documentation of Lagom, but it is sufficient to cover the basics that we need.

Typically, Lagom is useful when we have a full-blown microservice architecture that can benefit from the features that it has to offer. However, since the objective of this tutorial is to show how to integrate Play and Akka APIs in Lagom, we’ll keep it simple. We’ll just define a single microservice without any persistence and later expand it with Akka and Play integrations.

Lagom provides APIs in Java and Scala; however, we’ll use Scala for our example in this tutorial. Further, Lagom has an option of using Maven or sbt with Java, but sbt is the only choice with Scala.

The easiest way to bootstrap a Lagom project is to use the starter tool provided by Lagom. Alternatively, we can define the project structure and let sbt generate the bootstrap for us:

    organization in ThisBuild := "com.baeldung"
    version in ThisBuild := "1.0-SNAPSHOT"
    scalaVersion in ThisBuild := "2.13.0"

    val macwire = "com.softwaremill.macwire" %% "macros" % "2.3.3" % "provided"
    val scalaTest = "org.scalatest" %% "scalatest" % "3.1.1" % Test

    lazy val `hello` = (project in file(".")).aggregate(`hello-api`, `hello-impl`)

    lazy val `hello-api` = (project in file("hello-api"))
      .settings(
        libraryDependencies ++= Seq(
          lagomScaladslApi
        )
      )

    lazy val `hello-impl` = (project in file("hello-impl"))
      .enablePlugins(LagomScala)
      .settings(
        libraryDependencies ++= Seq(
          lagomScaladslTestKit,
          macwire,
          scalaTest
        )
      )
      .settings(lagomForkedTestSettings)
      .dependsOn(`hello-api`)

This is a simple project structure that we can define in an sbt build file.

Please note that Lagom suggests defining separate projects for the service interface and its implementation for every microservice. Hence, as we can see, we have a hello-world microservice defined in projects “hello-api” and “hello-impl”. Further, the project “hello-impl” depends on the project “hello-api”.

Beyond these, Lagom offers further dependencies, such as those for Cassandra and Kafka. Note that these are optional, and we may not need them in every microservice.
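The build above relies on keys such as lagomScaladslApi and on the LagomScala plugin, both of which come from Lagom’s sbt plugin. A minimal sketch of the plugin declaration follows; the file location is the usual sbt convention, and the version number is an assumption that should be replaced with the Lagom release in use:

```scala
// project/plugins.sbt
// The version below is an assumption for illustration;
// use the Lagom release that your project targets.
addSbtPlugin("com.lightbend.lagom" % "lagom-sbt-plugin" % "1.6.2")
```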

The first thing we have to do is to define the messages that our service will consume and produce. We also need to ensure that we provide either an implicit or a custom message serializer that Lagom can use to serialize and deserialize request and response messages.

Let’s quickly define the messages:

    import play.api.libs.json.{Format, Json}

    case class Job(jobId: String, task: String, payload: String)

    object Job {
      implicit val format: Format[Job] = Json.format
    }

    case class JobAccepted(jobId: String)

    object JobAccepted {
      implicit val format: Format[JobAccepted] = Json.format
    }

Now let’s understand these messages a little better:
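One way to see what the implicit Format instances provide is to round-trip the messages through Play JSON, the library behind these formats. The sketch below is a minimal illustration, assuming play-json is on the classpath; the JsonRoundTrip object is a hypothetical name used only for this example:

```scala
import play.api.libs.json.{Format, Json}

case class Job(jobId: String, task: String, payload: String)
object Job {
  implicit val format: Format[Job] = Json.format[Job]
}

case class JobAccepted(jobId: String)
object JobAccepted {
  implicit val format: Format[JobAccepted] = Json.format[JobAccepted]
}

// Hypothetical demo object, not part of the service itself
object JsonRoundTrip {
  def main(args: Array[String]): Unit = {
    // Parse a request body like the one a client would post
    val job = Json
      .parse("""{"jobId":"jobId","task":"task","payload":"payload"}""")
      .as[Job]

    // Serialize the response message back to JSON
    val response = Json.stringify(Json.toJson(JobAccepted(job.jobId)))
    println(response) // prints {"jobId":"jobId"}
  }
}
```

The field names of the case classes become the JSON keys, so the shape of the request and response bodies follows directly from the message definitions.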

As we’ve seen, Lagom favors splitting a microservice into a service interface and its implementation. Hence, our next step would be to define the service interface:

    import com.lightbend.lagom.scaladsl.api.{Descriptor, Service, ServiceCall}

    trait HelloService extends Service {

      def submit(): ServiceCall[Job, JobAccepted]

      override final def descriptor: Descriptor = {
        import Service._
        named("hello")
          .withCalls(
            pathCall("/api/submit", submit _)
          ).withAutoAcl(true)
      }
    }

This is a simple Scala trait, known in Lagom as a service descriptor. The service descriptor defines how we can implement and invoke a service.

Let’s understand a few important things here:

Next, we have to provide the implementation for the service interface we’ve just defined. This must include an implementation for each call specified by the descriptor:

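    import com.lightbend.lagom.scaladsl.api.ServiceCall
    import scala.concurrent.{ExecutionContext, Future}

    class HelloServiceImpl()(implicit ec: ExecutionContext)
      extends HelloService {

      override def submit(): ServiceCall[Job, JobAccepted] = ServiceCall {
        job =>
          // The Future must carry a JobAccepted to match the declared response type
          Future[JobAccepted] { JobAccepted(job.jobId) }
      }
    }

This is a basic implementation of the function we defined in the service descriptor. However, there are a few things worth noting: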
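One point that may help here is the difference between building the response with Future { ... } and with Future.successful. The former schedules the body on the implicit ExecutionContext, while the latter wraps an already computed value without scheduling anything. The following sketch uses only the Scala standard library; JobAccepted is redeclared so that the example stands alone, and FutureStyles is a hypothetical name:

```scala
import scala.concurrent.{Await, ExecutionContext, Future}
import scala.concurrent.duration._

final case class JobAccepted(jobId: String)

// Hypothetical demo object comparing two ways to build the response Future
object FutureStyles {
  def main(args: Array[String]): Unit = {
    implicit val ec: ExecutionContext = ExecutionContext.global

    // Body runs asynchronously on the execution context
    val scheduled: Future[JobAccepted] = Future { JobAccepted("job-1") }

    // Value is wrapped directly; the Future is complete from the start
    val immediate: Future[JobAccepted] = Future.successful(JobAccepted("job-1"))

    println(immediate.isCompleted) // prints true
    println(Await.result(scheduled, 1.second) == Await.result(immediate, 1.second)) // prints true
  }
}
```

For a response as cheap as ours, either style is reasonable; Future { ... } becomes more relevant once the call does real work.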

Now, we have to bring the services and their implementations together in a Lagom application. Lagom uses compile-time dependency injection to wire together this Lagom application. Lagom prefers Macwire, which provides lightweight macros that locate dependencies for the components.

Let’s see a quick and easy way to create our LagomApplication:

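    import com.lightbend.lagom.scaladsl.server.{LagomApplication, LagomApplicationContext, LagomServer}
    import com.softwaremill.macwire._
    import play.api.libs.ws.ahc.AhcWSComponents

    abstract class HelloApplication(context: LagomApplicationContext)
      extends LagomApplication(context)
        with AhcWSComponents {

      override lazy val lagomServer: LagomServer =
        serverFor[HelloService](wire[HelloServiceImpl])
    }

Here, some interesting things are happening behind these simple lines: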

Finally, we need to write an application loader such that the application can bootstrap itself. We can do this conveniently in Lagom by extending the LagomApplicationLoader:

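    import com.lightbend.lagom.scaladsl.api.ServiceLocator
    import com.lightbend.lagom.scaladsl.api.ServiceLocator.NoServiceLocator
    import com.lightbend.lagom.scaladsl.devmode.LagomDevModeComponents
    import com.lightbend.lagom.scaladsl.server.{LagomApplication, LagomApplicationContext, LagomApplicationLoader}

    class HelloLoader extends LagomApplicationLoader {

      override def load(context: LagomApplicationContext): LagomApplication =
        new HelloApplication(context) {
          override def serviceLocator: ServiceLocator = NoServiceLocator
        }

      override def loadDevMode(context: LagomApplicationContext): LagomApplication =
        new HelloApplication(context) with LagomDevModeComponents

      override def describeService = Some(readDescriptor[HelloService])
    }

Let’s go over the important things to note in this piece of code: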

It’s possible to configure many different parts of Lagom services through values provided in the configuration file application.conf.

However, for our simple example, the only thing that we need to configure is our application loader:

    play.application.loader = com.baeldung.hello.impl.HelloLoader

Now, we have done all that is required to create a simple working example in Lagom. So, we are finally ready to run the application.

From a command prompt, a single sbt command runs the entire Lagom application:

    sbt runAll

Once the server bootstraps successfully, we should be able to post our jobs to this service using a tool like cURL:

    curl --location --request POST 'http://localhost:9000/api/submit' \
      --header 'Content-Type: application/json' \
      --data-raw '{
        "jobId":"jobId",
        "task":"task",
        "payload":"payload"
      }'

Lagom is implemented on top of the Play framework. While building Lagom services, we don’t have to be aware of this detail, as we have noticed in our example.

However, Play offers a lot of powerful features, and we may need to access them directly. It’s possible to call some of the Play APIs directly from Lagom.

Play is a high-productivity Java and Scala web application framework maintained by Lightbend. It’s based on lightweight, stateless, and web-friendly architecture. The Play framework also provides concise and functional programming patterns. It internally leverages Akka and Akka Stream to provide a reactive model and natural scalability.

We can build web applications and REST services with components provided by Play like an HTTP server, a powerful routing mechanism, and much more. Play is non-opinionated about the database access and is capable of integrating with several persistence tools.

While it’s entirely possible to build a Lagom service from scratch, we may often find ourselves in situations where we need to add Lagom to existing applications. These applications may already be using the Play framework for various use cases. This gives us a powerful tool to leverage in the Lagom services that we define on top of such applications.

Let’s understand some of the typical use cases requiring us to access Play APIs directly from Lagom services:

Let’s see how we can achieve the objective of integrating a simple Play router with Lagom. We’ll begin by defining a simple router in Play:

    import play.api.mvc.{DefaultActionBuilder, PlayBodyParsers, Results}
    import play.api.routing.Router
    import play.api.routing.sird._

    class SimplePlayRouter(action: DefaultActionBuilder, parser: PlayBodyParsers) {
      val router = Router.from {
        case GET(p"/api/play") =>
          action(parser.default) { request =>
            Results.Ok("Response from Play Simple Router")
          }
      }
    }

This is a very simple router that does nothing useful, but it helps us understand how to integrate a Play router with Lagom. Our next step is to wire it into the Lagom application and append it to the Lagom server:

    override lazy val lagomServer: LagomServer =
      serverFor[HelloService](wire[HelloServiceImpl])
        .additionalRouter(wire[SimplePlayRouter].router)

Here, we’re using the wire macro from Macwire to inject our Play router and append it to the Lagom server using the additionalRouter method.

Although this is sufficient to achieve what we wanted in the first place, there’s a slight problem. As this additional router is not part of the service descriptor, the Service Gateway doesn’t automatically publish its routes as endpoints.

However, we can quickly add an ACL (Access Control List) specific to this router in our service descriptor:

    named("hello")
      .withCalls(
        pathCall("/api/submit", submit _)
      )
      .withAutoAcl(true)
      .withAcls(ServiceAcl(pathRegex = Some("/api/play")))

This is good enough, and now we can access the endpoints that are part of the Play router through the Service Gateway:

    curl http://localhost:9000/api/play

As with Play, we don’t necessarily have to be aware of the fact that Lagom is built on top of Akka while building Lagom services. However, Akka provides quite a rich set of features that we may need to use directly. It’s possible to integrate Akka with Lagom and call Akka APIs directly from Lagom services and vice versa.

Akka is a set of open-source libraries maintained by Lightbend that we can use for designing scalable and resilient systems. Akka uses the Actor Model to provide a level of abstraction for developing concurrent, parallel, fault-tolerant, and distributed systems. The actor model helps it get rid of memory visibility issues while providing location transparency.

Lagom internally uses Akka libraries to provide several features. For instance, Lagom Persistence and Publish-Subscribe modules are built on top of Akka. Lagom provides clustering to microservices through Akka Cluster. Lagom also provides streaming asynchronous services using Akka Streams.

Akka by virtue of the actor model provides unique ways to write available, resilient, and responsive applications. While working on Lagom, we may need to access some of the Akka APIs directly to control how we achieve these attributes in our application precisely. Similarly, we may have opportunities to signal a Lagom service from an Akka actor.

Let’s go through some of the use cases which may prompt us to integrate Akka and Lagom:

It’s quite straightforward to integrate Akka with Lagom. Almost everything in Akka is accessible through an ActorSystem.

We can leverage the dependency injection in Lagom to inject the current ActorSystem in Lagom service implementations or persistence entities:

    class HelloServiceImpl(system: ActorSystem)(implicit ec: ExecutionContext)

Here, the default dependency injection in Lagom will take care of injecting the current ActorSystem in our service implementation.

Let’s say that we have to route incoming requests to nodes in the cluster based on some parameters of the request message.

First, we have to define the Actor, which will process the incoming requests:

    import akka.actor.{Actor, Props}
    import akka.event.Logging

    class Worker() extends Actor {
      private val log = Logging.getLogger(context.system, this)

      override def receive = {
        case job @ Job(id, task, payload) =>
          log.info("Working on job: {}", job)
          sender() ! JobAccepted(id)
          // perform the work...
      }
    }

    // Companion providing the Props used when starting the actor
    object Worker {
      def props: Props = Props(new Worker())
    }

This simple Actor does nothing but log the job details and acknowledge the job to the sender. Next, we can selectively start a worker actor on some or all nodes of the cluster:

    if (Cluster.get(system).selfRoles("worker-node")) {
      system.actorOf(Worker.props, "worker")
    }

Now, we have to create a router that can selectively channel the jobs to worker nodes in the cluster:

    val workerRouter = {
      val paths = List("/user/worker")
      val groupConf = ConsistentHashingGroup(paths, hashMapping = {
        case Job(_, task, _) => task
      })
      val routerProps = ClusterRouterGroup(
        groupConf,
        ClusterRouterGroupSettings(
          totalInstances = 1000,
          routeesPaths = paths,
          allowLocalRoutees = true,
          useRoles = Set("worker-node")
        )
      ).props()
      system.actorOf(routerProps, "workerRouter")
    }

Here, we’re using a ClusterRouterGroup built on a ConsistentHashingGroup. It uses the task attribute of the Job as the hash key, so jobs with the same task are routed to the same worker. Akka provides quite a few group routers, including ConsistentHashingGroup, BroadcastGroup, RandomGroup, and RoundRobinGroup, to name a few.
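To build some intuition for why the same task always lands on the same worker, the idea behind consistent hashing can be sketched with the standard library alone. This is an illustration of the general technique, not Akka’s implementation; HashRing, its parameters, and the worker names are hypothetical:

```scala
import scala.collection.immutable.TreeMap
import scala.util.hashing.MurmurHash3

// Illustrative hash ring; NOT how Akka implements ConsistentHashingGroup
final class HashRing(nodes: Seq[String], virtualNodes: Int = 10) {
  require(nodes.nonEmpty, "at least one node is required")
  require(virtualNodes > 0, "virtualNodes must be positive")

  // Each node is placed on the ring several times to spread the keys more evenly
  private val ring: TreeMap[Int, String] = TreeMap(
    (for {
      node <- nodes
      i    <- 0 until virtualNodes
    } yield MurmurHash3.stringHash(s"$node-$i") -> node): _*
  )

  // Picks the first ring entry at or after the key's hash, wrapping around at the end
  def nodeFor(key: String): String = {
    val hash = MurmurHash3.stringHash(key)
    ring.rangeFrom(hash).headOption.getOrElse(ring.head)._2
  }
}

object HashRingDemo {
  def main(args: Array[String]): Unit = {
    val ring = new HashRing(Seq("worker-a", "worker-b", "worker-c"))
    // The same key maps to the same node on every lookup
    println(ring.nodeFor("resize-image") == ring.nodeFor("resize-image")) // prints true
  }
}
```

In our router, the value returned by hashMapping (the task) plays the role of the key in this sketch.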

Finally, we’re ready to accept and route the jobs to the cluster nodes in our custom manner. We’ll modify the earlier implementation of our service to accommodate this:

    override def submit(): ServiceCall[Job, JobAccepted] = ServiceCall {
      job =>
        implicit val timeout: Timeout = Timeout(5.seconds)
        (workerRouter ? job).mapTo[JobAccepted]
    }

Here, we’re simply routing the incoming jobs to the cluster nodes using the router we created earlier, with a timeout of five seconds.
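The ask pattern used in this service call can also be tried outside Lagom. The following is a minimal sketch with plain Akka classic actors, assuming akka-actor is on the classpath; EchoWorker and AskDemo are hypothetical names, and the messages are redeclared without JSON formats so that the example stands alone:

```scala
import akka.actor.{Actor, ActorSystem, Props}
import akka.pattern.ask
import akka.util.Timeout
import scala.concurrent.Await
import scala.concurrent.duration._

final case class Job(jobId: String, task: String, payload: String)
final case class JobAccepted(jobId: String)

// Hypothetical stand-in for the Worker actor: it only acknowledges the job
class EchoWorker extends Actor {
  override def receive: Receive = {
    case Job(id, _, _) => sender() ! JobAccepted(id)
  }
}

object AskDemo {
  def main(args: Array[String]): Unit = {
    val system = ActorSystem("ask-demo")
    // The ask fails with a timeout if no reply arrives within this duration
    implicit val timeout: Timeout = Timeout(5.seconds)

    val worker = system.actorOf(Props(new EchoWorker), "worker")

    // `?` returns a Future[Any]; mapTo narrows it to the expected reply type
    val reply = (worker ? Job("42", "resize", "data")).mapTo[JobAccepted]
    println(Await.result(reply, 5.seconds)) // prints JobAccepted(42)

    system.terminate()
  }
}
```

Blocking with Await is only for the demo; in the service call, the Future is returned to Lagom as is.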

Now, we should be able to post our jobs as earlier, and these will be routed as defined by us.

Now, we’ll see how we can easily access Lagom APIs from Akka.

We’ll expand our example so that our simple Actor reports the progress of the workload execution. To achieve this, we’ll leverage Publish-Subscribe, a well-known messaging pattern. Lagom supports it through PubSubRegistry, which hands out PubSubRef instances that we can use to publish and subscribe to a topic.

We’ll begin by adding the required dependency in the sbt build file of the module “hello-impl”:

    libraryDependencies ++= Seq(
      lagomScaladslTestKit,
      lagomScaladslPubSub,
      macwire,
      scalaTest
    )

As before, we’ll use Lagom’s dependency injection to inject an instance of PubSubRegistry into our service implementation:

    class HelloServiceImpl(system: ActorSystem, pubSub: PubSubRegistry)(implicit ec: ExecutionContext)

We’ll also have to define the message that we’ll publish and subscribe to:

    case class JobStatus(jobId: String, jobStatus: String)

    object JobStatus {
      implicit val format: Format[JobStatus] = Json.format
    }

Now, we’ll modify the Actor we defined earlier to leverage PubSubRegistry and publish messages of the type JobStatus to a topic:

    class Worker(pubSub: PubSubRegistry) extends Actor {
      private val log = Logging.getLogger(context.system, this)

      override def receive = {
        case job @ Job(id, task, payload) =>
          log.info("Working on job: {}", job)
          sender() ! JobAccepted(id)
          val topic = pubSub.refFor(TopicId[JobStatus]("job-status"))
          topic.publish(JobStatus(job.jobId, "started"))
          // perform the work...
          topic.publish(JobStatus(job.jobId, "completed"))
      }
    }

    // The Props now need the registry, so the call site passes it along
    object Worker {
      def props(pubSub: PubSubRegistry): Props = Props(new Worker(pubSub))
    }

Here, we’re using the injected PubSubRegistry to get hold of a PubSubRef called topic. Then, we’re using PubSubRef#publish to send updates.

Now, we can stream the status updates through another service call. Let’s add a new call to our service interface:

    def submit(): ServiceCall[Job, JobAccepted]

    def status(): ServiceCall[NotUsed, Source[JobStatus, NotUsed]]

    override final def descriptor: Descriptor = {
      import Service._
      named("hello")
        .withCalls(
          pathCall("/api/submit", submit _),
          pathCall("/api/status", status _)
        )
        .withAutoAcl(true)
    }

Note that the response type of this new call is Source, an Akka Streams API that enables asynchronous streaming. We’ll also have to provide an implementation for the new method we have introduced here:

    override def status(): ServiceCall[NotUsed, Source[JobStatus, NotUsed]] = ServiceCall {
      _ =>
        val topic = pubSub.refFor(TopicId[JobStatus]("job-status"))
        Future.successful(topic.subscriber)
    }

The only thing remaining to do now is to update the Lagom application and introduce a new component called PubSubComponents:
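Since the subscriber is an ordinary Akka Streams Source, the usual stream operators can be applied to it before it is returned. As a sketch of that idea, the example below filters the updates for a single job. It assumes akka-stream 2.6 is on the classpath and uses a fixed list in place of topic.subscriber; updatesFor and StatusFilterDemo are hypothetical names:

```scala
import akka.NotUsed
import akka.actor.ActorSystem
import akka.stream.scaladsl.{Sink, Source}
import scala.concurrent.Await
import scala.concurrent.duration._

final case class JobStatus(jobId: String, jobStatus: String)

object StatusFilterDemo {
  // Keeps only the updates that belong to one job
  def updatesFor(jobId: String, all: Source[JobStatus, NotUsed]): Source[JobStatus, NotUsed] =
    all.filter(_.jobId == jobId)

  def main(args: Array[String]): Unit = {
    // In Akka 2.6, an implicit ActorSystem also provides the stream materializer
    implicit val system: ActorSystem = ActorSystem("status-demo")

    // A fixed list stands in for topic.subscriber in this sketch
    val all = Source(List(
      JobStatus("job-1", "started"),
      JobStatus("job-2", "started"),
      JobStatus("job-1", "completed")
    ))

    val seen = Await.result(updatesFor("job-1", all).runWith(Sink.seq), 5.seconds)
    println(seen.map(_.jobStatus).mkString(", ")) // prints started, completed

    system.terminate()
  }
}
```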

    abstract class HelloApplication(context: LagomApplicationContext)
      extends LagomApplication(context)
        with PubSubComponents
        with AhcWSComponents {
      // Provide service bindings as before
    }

Now, we are ready to access our service calls, post a workload, and get real-time updates. We’ll need a WebSocket client to access the following endpoint for real-time updates:

    ws://localhost:9000/api/status

Lagom is internally built on top of Play and Akka and hence depends on specific versions of their libraries. In a Lagom application, we may need to upgrade one or several of these libraries for specific reasons.

Lagom provides a convenient way to override and upgrade versions of these libraries. We can achieve this by providing dependencyOverrides in the sbt build file of the application.

Further, Lagom adds a number of dependencies directly as well as transitively. However, it does not include all the libraries that Akka has to offer. We have a choice to add any other dependency that we may need but is not already included.

We must, however, exercise caution not to mix incompatible versions. Akka has strict binary compatibility rules, and we must ensure that we do not break them. Also, we should keep in mind that sbt, when resolving conflicting versions of a dependency, picks the newest one, whether it is declared directly or pulled in transitively. The sbt-dependency-graph plugin is quite useful for analyzing project dependencies.
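As a sketch of what such an override could look like in our build, the fragment below pins a few Akka modules to one version and adds a module that Lagom does not bring in. The version number and the choice of modules are assumptions for illustration only; they must be checked against the Akka version of the Lagom release in use:

```scala
// build.sbt (fragment)
// The version is an assumption for illustration; pick one that is binary
// compatible with the Akka version your Lagom release was built against.
val akkaVersion = "2.6.5"

lazy val `hello-impl` = (project in file("hello-impl"))
  .enablePlugins(LagomScala)
  .settings(
    // Force a single version for Akka modules that Lagom already pulls in
    dependencyOverrides ++= Seq(
      "com.typesafe.akka" %% "akka-actor"   % akkaVersion,
      "com.typesafe.akka" %% "akka-stream"  % akkaVersion,
      "com.typesafe.akka" %% "akka-cluster" % akkaVersion
    ),
    // Add an Akka module that Lagom does not include, at the same version
    libraryDependencies += "com.typesafe.akka" %% "akka-cluster-metrics" % akkaVersion
  )
  .dependsOn(`hello-api`)
```

Keeping every Akka module on the same version avoids mixing artifacts that are not meant to work together.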

To sum up, in this tutorial, we discussed the Lagom framework and created a very simple application with Lagom. We also understood how Lagom builds upon Play and Akka.

Further, we explored the reasons and ways in which we can access parts of Play and Akka from Lagom directly. We extended our simple application to understand these integrations.